Akto Partners with Portkey to Bring Guardrails to AI Gateway

Teams are no longer just experimenting with LLMs. They’re shipping AI agents, MCP-connected workflows, and production GenAI features that call real tools, access internal systems, and move data across multiple layers.

To manage that complexity, many engineering teams are putting AI gateways in front of their models to handle routing, fallbacks, logging, guardrails, caching, and provider management across OpenAI, Anthropic, open-source models, and more.

But once your gateway becomes the control plane for agent traffic, it also becomes the best place to answer critical security questions:

- Which agent flows are hitting which models?

- What data is being sent in prompts and responses?

- Which tool calls or MCP interactions expose risk?

- Where should security testing happen without slowing developers down?

That’s exactly what the new Akto + Portkey partnership enables.

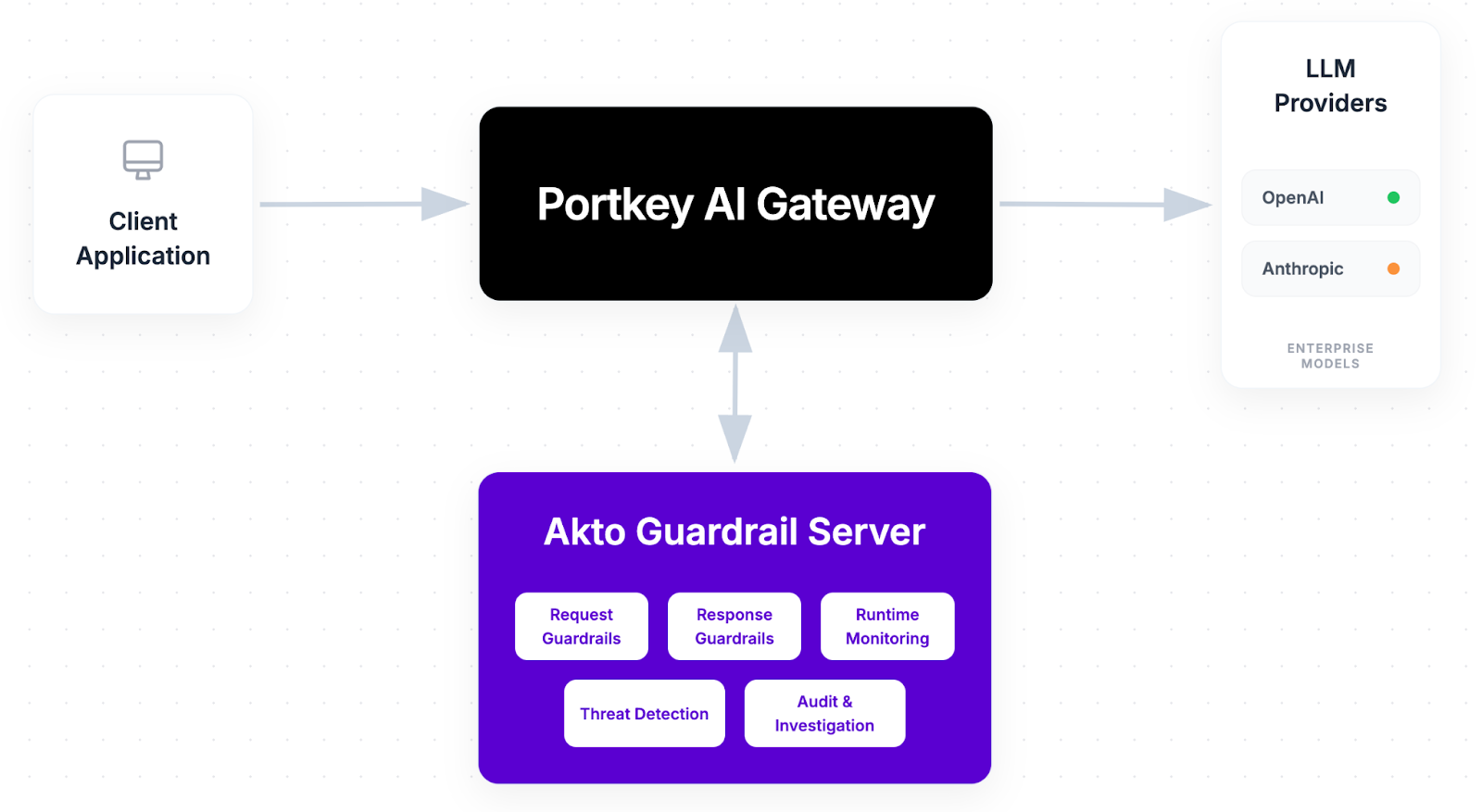

Two Platforms, One Unified Layer of AI Gateway + Security

Akto is the security platform for AI agents, MCPs, and LLMs built or used by teams across enterprises. Akto provides visibility and risk assessments, and enforces guardrails to block prompt injection, jailbreaks, data exposure, and other malicious actions. The platform gives security and engineering teams control over their AI agents.

Together, Portkey's AI Gateway and Akto give teams a unified layer for AI traffic management and security enforcement.

Security Configured Once, Enforced Everywhere

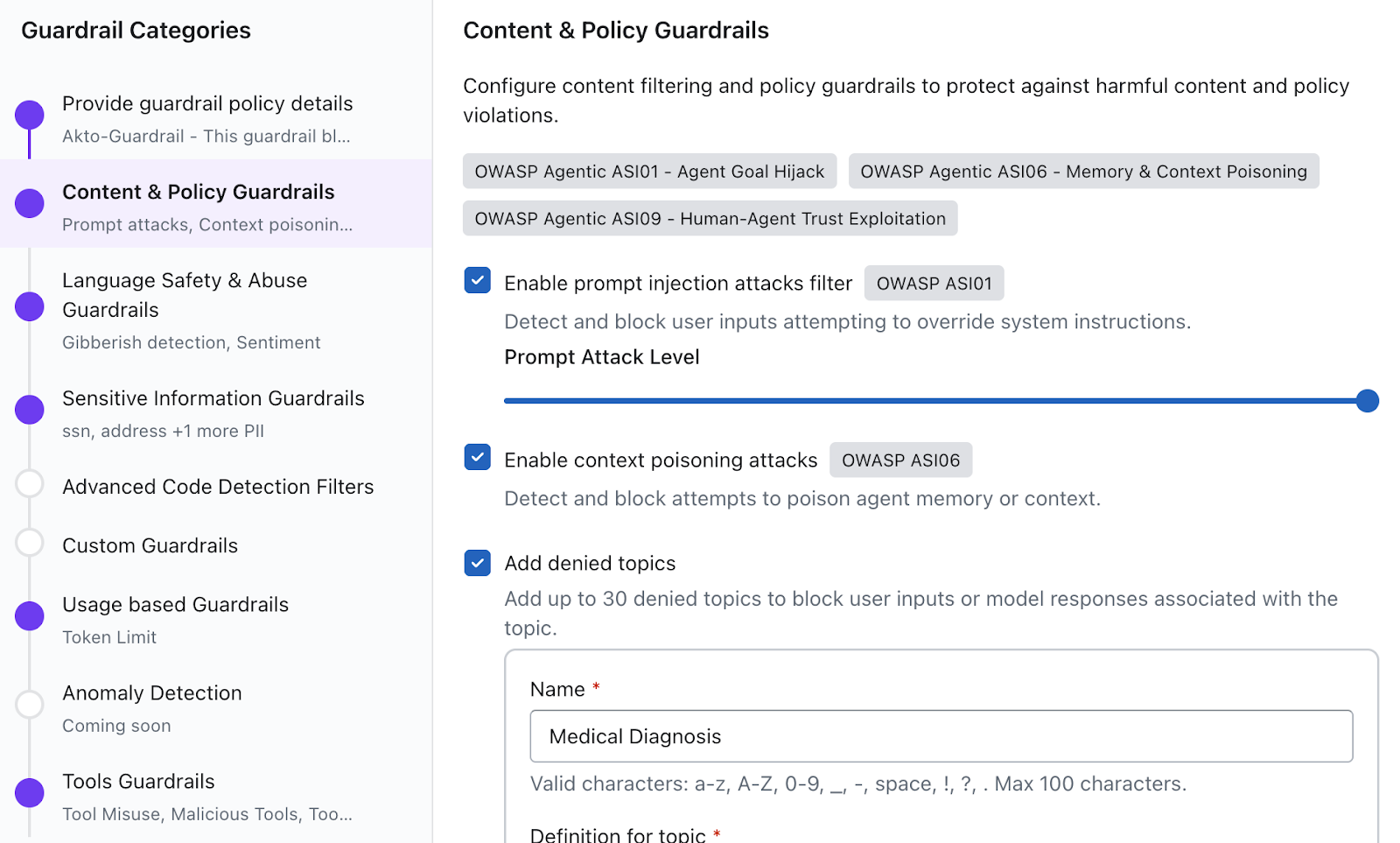

Akto is the native guardrail layer inside Portkey, configurable in minutes through the Guardrails module. Define your policies once at the gateway level, and every application routing through Portkey picks them up automatically. No additional code needed per application.

With this partnership, security is no longer bolted on after the fact. It's built into the gateway itself.

Real-Time Inspection on Every Prompt and Response

Once set up, Akto Agentic AI Security inspects every prompt and response flowing through Portkey in real time:

- Prompt injection and jailbreak attempts are detected and blocked before reaching your model

- Sensitive data, including PII, credentials, and internal context, is identified and redacted in both inputs and outputs

- Harmful or policy-violating model responses are caught before they reach end users

- Agentic AI workflows are covered by Akto Argus, which monitors threats across multi-step agent executions, not just individual LLM calls

Security teams get full visibility through Akto's dashboard. Engineering teams continue monitoring through Portkey's existing logs. No one has to change how they work.

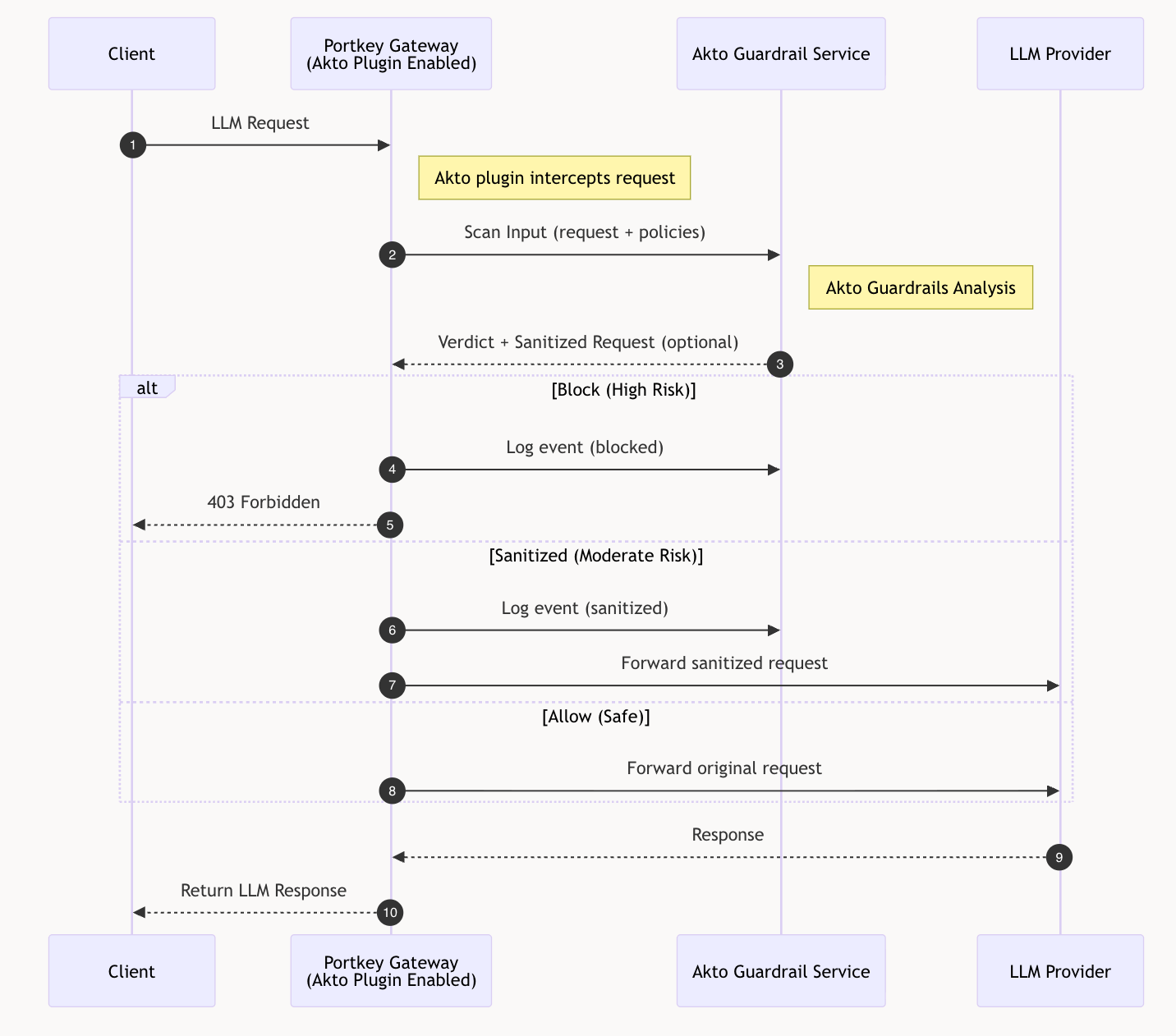

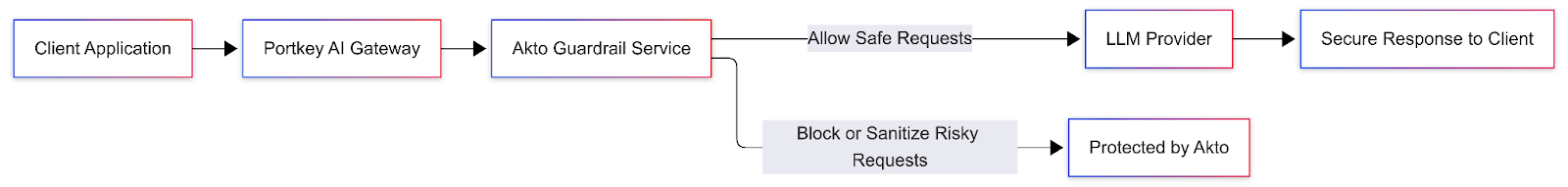

How It Works: Portkey + Akto Guardrails

Setting up the integration takes minutes. Within Portkey's guardrails configuration, teams can add Akto as a provider and define when Akto's checks run: on input, on output, or both.

Once live, Portkey routes each LLM call through Akto's inspection pipeline.
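
To make the input/output staging concrete, here is a minimal sketch of how a gateway might wrap a model call with checks on either side. It is not Portkey's or Akto's actual API: `GuardrailStages`, `guarded_call`, `no_injection`, and `echo_model` are hypothetical names invented for illustration, and the string-matching check is a stand-in for a real detection engine.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical check signature: returns True when the text passes the check.
Check = Callable[[str], bool]


@dataclass
class GuardrailStages:
    """Which checks run on the prompt, on the response, or on both."""
    input_checks: List[Check] = field(default_factory=list)
    output_checks: List[Check] = field(default_factory=list)


def guarded_call(prompt: str, model: Callable[[str], str], stages: GuardrailStages) -> str:
    # Input stage: stop the request before it ever reaches the model.
    if not all(check(prompt) for check in stages.input_checks):
        return "[blocked: input guardrail]"
    response = model(prompt)
    # Output stage: stop the response before it reaches the application.
    if not all(check(response) for check in stages.output_checks):
        return "[blocked: output guardrail]"
    return response


def no_injection(text: str) -> bool:
    # Toy stand-in for a real prompt-injection detector.
    return "ignore previous instructions" not in text.lower()


def echo_model(prompt: str) -> str:
    # Stand-in for an LLM call so the sketch runs offline.
    return f"echo: {prompt}"


# Run the same check on both sides of the model call.
stages = GuardrailStages(input_checks=[no_injection], output_checks=[no_injection])
```

Because the stages live in one object at the gateway, the calling application only ever sees `guarded_call`; changing which checks run on input or output does not touch application code.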

Akto AI Agent guardrails that can run through Portkey

Akto applies runtime guardrails to the traffic flowing through the gateway, helping teams enforce policy before requests reach the model and before responses reach the application.

These guardrails can include:

- Prompt injection protection to detect and stop prompt injection and jailbreak attempts

- Sensitive data protection to identify and redact PII, credentials, secrets, tokens, and custom regex-based patterns

- Harmful content filtering to block unsafe or disallowed categories in prompts or responses

- Denied topics to prevent restricted or regulated use cases from being handled by the model

- Custom word and phrase filters based on internal policy or business-specific requirements

- Intent-aware checks that help ensure agent behavior stays within expected scope, not just text-based input/output rules
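
As an illustration of the regex-based side of sensitive data protection, the sketch below redacts matches for a small set of patterns. The patterns, labels, and the `redact` helper are assumptions made for this example; they are not Akto's detection rules, and production detectors typically combine many more signals than two regular expressions.

```python
import re
from typing import Dict, Pattern

# Hypothetical patterns for illustration only.
PATTERNS: Dict[str, Pattern[str]] = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # Assumed key format: "sk-" followed by 16 or more alphanumerics.
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}


def redact(text: str, patterns: Dict[str, Pattern[str]] = PATTERNS) -> str:
    """Replace every match of each pattern with a labeled placeholder."""
    for label, pattern in patterns.items():
        text = pattern.sub(f"[{label}_REDACTED]", text)
    return text


if __name__ == "__main__":
    print(redact("Contact jane@example.com with key sk-abcdefghijklmnop1234"))
```

A custom pattern for an internal identifier can be added by passing a dictionary with an extra entry, which mirrors the idea of policy-specific patterns without changing the redaction logic.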

For agentic systems, Akto extends beyond prompt and response inspection. Its runtime guardrails are built to evaluate tool invocations, MCP requests, and agent actions, not just standalone LLM calls. Akto’s AgentGuard is designed as a runtime enforcement layer for AI agents, MCPs, tools, and LLM interactions, enabling policy checks before actions execute.

What happens after a guardrail check

Based on the guardrail result, Portkey can take different actions:

- Allow the request or response to continue

- Block the request and return a fallback response

- Redact sensitive fields before data reaches the model or downstream application

This gives teams flexibility, from a hard block for high-severity threats to soft logging for lower-risk signals, and everything in between.
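
The three outcomes above can be sketched as a small dispatch function. This is an illustrative model rather than either product's real response format: `Action`, `Verdict`, `FALLBACK`, and `apply_verdict` are hypothetical names, and the choice to fall back when a redaction verdict carries no redacted text is an assumption made here to keep the sketch fail-closed.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REDACT = "redact"


@dataclass
class Verdict:
    """Hypothetical result of a guardrail check."""
    action: Action
    redacted_text: Optional[str] = None


# Assumed fallback message returned in place of blocked content.
FALLBACK = "This request was blocked by policy."


def apply_verdict(text: str, verdict: Verdict) -> str:
    """Return what should continue downstream for a given verdict."""
    if verdict.action is Action.BLOCK:
        return FALLBACK
    if verdict.action is Action.REDACT:
        # Fail closed: without a redacted version, do not pass the original on.
        if verdict.redacted_text is None:
            return FALLBACK
        return verdict.redacted_text
    return text  # Action.ALLOW: pass through unchanged
```

For example, `apply_verdict("my SSN is 123-45-6789", Verdict(Action.REDACT, "my SSN is [REDACTED]"))` forwards the redacted string, while a `BLOCK` verdict replaces the content with the fallback message.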

One layer for traffic control and security, org-wide

For Akto customers, this partnership means you can apply your security policies consistently across all AI traffic (every internal application, every customer-facing agent, every model provider) through a single integration point. No additional proxies. No per-application work.

For Portkey customers, this partnership makes it easier to add production-grade AI security directly at the gateway layer, without introducing another proxy, custom middleware, or per-application security logic.

For teams new to both platforms, this is what production AI infrastructure looks like when security and governance are built in from the start: one layer that handles routing, reliability, observability, and protection across your entire AI footprint.

Built for Teams Shipping AI Agents in Production

This partnership reflects a shared belief from both teams: security and developer velocity should not be at odds.

As teams move from simple LLM wrappers to AI agents, MCP-connected workflows, and multi-model production systems, security needs to live in the same layer where traffic is already being routed and controlled.

That’s why this partnership matters. By bringing Akto directly into Portkey’s gateway layer, teams can secure real AI systems, not just isolated model calls, without changing how they build.

Getting started takes minutes. Check out the Akto connector docs for Portkey and Portkey's Akto guardrail documentation to get up and running.