Who owns Claude Code at your company? A platform team's guide to managing coding agents at scale

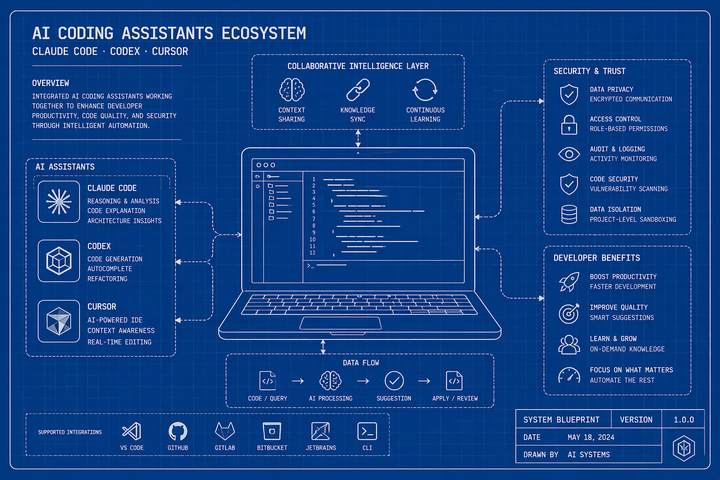

Coding-agent harnesses are converging. Context is what separates teams. A platform team's guide to owning Claude Code, Cursor, and Codex across your engineering org.