The best approach to comparing LLM outputs

Once LLMs are in production, output quality stops being a subjective question and becomes an operational one. Teams are no longer asking whether they need to evaluate outputs, but how to do it reliably as systems evolve.

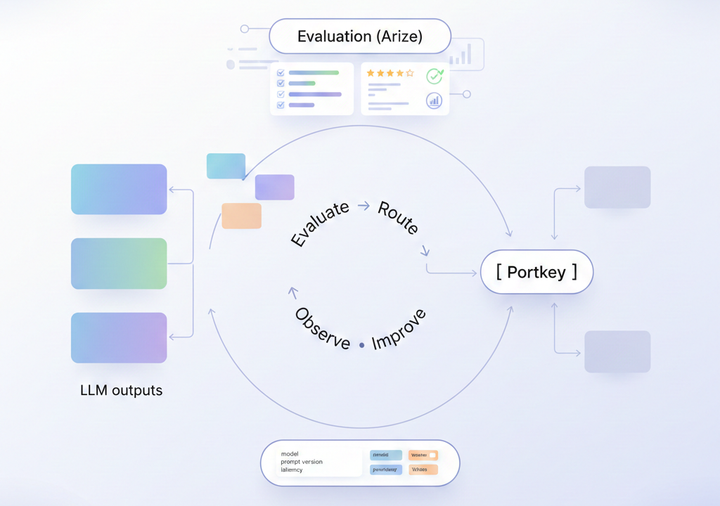

Production systems can change frequently. Prompts are iterated on, models are swapped, routing