Benefits of using MCP over traditional integration methods

Discover how the Model Context Protocol (MCP) enhances AI integration by enabling real-time data access, reducing computational overhead, and improving security.

Connecting AI to external data sources has always been a challenge. Traditional methods rely on pre-indexed databases, embeddings, or API-specific integrations, which slow things down, increase costs, and create security risks. The Model Context Protocol (MCP) changes that by providing real-time access to data without extra storage or complex connectors. Let’s break down why MCP is the smarter choice.

Always access the latest data

MCP retrieves data in real time instead of working with pre-cached or indexed datasets that quickly become outdated. This means AI systems always work with fresh information, reducing the risk of incorrect or stale responses.

Better security and compliance

Storing intermediary data increases exposure to breaches and compliance risks. MCP reduces that exposure by pulling data only when it is needed instead of keeping unnecessary copies. This is especially useful in industries that handle sensitive data, such as healthcare and finance, where regulatory compliance is a priority.

Lower computational overhead

Many AI systems use embeddings and vector databases to pre-process information. While effective, this requires significant resources. MCP reduces this burden by letting models request only the necessary data in real time, cutting down on computation costs and improving performance.

Scales without extra work

Traditional methods require a custom-built connector for each platform, adding complexity. MCP defines a standard protocol, so an AI application that supports MCP can connect to any system exposed through an MCP server without bespoke integration work. This makes it easier to scale across multiple AI workflows.
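A rough way to see the scaling difference, assuming every AI application needs access to every system: with $N$ applications and $M$ systems, point-to-point integration needs one connector per pair. With a shared protocol, each application implements MCP client support once and each system is exposed through one MCP server. For example, with 3 applications and 5 systems:

```latex
\text{custom connectors} = N \times M = 3 \times 5 = 15
\qquad
\text{MCP implementations} = N + M = 3 + 5 = 8
```

The gap widens as either number grows, because the first count is a product and the second is a sum.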

Simplifies development and maintenance

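With MCP, developers don’t need to maintain a separate API connector for every external system in every application. This speeds up development and reduces maintenance effort: when an external API changes, the update is made once in the MCP server that wraps it, and the applications connected to that server keep working unchanged.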
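To make the single-protocol idea concrete, here is a minimal Python sketch. It is not a real MCP SDK. It assumes only what the MCP specification defines for tool calls: JSON-RPC 2.0 messages with the `tools/call` method, a `params` object carrying `name` and `arguments`, and a `result` holding a `content` list. The `crm_server` and `ticket_server` functions, their tool names, and their data are hypothetical stand-ins for real MCP servers, and plain functions stand in for real transports. The point is that one `call_tool` function works against both.

```python
import json
from typing import Callable

# In this sketch a "transport" is any function that takes a JSON-RPC request
# string and returns a response string. Real MCP clients talk to servers over
# transports such as stdio or HTTP; plain functions stand in for them here.
Transport = Callable[[str], str]


def call_tool(transport: Transport, request_id: int, name: str, arguments: dict) -> dict:
    """Send one MCP `tools/call` request and return its `result` object."""
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    response = json.loads(transport(json.dumps(request)))
    if "error" in response:
        # JSON-RPC protocol error, e.g. an unknown tool name.
        raise RuntimeError(response["error"]["message"])
    return response["result"]


def text_result(request_id: int, text: str) -> str:
    """Helper for the hypothetical servers below: wrap text as a tool result."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    })


# Hypothetical in-memory data behind the ticket server.
TICKETS = [{"id": 1, "priority": "high"}, {"id": 2, "priority": "low"}]


# Two hypothetical servers fronting different systems. Each owns its
# system-specific logic (and, for brevity, ignores the tool name);
# the client code above never changes.
def crm_server(raw: str) -> str:
    req = json.loads(raw)
    customer = req["params"]["arguments"]["customer_id"]
    return text_result(req["id"], f"Customer {customer} is active")


def ticket_server(raw: str) -> str:
    req = json.loads(raw)
    priority = req["params"]["arguments"]["priority"]
    count = sum(1 for t in TICKETS if t["priority"] == priority)
    return text_result(req["id"], f"{count} open ticket(s) with priority {priority}")


crm = call_tool(crm_server, 1, "lookup_customer", {"customer_id": "C-42"})
tickets = call_tool(ticket_server, 2, "count_tickets", {"priority": "high"})
print(crm["content"][0]["text"])      # Customer C-42 is active
print(tickets["content"][0]["text"])  # 1 open ticket(s) with priority high
```

Supporting another system means adding another server; `call_tool` stays the same.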

More adaptable AI with better context awareness

MCP lets AI applications discover the tools and data sources a server offers at runtime and adjust as those change. This allows AI systems to stay responsive to evolving needs without constant reconfiguration.
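Discovery works through the protocol itself: a client sends a `tools/list` request, and the server replies with each tool's `name`, `description`, and `inputSchema` (a JSON Schema for its arguments). The specification also defines a `notifications/tools/list_changed` message that servers can send when their tool list changes. The sketch below uses only the Python standard library and assumes a hypothetical sensor server; the tool names and schemas are illustrative. It indexes a sample response and uses the schema's `required` list to check arguments before calling a tool.

```python
import json


def discover_tools(response_json: str) -> dict:
    """Index the tools listed in an MCP `tools/list` response by name."""
    response = json.loads(response_json)
    if "error" in response:
        raise RuntimeError(response["error"]["message"])
    return {tool["name"]: tool for tool in response["result"]["tools"]}


def missing_arguments(tool: dict, arguments: dict) -> list:
    """Return required input fields (per the tool's JSON Schema) that `arguments` lacks."""
    required = tool.get("inputSchema", {}).get("required", [])
    return [field for field in required if field not in arguments]


# Hypothetical response from a server; tool names and schemas are illustrative.
sample_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "get_sensor_reading",
                "description": "Read the latest value from a sensor",
                "inputSchema": {
                    "type": "object",
                    "properties": {"sensor_id": {"type": "string"}},
                    "required": ["sensor_id"],
                },
            },
            {
                "name": "list_sensors",
                "description": "List available sensors",
                "inputSchema": {"type": "object", "properties": {}},
            },
        ]
    },
})

tools = discover_tools(sample_response)
print(sorted(tools))  # ['get_sensor_reading', 'list_sensors']
print(missing_arguments(tools["get_sensor_reading"], {}))  # ['sensor_id']
```

Because the client reads capabilities from the server at runtime, a newly added tool shows up on the next `tools/list` call without any change to this code.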

How MCP enables real-time AI interactions

MCP allows LLMs to interact with external databases, APIs, and file systems. It works by:

- Transforming queries: Lets the model turn a natural language request into a structured call, such as a SQL query sent to a database tool, for precise data retrieval.

- Retrieving live data: Pulls information directly from external systems instead of relying on preloaded datasets.

- Supporting two-way communication: Beyond retrieving data, lets the model trigger actions in external systems.

- Merging results: Feeds the retrieved data back into the model’s context so its responses combine live information with what it already knows.
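The retrieval steps above can be sketched end to end in Python with only the standard library. This is an illustration under stated assumptions, not how any particular MCP server is implemented. An in-memory SQLite table stands in for a live external database, and `run_query` is a hypothetical tool name. The `tools/call` request is hard-coded where a model would normally generate it, and the chat-style `context` list is a hypothetical format rather than any specific LLM API. The `content`/`isError` result shape follows the MCP tool-result format.

```python
import json
import sqlite3


def open_demo_db() -> sqlite3.Connection:
    """Hypothetical live data source: an in-memory SQLite table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE readings (sensor TEXT, value REAL)")
    conn.executemany(
        "INSERT INTO readings VALUES (?, ?)",
        [("temp-1", 21.5), ("temp-2", 19.0)],
    )
    return conn


def handle_tools_call(conn: sqlite3.Connection, request: dict) -> dict:
    """Server side of a hypothetical `run_query` tool: execute SQL against live data."""
    params = request["params"]
    if params["name"] != "run_query":
        # Unknown tools are reported as JSON-RPC errors (-32602 = invalid params).
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"}}
    sql = params["arguments"]["sql"]
    if not sql.lstrip().upper().startswith("SELECT"):
        # Naive read-only guard for illustration; tool-level failures are
        # returned as normal results flagged with isError.
        text, is_error = "Only SELECT statements are allowed", True
    else:
        rows = conn.execute(sql).fetchall()  # live read at request time
        text, is_error = json.dumps(rows), False
    return {"jsonrpc": "2.0", "id": request["id"],
            "result": {"content": [{"type": "text", "text": text}], "isError": is_error}}


def merge_into_context(context: list, response: dict) -> list:
    """Append the tool's output to a (hypothetical) chat-style context list."""
    if "error" in response:
        return context + [{"role": "tool", "content": response["error"]["message"]}]
    texts = [item["text"] for item in response["result"]["content"] if item["type"] == "text"]
    return context + [{"role": "tool", "content": "\n".join(texts)}]


conn = open_demo_db()
context = [{"role": "user", "content": "What is sensor temp-1 reading right now?"}]

# Stand-in for the model turning the question into a structured tool call.
request = {
    "jsonrpc": "2.0",
    "id": 7,
    "method": "tools/call",
    "params": {"name": "run_query",
               "arguments": {"sql": "SELECT sensor, value FROM readings WHERE sensor = 'temp-1'"}},
}
response = handle_tools_call(conn, request)
context = merge_into_context(context, response)
print(context[-1]["content"])  # [["temp-1", 21.5]]
```

The `SELECT`-only check is deliberately naive; in practice, read-only database credentials are a more reliable way to limit what a query tool can do.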

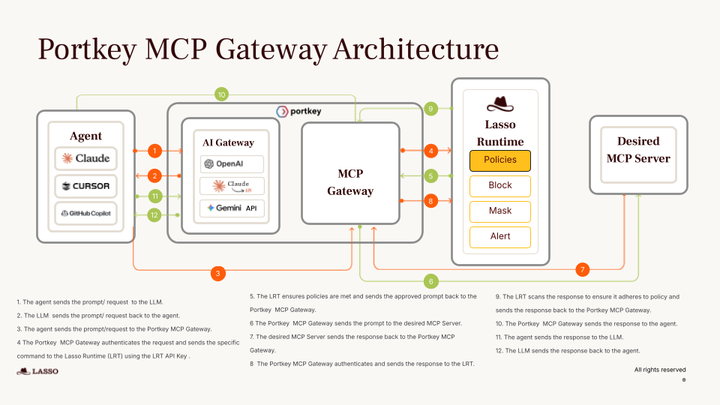

Portkey's MCP client simplifies the development of AI agents by providing seamless integration with over 800 tools. The platform enables AI models to connect with various data sources and APIs through straightforward prompts, eliminating the need for complex configurations.

This approach streamlines the development process, allowing for efficient and secure interactions with databases, APIs, and code execution environments.

Where MCP works best

MCP is useful in any application that relies on real-time insights, such as:

- Financial modeling – AI-driven forecasts based on the latest market data.

- IoT analytics – Instant decision-making based on live sensor readings.

- Cybersecurity – Dynamic threat detection and response.

Final thoughts

MCP isn’t just another way to connect AI with data; it solves real problems with outdated integration methods. By improving performance, security, and flexibility, it makes AI systems more capable and easier to manage.

If your AI applications rely on external data, MCP can make them faster, more secure, and less hassle to maintain.