MCP vs Function Calling – How They Actually Work Together

Understand the real difference between MCP and function calling. Explore architecture, vendor lock-in issues, security tradeoffs, & when teams should adopt MCP.

MCP vs function calling is often framed as a choice between one or the other. In real production architectures, that’s rarely the case. MCP and function calling solve different problems at different layers of the stack.

Function calling lets a model express what it wants to do by returning structured tool requests. Model Context Protocol (MCP) standardizes how those requests get executed across tools, services, and providers.

In reality, MCP and function calling complement each other.

This post breaks down how the two fit together, how their architectures differ, and when to use function calling, MCP, or both in production AI systems.

What function calling and MCP actually do

The simplest way to understand MCP vs function calling is to view them as two phases of the same interaction.

Function calling is Phase 1, which is intent generation. When an LLM decides it needs external data or an action, it returns structured JSON specifying which function to call and the arguments required. The model itself never executes the function. Instead, the application reads the structured output, runs the corresponding code, and returns the result to the model.

MCP is Phase 2, which standardizes execution infrastructure. The Model Context Protocol is an open client-server standard built on JSON-RPC 2.0 that defines how tools are discovered, invoked, and managed across applications and LLM providers. Rather than embedding tool logic directly inside an app, MCP exposes capabilities through servers that clients can connect to.

MCP defines three core primitives: Tools, Resources, and Prompts. Tools represent executable actions, while Resources provide contextual data and Prompts define reusable interaction templates – two capabilities that have no direct equivalent in traditional function calling.

⭐ The key point is that MCP does not replace function calling. Function calling is how the model expresses what it wants to do. MCP is the infrastructure that makes those requests portable, discoverable, and executable across systems.

How the architecture differs under the hood

Structurally, function calling keeps everything inside the application loop, while MCP separates tool execution into a client-server system.

💭 Note that some platforms and docs now use “tool calling” and “function calling” interchangeably. The mechanism is the same – the model emits a structured output that tells the application which tool to execute.

The function calling loop

With function calling, developers define tools in the application using JSON schemas (name, description, parameters). These schemas are sent to the model in API requests via the tools parameter. The model can then choose the most appropriate tool and return structured JSON arguments for the function call. The application reads that response, executes the function locally, and sends the result back into the conversation.
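The loop described above can be sketched in a few lines. This is a minimal illustration, not any provider’s SDK: the `get_stock_price` implementation, its return values, and the shape of `model_output` are assumptions made for the example, and no real model API is called.

```python
import json

# Tool schema sent to the model: name, description, and JSON Schema parameters.
GET_STOCK_PRICE_SCHEMA = {
    "name": "get_stock_price",
    "description": "Return the latest price for a stock ticker.",
    "parameters": {
        "type": "object",
        "properties": {"ticker": {"type": "string"}},
        "required": ["ticker"],
    },
}


def get_stock_price(ticker: str) -> dict:
    # Hypothetical local implementation; a real one would query a market data API.
    return {"ticker": ticker, "price": 123.45}


# Dispatch table: tool name -> local Python callable.
TOOLS = {"get_stock_price": get_stock_price}


def dispatch(tool_request: dict) -> str:
    """Run the tool the model asked for and return the result as a JSON string."""
    name = tool_request["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    arguments = json.loads(tool_request["arguments"])
    return json.dumps(TOOLS[name](**arguments))


# Stand-in for the structured output a model might return (assumed shape).
model_output = {"name": "get_stock_price", "arguments": '{"ticker": "AAPL"}'}
result = dispatch(model_output)  # this string is what gets sent back to the model
```

Note that the schema, the implementation, and the dispatch logic all sit in the same module, which is exactly the coupling the rest of this section discusses.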

Tools do not need to be included in every request – developers can choose when to provide or restrict them.

Everything happens in one process: tool schemas, execution logic, credentials, and error handling all live inside the application. In simpler architectures, adding a new tool may require updating application code and redeploying it, but tools can also be loaded dynamically at runtime without redeployment, depending on the system design.

MCP’s client-server model

MCP separates tool definitions from the application entirely. Instead of embedding tools in the AI app, they run inside MCP servers that expose capabilities over a standardized protocol.

Three components participate in the architecture:

- MCP Host – the AI application (e.g., Claude Desktop, Claude Code, or VS Code).

- MCP Client – lives inside the host and maintains a one-to-one connection to a single MCP server.

- MCP Server – a separate process exposing tools, resources, and prompts.

Here, the tool lives in its own process, independent from the AI application. The host connects through an MCP client and discovers tools dynamically.

That discovery mechanism is something traditional function calling doesn’t provide. MCP clients can call tools/list to see which capabilities are available at runtime. If a server adds or removes tools, it sends notifications/tools/list_changed, allowing clients to update automatically – no code changes required.
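The discovery flow can be sketched as plain JSON-RPC messages. The method names come from the text above; the `ToolCache` class and the sample server response are hypothetical and only illustrate how a client might track the tool list, not how any particular SDK does it.

```python
def tools_list_request(request_id: int) -> dict:
    """Build the JSON-RPC 2.0 request a client sends to discover tools."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


class ToolCache:
    """Hypothetical client-side cache of the tools a server advertises."""

    def __init__(self) -> None:
        self.tools = {}
        self.stale = True  # nothing fetched yet

    def handle_message(self, message: dict) -> None:
        if message.get("method") == "notifications/tools/list_changed":
            # The server changed its tool set: mark the cache for a refetch.
            self.stale = True
        elif "tools" in message.get("result", {}):
            # A tools/list response: replace the cached descriptors.
            self.tools = {tool["name"]: tool for tool in message["result"]["tools"]}
            self.stale = False


# Sample response a server might return (illustrative values).
sample_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "get_stock_price",
                "description": "Return the latest price for a stock ticker.",
                "inputSchema": {
                    "type": "object",
                    "properties": {"ticker": {"type": "string"}},
                    "required": ["ticker"],
                },
            }
        ]
    },
}

cache = ToolCache()
cache.handle_message(sample_response)
cache.handle_message({"jsonrpc": "2.0", "method": "notifications/tools/list_changed"})
```

After the notification, the cache is marked stale again, so the client would send another `tools/list` request rather than requiring a code change.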

MCP also supports multiple transport layers. stdio is used for local servers with near-zero overhead, while Streamable HTTP enables remote servers with OAuth and network authentication. Both use the same JSON-RPC 2.0 message format, making tool execution consistent regardless of where the server runs.
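To make the "same message, different transport" point concrete, here is a rough sketch of how one JSON-RPC message could be framed for each transport. The helper names are hypothetical, and the framing is simplified: it assumes newline-delimited JSON over stdio and a JSON body in an HTTP POST, and it leaves out session handling, authentication, and streaming responses.

```python
import json


def frame_for_stdio(message: dict) -> bytes:
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    # json.dumps without indent never emits raw newlines; they are escaped in strings.
    return (json.dumps(message) + "\n").encode("utf-8")


def frame_for_http(message: dict) -> tuple:
    """Return (headers, body) for an HTTP POST carrying the same message."""
    headers = {
        "Content-Type": "application/json",
        # The client signals it can accept a plain JSON reply or an event stream.
        "Accept": "application/json, text/event-stream",
    }
    return headers, json.dumps(message).encode("utf-8")


request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
stdio_bytes = frame_for_stdio(request)
http_headers, http_body = frame_for_http(request)
```

The payload is identical in both cases; only the envelope around it changes.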

This architectural separation is the core difference: function calling embeds tools directly inside an application loop, while MCP turns tools into network-addressable capabilities that any compatible AI client can discover and invoke.

The vendor lock-in problem across OpenAI, Anthropic, Gemini, and Llama

One of the biggest hidden costs of function calling is format fragmentation across LLM providers. Every major model vendor implements tool invocation slightly differently. On the surface, the capability looks the same – models request a tool with arguments – but the underlying JSON structure varies enough that switching providers requires real engineering work.

Four providers, four incompatible formats

Consider the same operation: an LLM requesting a get_stock_price function. The intent is identical across providers, but the response format differs.

Each provider requires different parsing logic, different response handling, and different dispatch code. A system written for OpenAI’s tool_calls structure won’t work with Anthropic’s tool_use format without rewriting the integration layer.

That means switching providers involves more than swapping an API key. Teams must adjust response parsing, update error handling, and re-test how tool calls are dispatched across their application.
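As a rough illustration of the parsing differences, the sketch below normalizes the same `get_stock_price` request from three response shapes. The message shapes are simplified versions of the public OpenAI, Anthropic, and Gemini formats as commonly documented, so check the current provider docs before relying on them. Llama is omitted because its tool-call format depends on the serving stack. The `from_*` helper names and the IDs are made up for the example.

```python
import json

# OpenAI-style assistant message: arguments arrive as a JSON-encoded string.
openai_message = {
    "role": "assistant",
    "tool_calls": [
        {
            "id": "call_abc123",
            "type": "function",
            "function": {"name": "get_stock_price", "arguments": '{"ticker": "AAPL"}'},
        }
    ],
}

# Anthropic-style assistant message: a tool_use content block with a parsed input object.
anthropic_message = {
    "role": "assistant",
    "content": [
        {
            "type": "tool_use",
            "id": "toolu_abc123",
            "name": "get_stock_price",
            "input": {"ticker": "AAPL"},
        }
    ],
}

# Gemini-style content: a functionCall part with an args object.
gemini_content = {
    "role": "model",
    "parts": [{"functionCall": {"name": "get_stock_price", "args": {"ticker": "AAPL"}}}],
}


def from_openai(message: dict) -> list:
    return [
        (call["function"]["name"], json.loads(call["function"]["arguments"]))
        for call in message.get("tool_calls") or []
    ]


def from_anthropic(message: dict) -> list:
    return [
        (block["name"], block["input"])
        for block in message["content"]
        if block.get("type") == "tool_use"
    ]


def from_gemini(content: dict) -> list:
    return [
        (part["functionCall"]["name"], part["functionCall"]["args"])
        for part in content["parts"]
        if "functionCall" in part
    ]


normalized = [
    from_openai(openai_message),
    from_anthropic(anthropic_message),
    from_gemini(gemini_content),
]
```

All three parsers produce the same `(name, arguments)` pair, but each needs its own code path, which is the integration work described above.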

How MCP eliminates format fragmentation

The Model Context Protocol addresses this problem at the execution layer. Instead of every provider defining its own tool invocation format, MCP standardizes how tools are discovered and executed through a single protocol.

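In MCP, tools are invoked using the universal tools/call JSON-RPC method. Any MCP-compatible client can call any MCP server regardless of which LLM generated the request. The host still has to translate each provider’s tool request into a tools/call message, but that translation lives in one place instead of inside every tool integration. If a team switches from OpenAI to Claude, the MCP servers and tools remain unchanged – the client simply points to the same servers.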
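A `tools/call` exchange might look like the sketch below. The helper functions and the sample response text are assumptions for illustration. The message fields follow the MCP specification as commonly documented, where a tool result carries a list of content blocks and an `isError` flag.

```python
def tools_call_request(request_id: int, name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 tools/call request for any MCP server."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def extract_text(response: dict) -> str:
    """Pull the text content out of a tools/call response, raising on failure."""
    if "error" in response:
        # Protocol-level failure, for example an unknown method or bad params.
        raise RuntimeError(response["error"].get("message", "JSON-RPC error"))
    result = response["result"]
    text = "".join(block["text"] for block in result["content"] if block["type"] == "text")
    if result.get("isError"):
        # The tool itself ran and reported a failure.
        raise RuntimeError(text)
    return text


request = tools_call_request(2, "get_stock_price", {"ticker": "AAPL"})

# Illustrative response; a real server decides what text to return.
sample_response = {
    "jsonrpc": "2.0",
    "id": 2,
    "result": {"content": [{"type": "text", "text": "AAPL: 123.45"}], "isError": False},
}
text = extract_text(sample_response)
```

Nothing in the request identifies which model produced it, which is what keeps the server side unchanged when the provider changes.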

This is why MCP is often described as the “USB-C for AI.” Just as USB-C standardized how devices connect to chargers and peripherals, MCP aims to standardize how AI systems connect to tools.

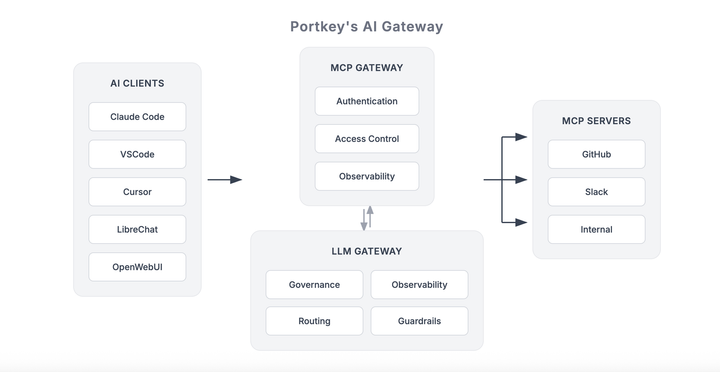

Protocol portability solves the integration problem, but operating MCP infrastructure at scale introduces its own challenges. Tools such as Portkey’s MCP Gateway address these by extending MCP’s portability with centralized governance, authentication, and observability across providers.

When MCP is worth the overhead

MCP introduces real architectural benefits, but it also introduces real complexity. For many projects, standard function calling remains the simplest and fastest option.

Function calling is usually the right approach when you have fewer than five tools, use a single LLM provider, and run everything inside one application. In these cases, the integration is lightweight: a tool schema, a dispatch function, and a loop that executes the request. A typical implementation can be written in 20–30 lines of Python, making it ideal for prototypes or early production systems.

MCP becomes worthwhile as systems scale. Once you have 10 or more tools, multiple teams consuming the same integrations, or a multi-provider architecture, embedding every tool inside application code becomes difficult to maintain. MCP moves those integrations into independent servers that can be reused across applications and models.

There is also a latency tradeoff. Function calling runs entirely in-process, so execution is fast. MCP adds either a process hop (for local stdio servers) or a network hop (for remote HTTP servers). For latency-sensitive, single-service applications, function calling will still be faster.

However, integration complexity tends to grow quadratically without MCP as tools and services multiply, while MCP keeps complexity closer to linear growth by centralizing tool infrastructure.
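One common way to read this claim, offered here as an assumption since the growth model is not spelled out, is to count integrations. Without a shared protocol, each of M applications needs its own integration with each of N tools. With MCP, each application implements one client and each tool one server. Strictly speaking the first count is M × N, which looks quadratic only when applications and tools grow together.

```python
def point_to_point_integrations(apps: int, tools: int) -> int:
    """Every application integrates every tool separately."""
    return apps * tools


def mcp_integrations(apps: int, tools: int) -> int:
    """Each application adds one MCP client; each tool adds one MCP server."""
    return apps + tools


for apps, tools in [(2, 5), (4, 10), (8, 20)]:
    print(apps, tools, point_to_point_integrations(apps, tools), mcp_integrations(apps, tools))
```

For 4 applications and 10 tools, that is 40 point-to-point integrations versus 14 MCP components.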

In practice, many production systems adopt a hybrid model: function calling handles the model’s tool request inside the conversation loop, while MCP manages routing and execution at the infrastructure layer.
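One piece of that hybrid model can be sketched directly: converting tool descriptors discovered over MCP into the schemas a model expects for function calling. The converter names are hypothetical, and the target shapes are simplified versions of the OpenAI and Anthropic tool definition formats as commonly documented.

```python
# A tool descriptor as an MCP server might advertise it via tools/list.
mcp_tool = {
    "name": "get_stock_price",
    "description": "Return the latest price for a stock ticker.",
    "inputSchema": {
        "type": "object",
        "properties": {"ticker": {"type": "string"}},
        "required": ["ticker"],
    },
}


def to_openai_tool(tool: dict) -> dict:
    """Wrap an MCP descriptor in an OpenAI-style function tool definition."""
    return {
        "type": "function",
        "function": {
            "name": tool["name"],
            "description": tool.get("description", ""),
            "parameters": tool["inputSchema"],
        },
    }


def to_anthropic_tool(tool: dict) -> dict:
    """Map an MCP descriptor to an Anthropic-style tool definition."""
    return {
        "name": tool["name"],
        "description": tool.get("description", ""),
        "input_schema": tool["inputSchema"],
    }


openai_tools = [to_openai_tool(mcp_tool)]
anthropic_tools = [to_anthropic_tool(mcp_tool)]
```

The JSON Schema passes through untouched in both cases, so the tool is defined once on the server and only the thin wrapper differs per provider.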

Security, credentials, and governing MCP at scale

Security is one of the most meaningful architectural differences between traditional function calling systems and MCP-based systems. The key issue is where credentials live and how access is controlled.

How credential isolation actually works

In a typical function calling setup, all credentials live inside the application environment. The application process contains API keys, database credentials, and service tokens required by every tool. When the model requests a function, the application executes it directly using those credentials.

This architecture is simple, but it creates a broad security surface. If the application is compromised – through prompt injection, dependency vulnerabilities, or infrastructure access – the attacker potentially gains access to every connected system. The privilege scope is essentially all-or-nothing.

MCP changes that model by isolating credentials at the server level. Each MCP server runs as its own process and holds only the credentials required for the tools it exposes. The AI client never directly interacts with backend services – it only sends standardized tool invocation requests to the MCP server.

This separation reduces the blast radius of a compromise. If one MCP server is breached, only that server’s credentials are exposed, not the entire system.
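A minimal sketch of that isolation, assuming servers are launched as local subprocesses: each server gets an environment containing only its own secrets. The server names, variable names, and the `scoped_env` helper are all hypothetical.

```python
# Hypothetical mapping of MCP server name -> the only secrets it may see.
SERVER_SECRETS = {
    "stocks-server": ["STOCKS_API_KEY"],
    "crm-server": ["CRM_TOKEN"],
}

# Non-secret variables every server process is allowed to inherit.
BASELINE_VARS = {"PATH"}


def scoped_env(server_name: str, source_env: dict) -> dict:
    """Return a copy of the environment restricted to one server's needs."""
    allowed = set(SERVER_SECRETS[server_name]) | BASELINE_VARS
    return {key: value for key, value in source_env.items() if key in allowed}


# Stand-in for os.environ so the example does not depend on the real environment.
full_env = {
    "PATH": "/usr/bin",
    "STOCKS_API_KEY": "sk-stocks",
    "CRM_TOKEN": "crm-secret",
    "DATABASE_URL": "postgres://internal",
}

stocks_env = scoped_env("stocks-server", full_env)
crm_env = scoped_env("crm-server", full_env)
```

In practice the result would be passed as the `env` argument when starting the server process, for example with `subprocess.Popen(command, env=stocks_env)`, so a compromised stocks server never sees the CRM token or the database URL.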

However, MCP isn’t automatically secure. Security research has found that 43% of sampled MCP servers contained command injection vulnerabilities, and the mcp-remote command injection vulnerability (CVE-2025-6514) affected a package with more than 437,000 downloads. At the same time, 51% of developers cite unauthorized or excessive API calls from AI agents as their top security concern.

In other words, MCP improves architectural isolation, but safe deployment still requires strong governance.

The governance gap at organizational scale

As MCP adoption grows inside an organization, a new operational challenge emerges. What begins as two or three MCP servers can quickly expand to dozens of internal and third-party servers.

At that point, three problems tend to appear:

- Fragmented authentication: Some servers rely on their own OAuth flows, while internal ones may authenticate through an identity provider such as Okta or Entra ID.

- Invisible tool usage: No centralized view of which agents are calling which tools.

- Unmanaged server sprawl: Untracked MCP servers appearing across teams, essentially the MCP version of “shadow AI”.

MCP supports authorization at the server boundary, where each invocation can be authenticated, logged, and audited. However, those controls operate on a per-server basis, not across the entire organization.
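A per-server check of this kind might look like the sketch below. The policy table, caller names, and `authorize_and_log` function are hypothetical. Because the log lives inside one server process, it also shows why these controls do not add up to an organization-wide view on their own.

```python
import time

# Hypothetical per-server policy: which caller may invoke which tools.
POLICY = {"research-agent": {"get_stock_price"}}

# In-memory audit trail; a real server would write to durable storage.
audit_log = []


def authorize_and_log(caller: str, tool_name: str) -> bool:
    """Decide whether a call is allowed and record the decision either way."""
    allowed = tool_name in POLICY.get(caller, set())
    audit_log.append(
        {"ts": time.time(), "caller": caller, "tool": tool_name, "allowed": allowed}
    )
    return allowed


ok = authorize_and_log("research-agent", "get_stock_price")
denied = authorize_and_log("research-agent", "delete_records")
```

Denied calls are logged as well as allowed ones, since rejected attempts are often the more useful signal in an audit.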

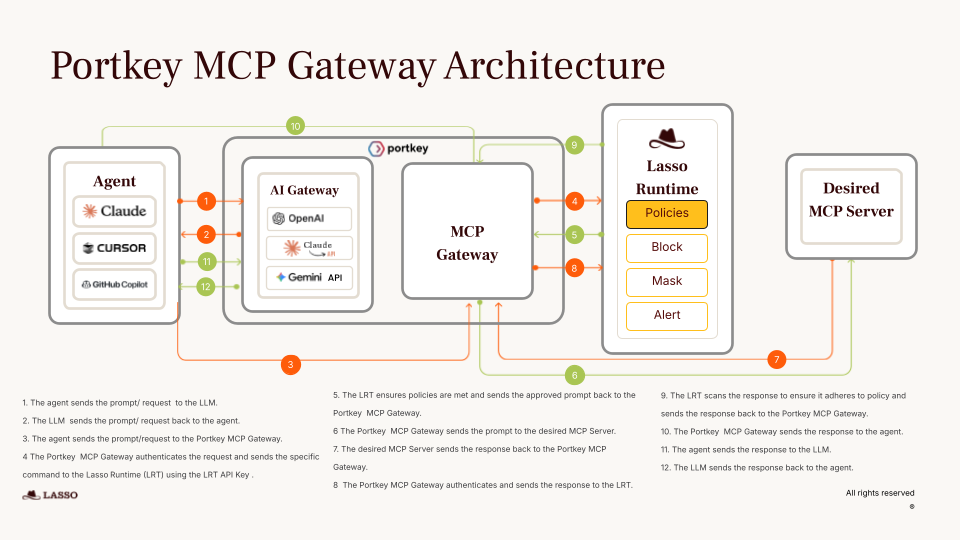

This is where a governance layer becomes necessary. Portkey’s MCP Gateway acts as a centralized control plane for MCP environments, providing unified authentication where credentials never reach individual agents, per-team RBAC, audit logs that correlate tool invocations with LLM activity, and approved-server enforcement to prevent unapproved MCP servers from being accessed.

Choosing function calling, MCP, or both

For simple systems, function calling alone is enough – a few tools, one model provider, and a single application managing execution.

As systems grow, MCP becomes the better architectural foundation. It separates tools from applications, enables multi-provider architectures, and lets multiple teams reuse the same integrations.

Still, most mature AI systems end up combining both MCP and function calling.

If your MCP ecosystem is starting to scale and governance is becoming critical, explore Portkey’s MCP Gateway to centralize authentication, enforce RBAC, and gain full visibility into tool usage across your AI stack.
