Why connecting OTel traces with LLM logs is critical for agent workflows

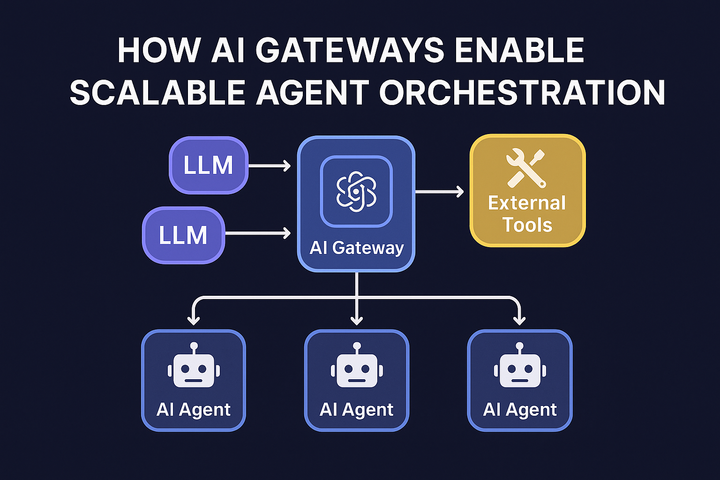

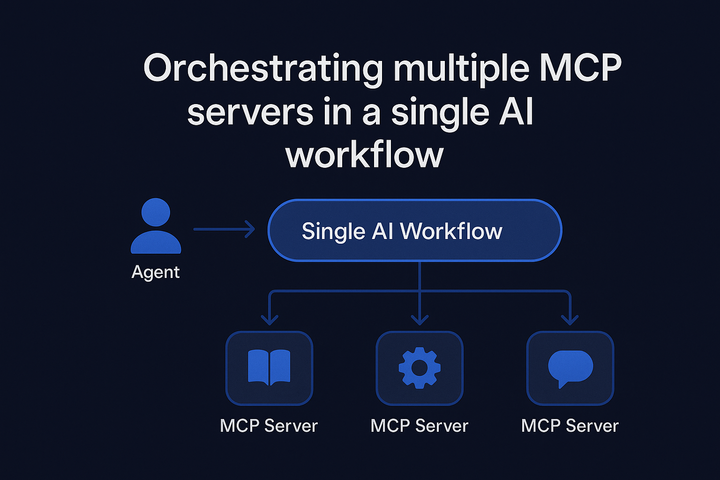

Disconnected logs create blind spots in agent workflows. See how combining OpenTelemetry (OTel) traces with LLM logs delivers end-to-end visibility for debugging, governance, and cost tracking.
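
To make "combining" concrete, here is a minimal sketch of the idea, using only the Python standard library. It assumes that each LLM log record was written with the trace ID and span ID of the span that produced it; the record shapes, field names (`trace_id`, `span_id`, `prompt_tokens`, `completion_tokens`), and helper functions are hypothetical and not taken from any particular SDK or vendor format.

```python
from collections import defaultdict

# Hypothetical span records, loosely shaped like exported trace spans.
spans = [
    {"trace_id": "t1", "span_id": "s1", "name": "agent.plan", "duration_ms": 120},
    {"trace_id": "t1", "span_id": "s2", "name": "llm.call", "duration_ms": 840},
    {"trace_id": "t2", "span_id": "s3", "name": "llm.call", "duration_ms": 410},
]

# Hypothetical LLM log records. The trace_id/span_id fields are an assumption:
# they only exist if the logger captured the active span context at write time.
llm_logs = [
    {"trace_id": "t1", "span_id": "s2", "model": "model-a",
     "prompt_tokens": 900, "completion_tokens": 150},
    {"trace_id": "t2", "span_id": "s3", "model": "model-a",
     "prompt_tokens": 300, "completion_tokens": 50},
]


def join_spans_with_logs(spans, llm_logs):
    """Attach the matching LLM log (if any) to each span, grouped by trace."""
    logs_by_span = {(log["trace_id"], log["span_id"]): log for log in llm_logs}
    traces = defaultdict(list)
    for span in spans:
        key = (span["trace_id"], span["span_id"])
        # Spans without an LLM call (e.g. planning steps) get llm=None.
        traces[span["trace_id"]].append({**span, "llm": logs_by_span.get(key)})
    return dict(traces)


def tokens_per_trace(joined):
    """Sum prompt and completion tokens across all LLM calls in each trace."""
    totals = {}
    for trace_id, items in joined.items():
        totals[trace_id] = sum(
            item["llm"]["prompt_tokens"] + item["llm"]["completion_tokens"]
            for item in items
            if item["llm"] is not None
        )
    return totals


joined = join_spans_with_logs(spans, llm_logs)
print(tokens_per_trace(joined))  # {'t1': 1050, 't2': 350}
```

Once the two record types share IDs, a per-trace view like this can answer both "which step was slow?" (from the span) and "what did that step cost?" (from the log) in a single lookup. Without the shared IDs, the same question requires guessing matches by timestamp.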