Everything We Know About Claude Code Limits

Last updated - March 2026

TL;DR

- For Claude.ai Users:

The core limit is a 5-hour rolling session that begins with your first prompt. Weekly quotas (introduced August 28, 2025) now apply to heavy users on Claude Pro and Claude Max plans. All Claude.ai plans share a common usage bucket across the Claude app and Claude Code; Max plans multiply the allowance accordingly. Max subscribers can also purchase additional usage at standard API rates once they hit limits.

- For API Users:

API usage is separate and billed pay-as-you-go per token. The latest models, Opus 4.6 and Sonnet 4.6, are priced at $5/$25 and $3/$15 per million tokens (input/output) respectively. You get the same rates whether you use Anthropic, AWS Bedrock, Google Vertex AI, or Microsoft Foundry, so you can choose whichever region, VPC, or IAM model best fits your stack.

- For Portkey Users:

With Portkey, you can route Claude Code through any supported provider (Anthropic, Bedrock, Vertex, Foundry, and others) from a single endpoint, pulling in capacity from multiple sources at once. Portkey also lets you centralize cost controls, add security guardrails, configure automatic failover, and get audit logs automatically.

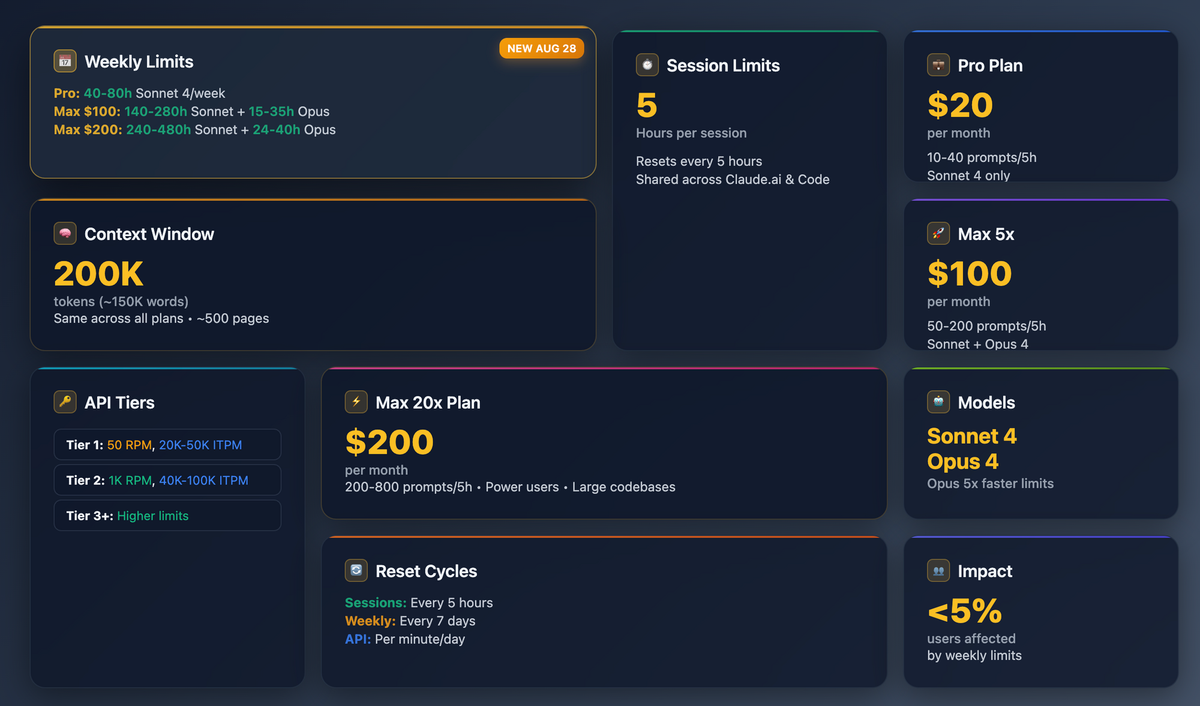

Understanding the 5‑Hour session model

The moment you send your first prompt from claude in your terminal, a 5‑hour rolling window begins. All messages and token spend during that period draw from your plan’s pool; a new window starts only when you send the next message after the 5 hours lapse. Pro users average 10‑40 prompts per window, while Max 20× users can push 200‑800 prompts depending on code size and model choice. (Source: Anthropic Help Center)
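To make the window behavior concrete, here is a minimal sketch that groups a day's prompt timestamps into sessions. It assumes a window lasts exactly 5 hours from its first prompt and that a prompt sent at the expiry minute opens a new window; the function name and sample times are illustrative, not anything Anthropic publishes.

```python
from datetime import datetime, timedelta

WINDOW = timedelta(hours=5)  # session length described above


def assign_windows(prompt_times):
    """Group prompt timestamps into 5-hour sessions.

    A session opens at its first prompt; the first prompt sent after
    the 5 hours lapse opens the next one (assumed boundary behavior).
    """
    windows = []  # list of (window_start, [prompts in that window])
    for t in sorted(prompt_times):
        if not windows or t >= windows[-1][0] + WINDOW:
            windows.append((t, [t]))
        else:
            windows[-1][1].append(t)
    return windows


day = datetime(2026, 3, 2)
prompts = [
    day.replace(hour=8, minute=1),
    day.replace(hour=10, minute=30),
    day.replace(hour=12, minute=59),
    day.replace(hour=13, minute=1),
    day.replace(hour=16, minute=45),
]

for start, items in assign_windows(prompts):
    end = start + WINDOW
    print(start.strftime("%H:%M"), "to", end.strftime("%H:%M"), len(items), "prompts")
```

In this example the 08:01 prompt opens a window that runs to 13:01, so the first three prompts share one pool and the 13:01 prompt starts a fresh one.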

💡 Tips

- Time your first prompt so the window spans your peak coding block; fire a dummy ping at 13:01 to start a fresh window for the afternoon.

- Batch edits: one long diff request burns fewer tokens than several “please refine” follow‑ups.

- Use `/compact` in Claude Code to manage context costs and `/model` to switch models based on task complexity.

Weekly Limits (Live Since August 28, 2025)

In addition to the 5-hour rolling windows, Anthropic now enforces weekly rate limits that reset every seven days. These include an overall usage cap plus a separate weekly cap for Opus models. Anthropic says the limits affect fewer than 5% of subscribers based on usage patterns.

Here's what to expect per week:

| Plan | Weekly Sonnet hours | Weekly Opus hours |
|---|---|---|
| Pro ($20/mo) | 40-80 | -- |
| Max 5x ($100/mo) | 140-280 | 15-35 |
| Max 20x ($200/mo) | 240-480 | 24-40 |

Usage varies based on codebase size, model complexity, and session behavior. Max subscribers can purchase additional usage beyond the weekly limit at standard API rates.

Plan pricing & capacities

| Plan | Monthly fee | Relative capacity | Typical Claude Code prompts / 5 h | Model access |
|---|---|---|---|---|
| Free | $0 | ~1/10 Pro | 2-5 (Sonnet 4.6 only) | Sonnet 4.6 |
| Pro | $20 | baseline | 10-40 | Sonnet 4.6 |
| Max 5x | $100 | 5x Pro | 50-200 | Sonnet 4.6 + Opus 4.6 |
| Max 20x | $200 | 20x Pro | 200-800 | Sonnet 4.6 + Opus 4.6 |
| Team (Standard) | $25/user | -- | -- | Sonnet 4.6 |
| Team (Premium) | $150/user | -- | Includes Claude Code | Sonnet 4.6 + Opus 4.6 |

Pro plans are also available at $17/month when billed annually. Team plans require a minimum of 5 users.

Token‑Based API Pricing (Console, Bedrock, Vertex, Foundry)

| Model | Anthropic API | AWS Bedrock | Google Vertex AI | Microsoft Foundry |
|---|---|---|---|---|
| Opus 4.6 | $5 in / $25 out per M tokens | same list price | same list price | same list price |
| Sonnet 4.6 | $3 in / $15 out per M tokens | same list price | same list price | same list price |
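As a quick worked example of per-token billing, the sketch below prices a single request at the list rates in the table. The dictionary keys are shorthand labels chosen for this post, not official API model IDs, and the token counts are made up.

```python
# USD per million tokens as (input, output), copied from the table above.
# The keys are illustrative labels, not official model identifiers.
PRICES = {
    "opus-4.6": (5.00, 25.00),
    "sonnet-4.6": (3.00, 15.00),
}


def request_cost(model, input_tokens, output_tokens):
    """Return the list-price cost in USD of one request."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# A hypothetical request: 50K tokens of context in, 4K tokens of code out.
for model in PRICES:
    print(model, round(request_cost(model, 50_000, 4_000), 2))
```

For that hypothetical request, Opus 4.6 works out to $0.35 and Sonnet 4.6 to $0.21.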

Previous generation (still available)

| Model | Anthropic API | AWS Bedrock | Google Vertex AI | Microsoft Foundry |
|---|---|---|---|---|
| Opus 4.5 | $5 in / $25 out | same | same | same |
| Sonnet 4.5 | $3 in / $15 out | same | same | same |
| Haiku 4.5 | $1 in / $5 out | same | same | same |

Legacy models

| Model | Pricing |
|---|---|
| Opus 4 / 4.1 | $15 in / $75 out per M tokens |
| Sonnet 4 / 3.7 | $3 in / $15 out |

Key pricing notes:

- Long context premium: When using the 1M token context window (beta) and exceeding 200K input tokens, rates increase to $10/$37.50 (Opus 4.6) and $6/$22.50 (Sonnet 4.6) per M tokens.

- Fast mode (Opus 4.6): 6x standard rates for significantly faster output. Currently in research preview.

- Prompt caching: Cache reads cost just 10% of base input price. Cache writes are 1.25x (5-min TTL) or 2x (1-hour TTL).

- Batch API: 50% discount on all models for non-urgent workloads.

- US-only inference: 1.1x multiplier on all token costs for Opus 4.6 and newer models.

- Bedrock, Vertex, and Foundry bill in your account's currency and add standard cloud charges (data egress, networking, etc.). Anthropic confirms pricing parity across channels. Bedrock and Vertex offer global and regional endpoints, with regional endpoints including a 10% premium.
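The notes above interact, so a rough estimator helps when budgeting. The sketch below is built on assumptions this post does not confirm: that cached tokens count toward the 200K long-context threshold, that cache multipliers apply to whichever input rate is in effect, and that the batch discount applies to the whole request. Treat it as an approximation and check it against your actual invoice.

```python
# USD per million tokens as (input, output), from the notes above.
BASE = {"opus-4.6": (5.00, 25.00), "sonnet-4.6": (3.00, 15.00)}
LONG_CONTEXT = {"opus-4.6": (10.00, 37.50), "sonnet-4.6": (6.00, 22.50)}
LONG_CONTEXT_THRESHOLD = 200_000  # input tokens
CACHE_READ_FACTOR = 0.10          # cache reads cost 10% of input price
CACHE_WRITE_FACTOR = {"5m": 1.25, "1h": 2.00}
BATCH_FACTOR = 0.50               # Batch API discount


def estimate_cost(model, fresh_in, cached_in, out,
                  cache_write=0, ttl="5m", batch=False):
    """Estimate one request's cost in USD under the stated assumptions."""
    total_in = fresh_in + cached_in + cache_write
    # Assumption: every input token counts toward the threshold.
    rates = LONG_CONTEXT if total_in > LONG_CONTEXT_THRESHOLD else BASE
    price_in, price_out = rates[model]
    cost = (
        fresh_in * price_in
        + cached_in * price_in * CACHE_READ_FACTOR
        + cache_write * price_in * CACHE_WRITE_FACTOR[ttl]
        + out * price_out
    ) / 1_000_000
    # Assumption: the batch discount applies to the full request cost.
    return cost * BATCH_FACTOR if batch else cost


with_cache = estimate_cost("sonnet-4.6", fresh_in=20_000, cached_in=100_000, out=5_000)
no_cache = estimate_cost("sonnet-4.6", fresh_in=120_000, cached_in=0, out=5_000)
print(round(with_cache, 3), round(no_cache, 3))
```

Under these assumed stacking rules, a Sonnet 4.6 call with 20K fresh input tokens, 100K cached tokens, and 5K output tokens comes to roughly $0.165, versus about $0.435 with no cache hits.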

Which endpoint should you use?

| Need | Best option | Why |

|---|---|---|

| Lowest latency to US-East | AWS Bedrock (us-east-1) | Dedicated Anthropic capacity inside AWS infra |

| Private networking / VPC-SC | Vertex AI Private Service Connect | Keeps traffic off the public internet |

| Azure-native billing and governance | Microsoft Foundry | MACC-eligible, Entra ID auth, Azure Monitor integration |

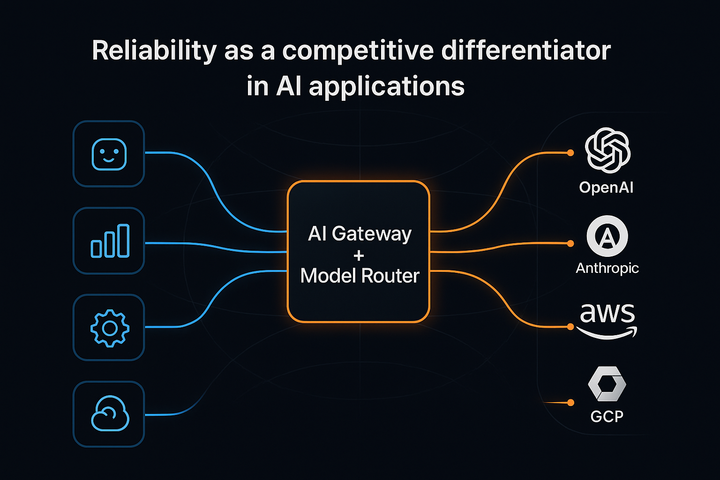

| Global multi-region failover | Portkey Gateway | Routes across Anthropic, Bedrock, Vertex, Foundry, and 250+ LLMs from a single endpoint |

| Advanced cost controls | Portkey budgets + prompt caching | Enforce spend limits, auto-downgrade models, per-team attribution |

Portkey: One Switch for Anthropic, Bedrock, or Vertex

Direct Claude Code is great for solo hacking, but enterprises need:

- Unified gateway routing – point Claude Code to Portkey once; switch between Anthropic, Bedrock, Vertex, Foundry, or any other provider by changing a config value.

- Live spend & usage dashboards – 40‑plus metrics, cost attribution by team, and budget alerts.

- RBAC + guardrails – per‑role rate limits, banned‑pattern filters, and partner guardrails from Palo Alto, Prompt Security, Pangea, etc.

- Audit‑ready logs – every prompt, response, and error logged with metadata for compliance.
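To illustrate the idea behind routing across providers with fallback, here is a generic sketch of the pattern. It is not Portkey's API or implementation: the function, exception, and stand-in providers are all hypothetical, and a real gateway handles this for you through configuration.

```python
from typing import Callable, Sequence, Tuple


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has errored."""


def call_with_failover(
    providers: Sequence[Tuple[str, Callable[[str], str]]],
    prompt: str,
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, reply)."""
    errors = []
    for name, send in providers:
        try:
            return name, send(prompt)
        except Exception as exc:  # e.g. rate limit or regional outage
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real provider clients.
def rate_limited(prompt: str) -> str:
    raise RuntimeError("429 rate limit")


def healthy(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_failover(
    [("anthropic", rate_limited), ("bedrock", healthy)], "hi"
)
print(used, reply)
```

Here the first provider hits a rate limit, so the call falls through to the second one without the caller changing anything.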

Bottom line: Portkey turns Claude Code into an enterprise‑grade service without forcing you to rebuild scripts or choose a single cloud. Drop your API key(s) into Portkey, set a config, and your devs keep typing claude …—now with observability, governance, and cloud‑agnostic resilience baked in.

If you're looking to implement Claude Code for your organisation with governance at scale, book a demo with us for a detailed walkthrough, or try it yourself!

Anthropic may refine quotas again now that weekly limits are live, and Bedrock/Vertex regions keep expanding. Bookmark this post; we’ll update as soon as the numbers move.