Moving Fast Has a Security Bill and It Just Came Due

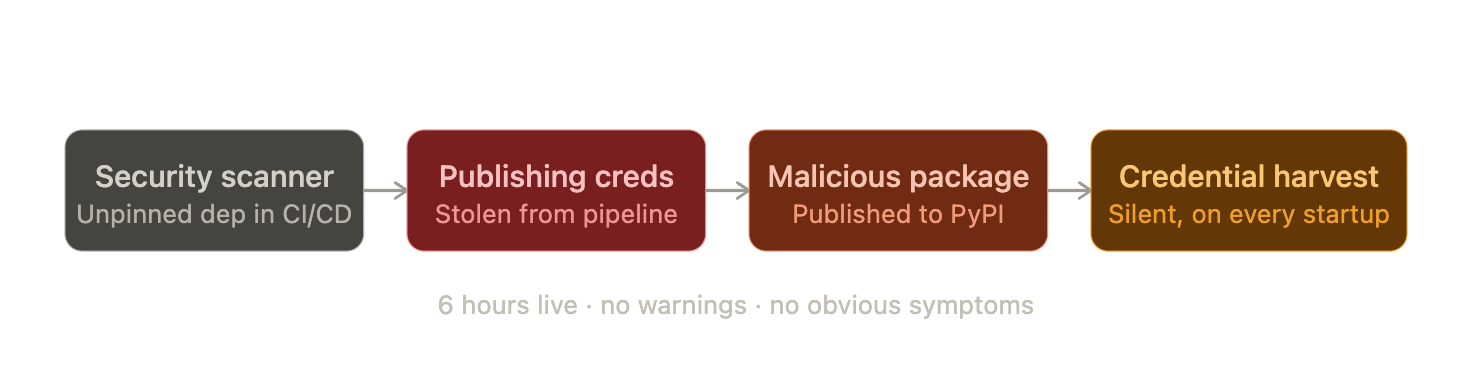

Recently, a coordinated supply chain attack hit an open-source LLM gateway affecting millions of monthly users, present in a third of all cloud environments. The malicious versions were live for roughly six hours. No warning. No obvious symptoms. Just a silent credential harvest running in the background every time Python started.

Karpathy called it "software horror" and the blast radius he listed made clear why: cloud credentials, SSH keys, Kubernetes configs, database passwords, all gone from a single pip install.

The Slack threads were grim. Engineers checking their environments, rotating keys, auditing CI/CD logs. Some found they were fine. Others weren't. The panic spread well beyond the teams that were actually hit – companies with no exposure spent days auditing anyway, because nobody was sure what "no exposure" even meant yet.

We're not going to talk about the incident itself, there's already excellent coverage of exactly what happened and how to check if you were affected. What we want to talk about is what it revealed about how the AI ecosystem has been building, and what needs to change.

Why LLM Gateways Are the Perfect Target

LLM gateways occupy a uniquely sensitive position in your stack. By design, they're the routing layer between your applications and every LLM provider you use, which means they routinely touch API keys for every provider, environment variables across dev, staging, and production, database credentials that agents depend on, and Kubernetes configs in containerized deployments.

Compromising a gateway doesn't require breaching any of those providers directly. One package compromise and you get the keys to everything, all at once.

The attackers didn't target the gateway directly either. They started one layer upstream, in a security scanning tool that the gateway's own CI/CD pipeline trusted and ran without version pinning. That single unmanaged dependency handed over publishing credentials. One chain of trust, exploited end to end.

The techniques weren't sophisticated. They worked because of habits the AI ecosystem has collectively normalized.

The Habit That Made This Possible

Here's what we see happen constantly: someone spins up a new agent, pulls in a gateway library, drops API keys into a shared environment. No formal review. Nobody calls it production access. But by the time it's doing anything useful, that's exactly what it is.

That's not carelessness. That's just what building in a fast-moving space looks like when the operational maturity layer hasn't caught up yet. And to be clear – this is a pattern we've had to correct in our own house too. Nobody in this ecosystem started with perfect hygiene.

Attackers knew this. They didn't guess where credentials would be. They scanned the exact locations every developer puts them by default:

~/.env

~/.aws/credentials

~/.kube/config

~/.ssh/id_*

shell history

CI/CD configsEvery hit. Not because they were clever. Because the AI ecosystem spent two years normalizing exactly this setup.

The teams that came through cleanest weren't smarter. They had just made a few deliberate architectural decisions, mostly before they needed to, about where credentials live and how they're managed.

What You Should Actually Be Doing

None of what follows is new. These are standard practices in any mature infrastructure stack. The AI ecosystem just decided, collectively and without much discussion, that they didn't apply yet. This incident was a useful forcing function for everyone, us included.

Keep provider keys off developer machines

The real fix isn't a secrets manager, it's making sure raw provider credentials never reach developer machines in the first place. An AI gateway manages provider keys centrally in encrypted storage, so developers work with scoped virtual keys that carry their own budget and rate limits. A compromise means revoking a scoped reference, not rotating every provider key across your entire stack. AWS Secrets Manager, GCP Secret Manager, and Vault all help too, but the gateway is where this problem gets solved at the right layer.

Pin your dependencies, and be honest about what that means

Most teams think they're doing this. Most teams aren't. There are three levels, and they are not equivalent.

Level 1: Version pinning

The thing most people mean when they say "we pin our dependencies." Better than nothing:

pip install some-gateway-library # pulls whatever is newest

pip install some-gateway-library==x.y.z # safer, but not safe enoughLevel 2: Lockfiles

What actually protected teams when this hit:

uv sync --frozen # installs from lockfile, ignores what's on PyPI right nowpoetry.lock and uv.lock store the exact resolved dependency tree with cryptographic hashes. When the registry served a compromised package, a lockfile said this doesn't match what I expect and the install failed. That's the whole story for teams running lockfiles. They were fine.

Simon Willison, writing on the day of the attack, argued for dependency cooldowns: only pull new package versions after they've been in the wild for several days. Sound idea. But cooldowns don't change what happens after a bad version lands. The real variable that determined blast radius wasn't how fast teams installed. It was whether credentials were scoped and revocable when something got through.

Level 3: Hash verification

The ceiling:

# requirements.txt

some-gateway-library==x.y.z \

--hash=sha256:abc123...Also audit your transitive dependencies. A lot of teams were exposed not through a direct install but because a framework they used pulled in the compromised package underneath. Run pip-audit regularly.

One more thing: .pth files. Attackers dropped a malicious .pth file into site-packages. Python processes every .pth file at interpreter startup, before your code runs, before you import anything. Malicious code ran on every Python process on affected machines, every time Python started.

find $(python -c "import site; print(' '.join(site.getsitepackages()))") \

-name "*.pth" -exec grep -l "import\|exec\|eval" {} \;A .pth file containing code execution logic is a red flag.

Scope CI/CD secrets to the step that needs them

The publishing credential stolen in the attack was available to the entire pipeline, including the dependency installation step. This is a configuration decision that takes ten minutes to fix and almost nobody has fixed it:

# Wrong - secret available to every step, including pip install:

env:

PUBLISH_TOKEN: ${{ secrets.REGISTRY_TOKEN }}

jobs:

build:

steps:

- name: Install deps # token is in scope here. shouldn't be.

run: pip install -r requirements.txt

- name: Publish

run: publish --token $PUBLISH_TOKEN

# Right - secret only exists in the step that needs it:

jobs:

publish:

steps:

- name: Publish

env:

PUBLISH_TOKEN: ${{ secrets.REGISTRY_TOKEN }}

run: publish --token $PUBLISH_TOKENBetter still: use OIDC Trusted Publishing where your registry supports it. GitHub mints a short-lived token per run that expires when the job ends. Nothing to steal.

The same logic applies to GitHub Actions versions. Tags can be overwritten. In this attack, threat actors force-pushed malicious code to trusted version tags across 75+ popular Actions repositories. Pin to commit SHAs.

uses: some-org/[email protected] # this tag can be overwritten

uses: some-org/some-action@a1b2c3d4e5f6... # this SHA cannotZizmor can audit your GitHub Actions workflows for this automatically.

When you rotate, rotate everything

The upstream maintainers knew about a prior compromise five days before the attack went public. They rotated credentials, but not atomically. Some were rotated, some weren't. Attackers used the window between rotations to generate new valid tokens before the old ones were fully revoked.

Partial rotation is not rotation. It's the feeling of having done something without the outcome. If you can't rotate everything simultaneously, treat it as if you haven't rotated anything yet. Prioritize your highest-privilege credentials and move through the rest as fast as possible.

Know what to watch for if something is already running

The .pth attack had a bug: it spawned a child process on every interpreter startup, creating a fork loop. Sudden, extreme RAM usage whenever Python ran. If someone on your team reports their machine froze after a pip install, that is not a dependency conflict.

For CI/CD: a pip install step should only be calling package registry CDN domains. POST requests with large encrypted payloads, or DNS lookups for domains that look like slightly-off versions of legitimate tooling infrastructure, are both worth stopping the pipeline for.

kubectl get pods -n kube-system | grep -v "known-system-pod"

find ~/.config/systemd/user/ -name "*.service" -newer ~/.bashrcHow Portkey Is Built

We've thought carefully about where credentials should live in an AI stack. Not because we anticipated this specific attack, but because these are the right defaults for any infrastructure that touches production credentials. We're continuously hardening our own systems — this incident was a reminder that critical infrastructure demands ongoing vigilance, not a one-time effort.

Provider keys never touch developer machines

Provider API keys live in encrypted storage. Developers work with scoped virtual keys with their own budget limits, rate limits, and model-level controls. The actual provider credential was never on the developer's machine to begin with. In a compromise scenario, that's the decision that determines your blast radius.

API key rotation, built in

New secret issued before the old one is revoked, with a controlled transition window so both are valid simultaneously. No gap for an attacker to exploit. Triggered on demand or on a schedule, and every rotation is audit-logged.

Full request logs as a tripwire

By default, every request is logged with timestamp, model, token count, cost, and status. Unexpected spend is often the first signal of a compromise. Most teams don't have visibility into that signal until it's too late.

Open-source core

Audit it. Run it yourself. Trust in infrastructure tooling has to be earned, and the way you earn it is by letting people see exactly what you've built.

The Bigger Picture

This isn't about a sophisticated attack. It's about an ecosystem that moved faster than the operational maturity layer around it, and what happens when that gap gets found by someone looking for it. It's a good reminder that AI infrastructure is now critical infrastructure — and everyone building in this space, including us, needs to keep strengthening their processes accordingly.

The controls that address this predate AI entirely. The teams that came through with a contained incident weren't doing anything exotic. They had just made deliberate decisions early about where credentials live, how dependencies are managed, and what the blast radius looks like when something goes wrong. Those decisions held.

That's the shift worth making, not as a security project, but as part of building AI systems with the same care you'd bring to any other piece of infrastructure that touches real credentials in real environments.

Further reading: LiteLLM security advisory, Snyk technical deep-dive, Comet incident post-mortem, GitGuardian response guide, Full malware detonation analysis