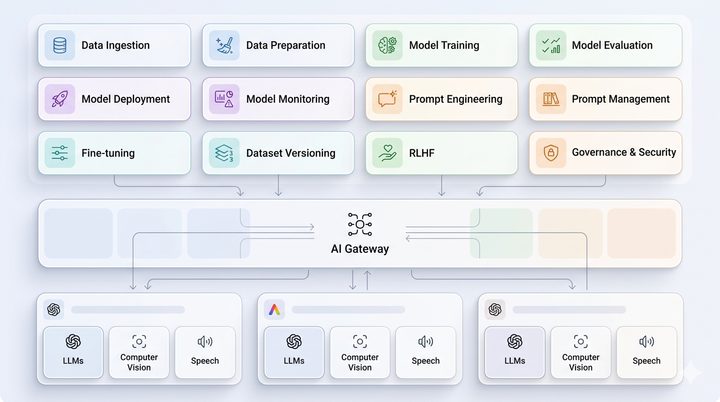

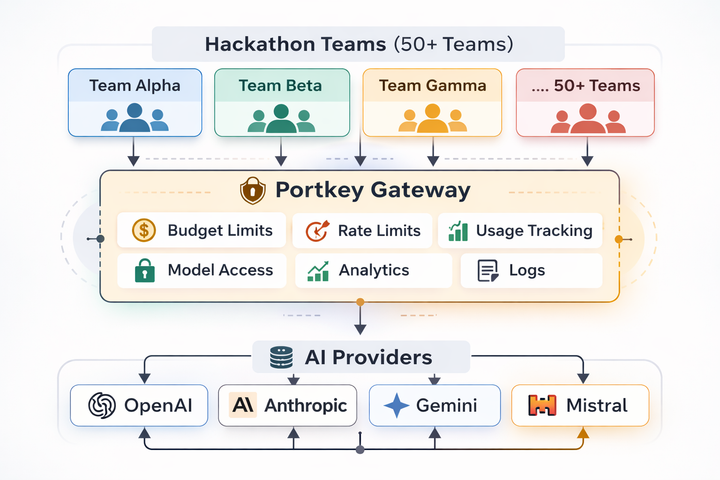

The Gateway Grew Up

So did the problems it was built to solve.

There's a point where something stops being a side project and becomes infrastructure. The thing you were "just trying out" is now what your business runs on. The question shifts from "can we build with AI?" to