Explore the top LLM gateway platforms for production AI. Compare capabilities, understand trade-offs, and find the right solution for your team.

What is an LLM Gateway?

An LLM gateway is a centralized proxy and control layer that sits between your application and large language model providers. It gives teams a unified interface to route requests, manage credentials, enforce policies, and observe model behavior, across any provider or model.

Core functions of an AI gateway

Unified API access

A single endpoint that abstracts provider-specific APIs, letting teams switch or combine models without rewriting application code

Centralized governance and access control

Provides a single platform to handle API keys, authentication, model updates, and environment configurations. Manages which teams, users, and applications can access which models, with budgets, rate limits, and audit logs.

Performance optimization

Uses caching, batching, and parallel processing to improve latency and reduce compute and token costs.

Security and compliance

Enforces strong data-protection controls, PII masking, and content moderation to meet organizational and regulatory requirements.

Monitoring and observability

Tracks usage, latency, token counts, and costs while logging every request for debugging and auditability.

Why enterprise teams need a gateway layer

Calling an LLM API directly works fine in a prototype. Scaling that to production is where things break down.

As teams move beyond single-provider experiments, they quickly find themselves managing inconsistent APIs, rotating credentials, debugging silent failures, and trying to attribute rising costs, with no centralized layer to help.

The production challenges

Multi-provider complexity

Each LLM provider has its own API format, error structure, and rate limit behavior. Building reliable orchestration across them from scratch is brittle and expensive to maintain.

Limited visibility

Without centralized logs and metrics, teams can’t trace errors, measure latency, or monitor token spend.

Key sprawl and security risk

Keys are shared across users or environments, creating compliance and access issues.

Inconsistent reliability

Provider downtimes, quota limits, and slow responses disrupt workflows and customer experience.

Governance blind spots:

No unified way to enforce content policies, moderate outputs, or track who’s calling which model.

Leading LLM Gateway Platforms in 2026

Platform | Focus Area | Key Features | Ideal For |

|---|---|---|---|

Portkey | End-to-end production control plane. Open source. | Unified API for 1,600+ LLMs, observability, guardrails, governance, prompt management, MCP integration | GenAI builders and enterprises scaling to production |

OpenRouter | Developer-first multi-model routing | Unified API for multiple LLMs, simple routing, community model access | Individual developers and small teams experimenting with multiple models |

LiteLLM | Open-source LLM proxy | OpenAI-compatible proxy supporting 1600+ LLMs, OSS extensibility | Platform teams running multi-provider LLM routing with self-managed infrastructure |

Vercel AI Gateway | Frontend integration & caching | Route AI requests from frontend apps, built-in caching, model proxy via Edge Functions | Web developers integrating AI features in client apps |

TrueFoundry Gateway | MLOps workflow management | Model deployment, serving, and monitoring; integrates with internal ML pipelines | ML and platform engineering teams |

Kong AI Gateway | API gateway with AI routing extensions | API lifecycle management, rate limiting, policy enforcement via plugins | Enterprises already using Kong for APIs |

Solo.io Gloo Gateway | Cloud-native API gateway | Envoy-based routing, service mesh integrations, AI traffic policies | Cloud-native teams and service mesh users |

F5 AI Gateway | Enterprise-grade traffic control | Load balancing, security filtering, integration with F5 BIG-IP | Large enterprises with existing F5 infrastructure |

IBM AI Gateway | AI governance and enterprise compliance | Integrates with watsonx, supports enterprise IAM and auditing | Regulated industries (finance, healthcare) |

Azure AI Gateway | Managed Microsoft ecosystem solution | Routing, telemetry, Azure OpenAI and model catalog integration | Enterprises fully on Azure stack |

Cequence AI gateway | API security platform, extending the security layer to AI and LLM endpoints | API defense, traffic inspection, and threat prevention | Security teams and enterprises that need strong API protection and threat prevention. |

Custom Gateway Solutions | In-house or OSS-built layers | Fully customizable; requires ongoing maintenance and scaling effort | Advanced engineering teams needing full control |

In-Depth analysis of the leading LLM Gateway platforms

Dive deeper into each solution, covering their core strengths, weaknesses, pricing, customer base, and market reputation, to help teams choose the right gateway for their GenAI production stack.

Portkey

Portkey is an AI Gateway and production control plane built specifically for GenAI workloads. It provides a single interface to connect, observe, and govern requests across 1,600+ LLMs. Portkey extends the gateway with observability, guardrails, governance, and prompt management, enabling teams to deploy AI safely and at scale.

Strengths

Unified API layer

Primarily designed for production teams, so lightweight prototypes may find it more advanced than needed.

Enterprise governance features (e.g., policy-as-code, regional data residency) are part of higher-tier plans.

Deep observability

Detailed logs, latency metrics, token and cost analytics by app, team, or model.

Guardrails and moderation

Request and response filters, jailbreak detection, PII redaction, and policy-based enforcement.

Governance and access control

Workspaces, roles, data residency, audit trails, and SSO/SCIM integration.

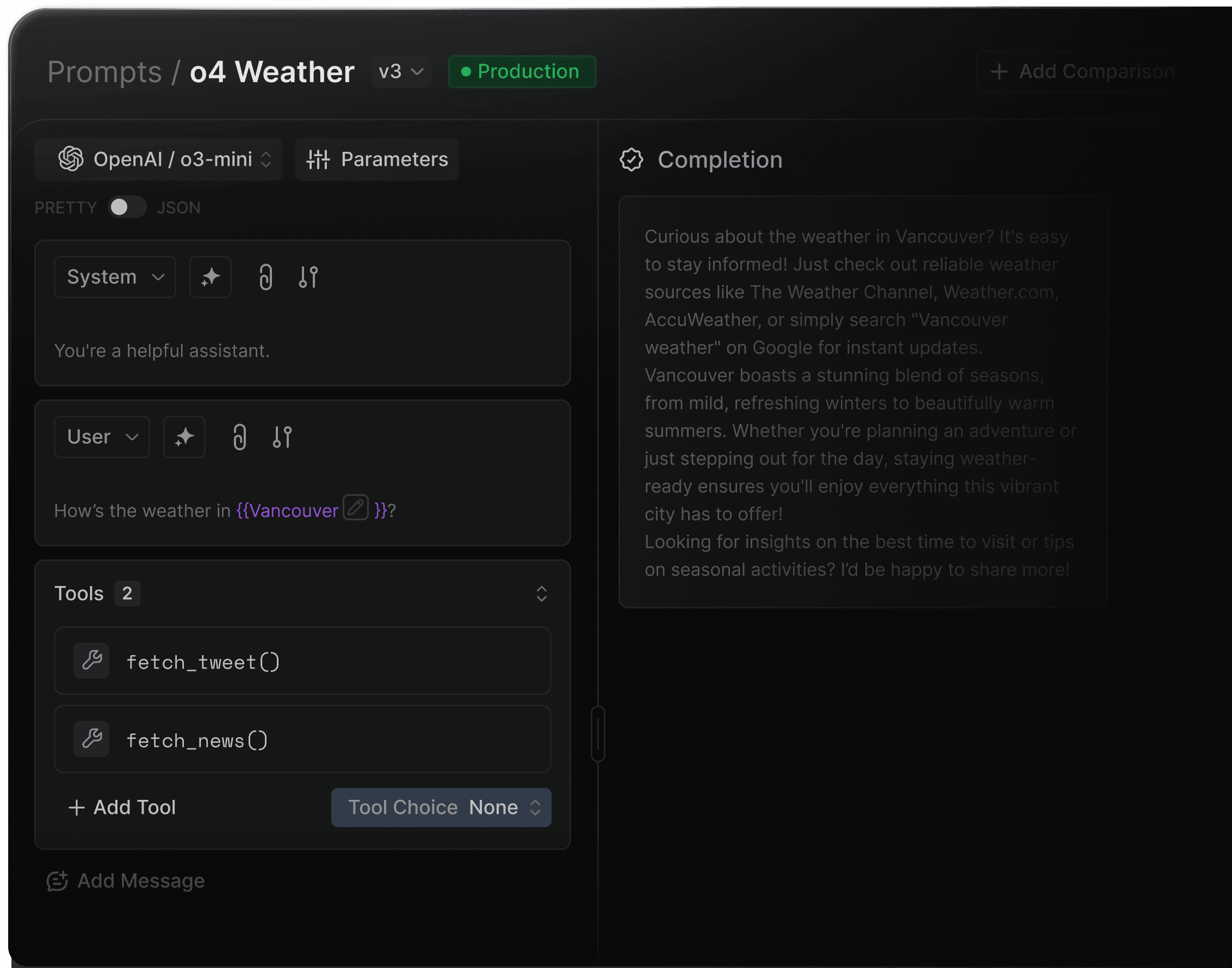

Prompt management and versioning

Reusable templates, variable substitution, and environment promotion.

Multi-model routing and reliability controls

Latency- or cost-based routing, fallbacks, canary deployments, and circuit breakers.

MCP integration

Register tools and servers with built-in observability and governance for agent use cases.

Developer experience

Modern SDKs (Python, JS, TS), streaming support, evaluation hooks, and test harnesses.

Limitations

Primarily designed for production teams, so lightweight prototypes may find it more advanced than needed.

Enterprise governance features (e.g., policy-as-code, regional data residency) are part of higher-tier plans.

Pricing Structure

Portkey offers a usage-based pricing model with a free tier for initial development and testing. Enterprise plans include advanced governance, audit logging, and dedicated regional deployments.

Ideal For / Typical Users

GenAI builders, enterprise AI teams, and platform engineers running multi-provider LLM workloads who need observability, compliance, and reliability in production.

4.8/5 on G2 — praised for its developer-friendly SDKs, observability depth, and governance flexibility.

LLM Gateway

LLMGateway.io is an open-source, developer-first LLM gateway that provides a unified API for 210+ models across 25+ providers.It has richer analytics and self-hosting support, it targets developers who want multi-provider access with real-time cost and usage tracking without the overhead of a full enterprise platform.

Strengths

Broad model and provider coverage

Access to 210+ models across 25+ providers including OpenAI, Anthropic, Google, AWS Bedrock, Azure, xAI, DeepSeek, Groq, Mistral, Alibaba Cloud, and more.

Bring Your Own Keys (BYOK)

Use your own provider API keys at no markup. Optionally use LLMGateway's managed keys with a 5% platform fee on credit usage.

Real-time cost and analytics dashboard

Track requests, tokens, costs, and latency with per-model and per-provider breakdowns over 7 or 30-day windows.

Lightweight setup

Free tier with no credit card required and 30-second setup — low barrier to starting.

Limitations

Observability is analytics-focused rather than deep tracing or request-level debugging with full logs.

Minimal governance and access controls, which makes it difficult to operate as an internal platform across large teams or in regulated environments.

No native support for prompt versioning, templating, environment promotion, or approval workflows.

No enterprise governance features such as team workspaces, RBAC, policy enforcement, or audit logs on standard plans.

Pricing Structure

Free tier included with no credit card required. 5% platform fee on credit usage. Enterprise plans available with custom SLAs, dedicated shards, and volume discounts.

Ideal For / Typical Users

Individual developers and small teams who need simple, reliable access to multiple LLM providers with real-time cost visibility, and who want the flexibility to self-host or use managed cloud without locking into a heavyweight platform.

LiteLLM

LiteLLM is an open-source, self-hosted AI gateway that provides an OpenAI-compatible interface for routing requests across multiple LLM providers. It is commonly deployed as an internal gateway layer that teams run and operate themselves.

Strengths

Unified access to LLM providers:

A single API and pricing layer that enables fast model switching and easy provider comparison during experimentation.

Self-hosted and open source:

Full control over deployment, networking, and data flow.

Extensible architecture:

Can be integrated with custom logging, auth, or policy layers.

Strong community adoption:

Widely used as a lightweight internal gateway.

Limitations

Requires teams to manage infrastructure, scaling, and availability themselves.

Observability is basic by default; advanced token analytics, tracing, and cost attribution require additional tooling.

No native enterprise governance such as RBAC, workspaces, budgets, or audit logs.

No native support for prompt versioning, templating, environment promotion, or approval workflows.

Operational complexity increases significantly as usage scales across teams.

Pricing Structure

Free, open-source self-hosting with paid enterprise plans for hosted and advanced capabilities.

Ideal For / Typical Users

Teams routing across multiple LLM providers while managing the rest of the infrastructure in-house, especially platform teams comfortable operating self-hosted gateways.

Public marketplace reviews are limited. LiteLLM is widely discussed and adopted in open-source and developer communities.

TrueFoundry

TrueFoundry is an MLOps platform that helps teams deploy, monitor, and manage machine learning models and LLM-based applications.

Its AI Gateway component is part of a broader MLOps suite, focused on infrastructure automation, model deployment, and workflow management rather than pure multi-provider orchestration.

The gateway is positioned for teams that already have ML pipelines and need a controlled way to serve LLMs within those workflows.

Strengths

End-to-end MLOps integration:

Tightly connected with model deployment, experiment tracking, and model registry features.

Strong internal infrastructure controls:

Autoscaling, rollout strategies, and Kubernetes-native deployments for teams that prefer infrastructure ownership.

Custom model hosting:

Supports deploying fine-tuned or proprietary LLMs alongside hosted providers.

Monitoring and alerts:

Metrics for model performance, resource usage, and API health within the same interface.

Developer workflows:

CI/CD pipelines, environment promotions, and reproducible deployment templates.

Limitations

Primarily built for ML/MLOps teams, not general application developers.

Less focus on cross-provider LLM routing compared to modern AI gateways.

Lacks granular guardrails, policy governance, and cost controls expected in enterprise AI gateways.

Requires infrastructure management (Kubernetes, cloud resources), which may add overhead for teams wanting a hosted solution.

Observability is more ML-focused rather than request-level LLM observability.

Pricing Structure

No broad public free tier; pricing is generally provided on request.

Ideal For / Typical Users

ML platform teams, data science groups, and enterprises building custom model pipelines who want model hosting and serving integrated with their DevOps/MLOps workflows.

Appreciated for its deployment automation and developer workflows; some users note a steeper learning curve around infrastructure setup.

Kong

Kong is a widely adopted API gateway and service connectivity platform used by engineering teams to manage API traffic at scale. Its AI Gateway capabilities are delivered through plugins and extensions built on top of Kong Gateway and Kong Mesh.

Strengths

Robust API management foundation:

Industry-standard rate limiting, authentication, transformations, and traffic policies.

Plugin ecosystem:

AI-related plugins support routing to LLM providers, applying policies, and transforming requests.

Security controls:

Integrates with WAFs, OAuth providers, RBAC, and audit frameworks.

Environment flexibility:

Deployable as OSS, enterprise self-hosted, or cloud-managed via Kong Konnect.

Limitations

AI capabilities are extensions, not core design — lacks deep observability and cost intelligence for LLM workloads

Limited multi-provider LLM orchestration, routing logic, or model-aware optimizations (latency-based routing, canaries, cost-aware fallback).

No built-in LLM guardrails, jailbreak detection, evaluations, or policy enforcement beyond generic API rules.

Requires configuration and DevOps involvement; not optimized for rapid GenAI iteration.

Governance and monitoring are API-centric, not request/token-centric like modern AI gateways.

Pricing Structure

AI gateway is a part of their API gateway offering.

Ideal For / Typical Users

Enterprises already using Kong as their API gateway or service mesh who want to extend existing infrastructure to support basic LLM routing, without adding a new platform.

Praised for reliability and API governance; not typically evaluated for AI-specific workloads.

Maxim AI — Bifrost

Bifrost is the LLM gateway product from Maxim AI, positioned as the fastest enterprise AI gateway available. It is open-source under Apache 2.0 and integrates natively with Maxim's broader evaluation and observability platform.

Strengths

Low Latency:

Adds only ~20 microseconds of latency and uses less memory than comparable gateways.

Open Source:

Available under Apache 2.0 with an active community, CLI-first setup, and drop-in compatibility with OpenAI, Anthropic, LiteLLM, Google GenAI, LangChain, and more

Multi-provider support:

Access to 8+ providers and a wide model selection through a unified interface, including support for custom deployed models.

Reliability:

Provider fallback and failover to maintain high availability, with virtual key management for access control per use case.

Limitations

Provider coverage is limited to around 8 providers out of the box, compared to broader alternatives.

Observability dashboard is basic compared to dedicated platforms; deep analytics rely on the broader Maxim product.

No native support for prompt versioning, templating, environment promotion, or approval workflows.

Limited integration ecosystem compared to more established gateways; fewer out-of-the-box connectors to frameworks and observability tools.

Pricing Structure

Open-source with a free tier. Enterprise plans with a 14-day free trial are available on request.

Ideal For / Typical Users

Performance-sensitive development teams and engineers who need maximum throughput with minimal overhead, and teams already using Maxim for AI evaluation who want a unified gateway and observability stack.

Helicone

Helicone is an open-source LLM observability platform and AI gateway, built with a "one-line integration" approach. It provides detailed request logging, prompt management, caching, rate limiting, and fallbacks across 100+ models through an OpenAI-compatible gateway.

Strengths

Minimal setup

Change one line of code and all requests are automatically logged.

Deep request-level observability

Every request is logged with latency, token counts, cost, and metadata. Sessions, user analytics, custom properties, and HQL (query language) for filtering and segmenting logs.

Prompt management

Version and manage prompts directly in the platform with a playground for testing, dataset curation, and scoring.

Open source

Self-hostable with a transparent codebase; 5,200+ GitHub stars

Limitations

Governance, RBAC, workspaces, and enterprise access controls are limited compared to purpose-built enterprise gatewaysNo built-in LLM observability, token analytics, cost tracking, or evaluation capabilities.

No native guardrails such as PII redaction, jailbreak detection, or content moderation.

Data retention is capped at 7 days on the free plan and 1 month on Pro; unlimited retention requires Team or Enterprise plans.

Not ideal for rapid GenAI iteration or multi-provider experimentation.

Pricing Structure

Free tier with 10,000 requests/month. Pro at $79/month, Team at $799/month. Enterprise pricing on request. Usage-based pricing applies above the free tier on paid plans.

Ideal For / Typical Users

Individual developers, small AI teams, and early-stage companies who want deep request logging and prompt observability with minimal integration effort and a low-friction free tier.

Custom Gateway Solutions

Some engineering teams choose to build their own AI gateway or proxy layer using open-source components, internal microservices, or cloud primitives.

These “custom gateways” often start as simple proxies for OpenAI or Anthropic calls and gradually evolve into internal platforms handling routing, logging, and key management.

While they offer full control, they require significant ongoing engineering, security, and maintenance investment to keep up with the rapidly expanding LLM ecosystem.

Strengths

Full customization:

Every component can be tailored to internal needs.

Complete control over data flow:

Easy to enforce organization-specific data policies or network isolation.

Deep integration with internal stacks:

Fits seamlessly into proprietary systems, legacy infrastructure, or internal developer platforms.

Potentially lower cost at a very small scale:

If only supporting a single provider or simple routing logic.

Limitations

High engineering burden: implementing and maintaining routing, retries, fallbacks, logging, key rotation, credentials, dashboards, and guardrails is complex.

Difficult to keep up with fast-moving LLM features—function calling, new provider APIs, model updates, safety settings, embeddings, multimodal inputs, etc.

No built-in observability: requires building token tracking, latency metrics, per-user analytics, cost dashboards, and log pipelines.

Security and governance debt: RBAC, workspace separation, policy enforcement, audit logs, and compliance controls require significant effort.

Reliability challenges: implementing circuit breakers, provider failover, shadow testing, canary routing, and load balancing is nontrivial.

Higher opportunity cost — engineers spend time maintaining infrastructure rather than building AI products.

Pricing Structure

No fixed pricing — the cost is measured in engineering hours, cloud resources, and operational overhead. Over time, most teams report that maintaining custom gateways costs more than adopting a purpose-built platform, especially once governance, observability, and multi-provider support become requirements.

Ideal For / Typical Users

Highly specialized engineering teams with unique compliance or architectural constraints that cannot be met by commercial platforms, and who have the bandwidth to maintain internal infrastructure.

Key capabilities of LLM Gateways

Unified Endpoint

Connect to any LLM via a single API.

Multi-Model Routing

Dynamically route requests to OpenAI, Anthropic, Mistral, etc.

Caching & Rate Limiting

Optimize latency and cost.

Key Management

Securely store and rotate provider keys.

Observability:

Track token usage, latency, and performance.

Governance & Guardrails:

Enforce content policies and access control.

Why Portkey is different

Governance at scale

Built for enterprise control from day one

Workspaces and role-based access

Budgets, rate limits, and quotas

Data residency controls

SSO, SCIM, audit logs

Policy-as-code for consistent enforcement

HIPAA

COMPLIANT

GDPR

Complete visibility into every request

Token and cost analytics

Latency traces

Transformed logs for debugging

Workspace, team, and model-level insights

Error clustering and performance trends

Unified SDKs and APIs

A single interface for 1,600+ LLMs and embeddings across OpenAI, Anthropic, Mistral, Gemini, Cohere, Bedrock, Azure, and local deployments.

Guardrails and safety

PII redaction

Jailbreak detection

Toxicity and safety filters

Request and response policy checks

Moderation pipelines for agentic workflows

Prompt and context management

Template versioning, variable substitution, environment promotion, and approval flows to maintain clean, reproducible prompt pipelines.

Portkey unifies everything teams need to build, scale, and govern GenAI systems — with the reliability and control that production demands

Reliability automation

Sophisticated failover and

routing built into the gateway:

Fallbacks and retries

Canary and A/B routing

Latency and cost-based selection

Provider health checks

Circuit breakers and dynamic throttling

Architecture overview

Portkey sits at the center of the GenAI stack as the control plane that every model call and tool interaction passes through. When an application, agent, or backend service sends a request, it first reaches Portkey’s gateway layer. This is where authentication, access rules, key management, routing logic, caching, rate limits, guardrails, and reliability controls are applied. Instead of each application implementing its own logic, Portkey standardizes this behavior across the entire organization.

From the gateway, the request is routed to the appropriate model provider or internal model. Portkey supports the full ecosystem — OpenAI, Anthropic, Mistral, Google Gemini, Cohere, Hugging Face, Bedrock, Azure OpenAI, Ollama, and custom enterprise-hosted LLMs. Provider differences, credentials, model versions, and health checks are all abstracted behind a single consistent interface.

Portkey also routes MCP tool calls. MCP servers are registered and discovered through Portkey, allowing agents to call external tools with the same governance, access controls, and observability as model requests.

Tool executions, context loads, errors, and policies are all enforced within the same control plane. Every interaction—whether a model call or a tool invocation—is captured by Portkey’s observability layer. Logs, transformed traces, latency breakdowns, token and cost analytics, error patterns, and replay data flow into a unified telemetry stream. This gives teams a real-time understanding of system behavior, performance, and spend without instrumenting anything manually.

All of this rolls up into Portkey’s governance layer, where organizations manage workspaces, roles, budgets, rate limits, data residency, audit trails, and policy-as-code. This is where compliance and operational controls are defined and consistently enforced across teams and applications.

Together, these layers form a single architecture: applications send requests, Portkey governs and routes them, providers execute them, and all telemetry flows back into a governed control plane. It replaces the fragmented mix of proxies, scripts, dashboards, and ad-hoc control mechanisms with one unified platform built for production-grade GenAI.

Use Cases

Portkey is designed to support a wide range of GenAI applications, from early prototypes evolving into production systems to large-scale enterprise deployments.

Teams building AI copilots, agents, and workflows use Portkey as the backbone that keeps every model call and tool invocation reliable, governed, and traceable as projects grow from prototype to production.

As applications span multiple models, providers, and tools, Portkey ensures consistent behavior across all workflows without requiring developers to rewrite internal logic or maintain provider-specific complexity.

Enterprise AI teams use Portkey to centralize access to models and tools, replacing scattered provider keys, credentials, and policies with a single control plane that enforces budgets, quotas, permissions, and compliance rules.

Organizations with many teams and departments rely on Portkey to standardize AI access, making onboarding easier and ensuring that usage, safety, and governance requirements are applied uniformly.

Product and platform engineering teams use Portkey to move from experimentation to stable production, with clear visibility into latency, costs, token usage, and model behavior—without building internal dashboards or handling vendor fragmentation.

Companies using both hosted and internally deployed models use Portkey to unify provider and self-hosted LLM access behind the same gateway, making routing, governance, and observability consistent across all inference sources.

Integrations

Portkey connects to the full GenAI ecosystem through a unified control plane. Every integration works through the same consistent gateway. This gives teams one place to manage routing, governance, cost controls, and observability across their entire AI stack.

Portkey supports integrations with all major LLM providers, including OpenAI, Anthropic, Mistral, Google Gemini, Cohere, Hugging Face, AWS Bedrock, Azure OpenAI, and many more. These connections cover text, vision, embeddings, streaming, and function calling, and extend to open-source and locally hosted models.

Beyond models, Portkey integrates directly with the major cloud AI platforms. Teams running on AWS, Azure, or Google Cloud can route requests to managed model endpoints, regional deployments, private VPC environments, or enterprise-hosted LLMs—all behind the same Portkey endpoint.

Integrations with systems like Palo Alto Networks Prisma AIRS, Patronus, and other content-safety and compliance engines allow organizations to enforce redaction, filtering, jailbreak detection, and safety policies directly at the gateway level. These controls apply consistently across every model, provider, app, and tool.

Frameworks such as LangChain, LangGraph, CrewAI, OpenAI Agents SDK, etc. route all of their model calls and tool interactions through Portkey, ensuring agents inherit the same routing, guardrails, governance, retries, and cost controls as core applications.

Portkey integrates with vector stores and retrieval infrastructure, including platforms like Pinecone, Weaviate, Chroma, LanceDB, etc. This allows teams to unify their retrieval pipelines with the same policy and governance layer used for LLM calls, simplifying both RAG and hybrid search flows.

Tools such as Claude Code, Cursor, LibreChat, and OpenWebUI can send inference requests through Portkey, giving organizations full visibility into token usage, latency, cost, and user activity, even when these apps run on local machines.

For teams needing deep visibility, Portkey integrates with monitoring and tracing systems like Arize Phoenix, FutureAGI, Pydantic Logfire and more. These systems ingest Portkey’s standardized telemetry, allowing organizations to correlate model performance with application behavior.

Finally, Portkey connects with all major MCP clients, including Claude Desktop, Claude Code, Cursor, VS Code extensions, and any MCP-capable IDE or agent runtime.

Across all of these categories, Portkey acts as the unifying operational layer. It replaces a fragmented integration landscape with a single, governed, observable, and reliable control plane for the entire GenAI ecosystem.

Get started

Portkey gives teams a single control plane to build, scale, and govern GenAI applications in production with multi-provider support, built-in safety and governance, and end-to-end visibility from day one.