Enterprise-grade AI Gateway

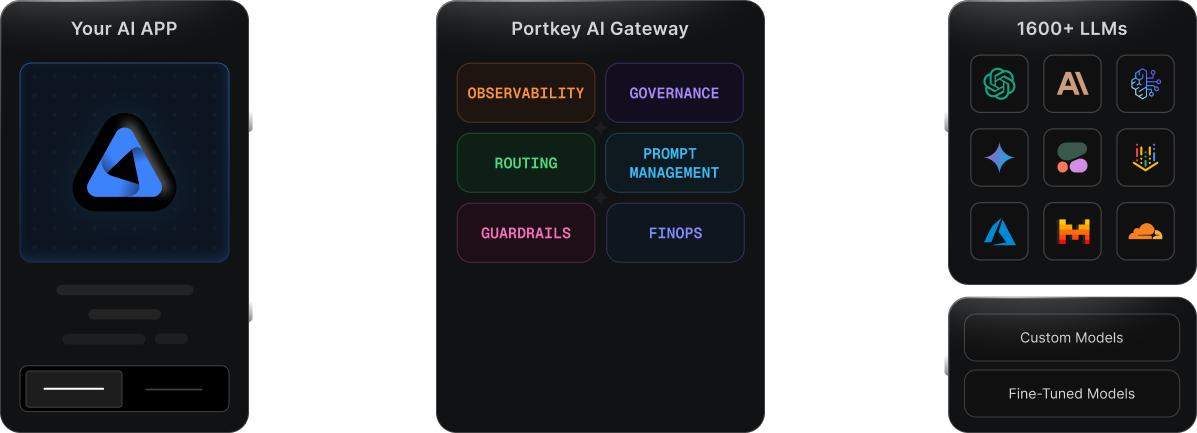

Connect, manage, and secure AI interactions across 3000+ LLMs with centralized control, real-time monitoring, and enterprise-grade governance.

npx @portkey-ai/gateway

Powering 3000+ GenAI teams

Lightning-fast, battle-tested AI Gateway

Secure and reliable AI gateway with consistently fast responses, worldwide.

Access any model via a unified API

Connect to 1600+ LLMs and providers across different modalities through the AI gateway, with no separate integration required.
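As a rough illustration of the unified API, the sketch below builds the same OpenAI-style chat request for two different providers; only the provider header, key, and model name change. It assumes a gateway started locally with `npx @portkey-ai/gateway` and listening on `http://localhost:8787`, and a provider-selection header named `x-portkey-provider`. Treat the address and header name as assumptions to confirm in the gateway documentation. `build_chat_request` is a hypothetical helper, and the code only constructs the request without sending it.

```python
import json
from dataclasses import dataclass

# Assumed address of a locally started gateway; confirm for your deployment.
GATEWAY_URL = "http://localhost:8787/v1/chat/completions"


@dataclass
class ChatRequest:
    url: str
    headers: dict
    body: str  # JSON-encoded payload


def build_chat_request(provider: str, api_key: str, model: str, prompt: str) -> ChatRequest:
    """Hypothetical helper: one OpenAI-style body for every provider."""
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",
        "x-portkey-provider": provider,  # assumed provider-selection header
    }
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return ChatRequest(url=GATEWAY_URL, headers=headers, body=json.dumps(payload))


if __name__ == "__main__":
    # Example model names; only the routing details differ between the two requests.
    for provider, model in [("openai", "gpt-4o-mini"), ("anthropic", "claude-3-5-haiku-latest")]:
        request = build_chat_request(provider, "YOUR_PROVIDER_KEY", model, "Hello")
        print(request.headers["x-portkey-provider"], json.loads(request.body)["model"])
```

Sending the request is then an ordinary HTTP POST of `body` with `headers` to `url`, using whichever HTTP client the application already has.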

Ensure reliability and uptime with smart routing

Dynamically switch between models, distribute workloads, and ensure failover with configurable rules.

Optimize costs with caching

Reduce latency and save costs with simple and semantic caching for repeat requests.
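A minimal sketch of the two caching modes, assuming an in-memory store and a caller-supplied embedding function. The simple (exact-match) lookup hashes a canonical JSON form of the request; the semantic lookup returns the stored response whose prompt embedding is closest by cosine similarity, provided it clears a threshold. `ResponseCache`, `toy_embed`, and the 0.9 threshold are hypothetical and do not describe Portkey's implementation; a production semantic cache would use a real embedding model and a vector index.

```python
import hashlib
import json
import math
from typing import Callable, List, Optional, Tuple

Embedder = Callable[[str], List[float]]


def cache_key(request: dict) -> str:
    """Hash a canonical JSON form so identical requests map to the same key."""
    canonical = json.dumps(request, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ResponseCache:
    """Hypothetical cache: exact match first, then the most similar stored prompt."""

    def __init__(self, embed: Embedder, threshold: float = 0.9) -> None:
        self.embed = embed
        self.threshold = threshold
        self.exact: dict = {}
        self.semantic: List[Tuple[List[float], str]] = []

    def put(self, request: dict, prompt: str, response: str) -> None:
        self.exact[cache_key(request)] = response
        self.semantic.append((self.embed(prompt), response))

    def get(self, request: dict, prompt: str) -> Optional[str]:
        hit = self.exact.get(cache_key(request))
        if hit is not None:
            return hit  # simple cache hit
        query = self.embed(prompt)
        scored = [(cosine(query, vector), response) for vector, response in self.semantic]
        if scored:
            score, response = max(scored, key=lambda pair: pair[0])
            if score >= self.threshold:
                return response  # semantic cache hit
        return None  # miss: forward the request to the provider


def toy_embed(text: str) -> List[float]:
    """Stand-in embedder (word counts over a tiny vocabulary), for illustration only."""
    vocab = ["reset", "password", "refund", "order"]
    words = text.lower().replace("?", "").split()
    return [float(words.count(word)) for word in vocab]
```

Checking the exact-match path first keeps the common case cheap: no embedding call is needed when the identical request has been seen before.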

Collaborative libraries, templates, and intelligent organization help teams craft better prompts.

Secure and simplify key management

Store LLM keys safely in Portkey’s vault and manage access with virtual keys. Rotate, revoke, and monitor key usage with full control.
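One way to picture virtual keys, sketched under the assumption of a simple in-memory vault: applications hold only an opaque virtual key, and the gateway resolves it to the real provider key on each request. That indirection is what lets a key be rotated or revoked without redeploying clients. `KeyVault` and its methods are hypothetical names for illustration, not Portkey's API; a real vault would also encrypt secrets at rest and persist them.

```python
import secrets
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class VaultEntry:
    provider: str
    secret: str  # real provider key, never handed to clients
    revoked: bool = False
    uses: int = 0


class KeyVault:
    """Hypothetical vault: clients hold opaque IDs, the gateway resolves them."""

    def __init__(self) -> None:
        self._entries: Dict[str, VaultEntry] = {}

    def create(self, provider: str, secret: str) -> str:
        virtual_key = f"vk-{secrets.token_hex(8)}"
        self._entries[virtual_key] = VaultEntry(provider, secret)
        return virtual_key

    def resolve(self, virtual_key: str) -> Tuple[str, str]:
        """Server-side lookup on each request; fails for unknown or revoked keys."""
        entry = self._entries.get(virtual_key)
        if entry is None or entry.revoked:
            raise PermissionError(f"virtual key {virtual_key!r} is not active")
        entry.uses += 1  # usage monitoring
        return entry.provider, entry.secret

    def rotate(self, virtual_key: str, new_secret: str) -> None:
        # Clients keep the same virtual key; only the stored provider key changes.
        self._entries[virtual_key].secret = new_secret

    def revoke(self, virtual_key: str) -> None:
        self._entries[virtual_key].revoked = True

    def usage(self, virtual_key: str) -> int:
        return self._entries[virtual_key].uses
```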

Advanced capabilities, built-in

Handle large volumes with smart batching

Scale workloads efficiently using provider batch APIs or custom batching, without impacting real-time performance.
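For the batching path, a sketch of two building blocks, assuming requests are queued in memory: splitting a queue into fixed-size batches, and serializing prompts as JSONL in the line format that OpenAI's Batch API documents (`custom_id`, `method`, `url`, `body`). Other providers' batch APIs use different formats, and `chunked` and `to_batch_jsonl` are hypothetical helpers.

```python
import json
from typing import Iterable, Iterator, List


def chunked(items: List, size: int) -> Iterator[List]:
    """Split a queue of requests into fixed-size batches (the last may be smaller)."""
    if size <= 0:
        raise ValueError("batch size must be positive")
    for start in range(0, len(items), size):
        yield items[start:start + size]


def to_batch_jsonl(prompts: Iterable[str], model: str) -> str:
    """One JSON object per line, in the OpenAI Batch API input shape."""
    lines = []
    for i, prompt in enumerate(prompts):
        lines.append(json.dumps({
            "custom_id": f"req-{i}",  # used to match results back to inputs
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {"model": model, "messages": [{"role": "user", "content": prompt}]},
        }))
    return "\n".join(lines)
```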

Tailor LLM responses with fine-tuning

Improve output quality using provider-specific fine-tuning, all through a unified API.

Driving real impact for production AI

requests processed/month

Trusted by AI teams worldwide to handle workloads at scale.

uptime

Battle-tested infrastructure built for reliability, even at scale.

cost efficiency

Driven by intelligent caching, routing strategies, and request batching.

latency

Lightning-fast responses, built for real-time AI experiences.

Go from pilot to production faster

Enterprise-ready AI Gateway for reliable, secure, and fast deployment

Conditional routing

Route requests to providers based on custom conditions.
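A minimal sketch of conditional routing, assuming each request carries a metadata dictionary: rules are checked in order, the first rule whose conditions all match picks the target, and otherwise a default is used. The rule format (equality, or membership when the expected value is a list), the metadata fields, and the target names are all hypothetical; the gateway's own condition syntax may differ.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical rule format: (conditions, target). All conditions must match.
Rule = Tuple[Dict[str, Any], str]


def matches(metadata: Dict[str, Any], conditions: Dict[str, Any]) -> bool:
    for field, expected in conditions.items():
        value = metadata.get(field)
        if isinstance(expected, (list, tuple, set)):
            if value not in expected:  # "one of" condition
                return False
        elif value != expected:  # equality condition
            return False
    return True


def choose_target(metadata: Dict[str, Any], rules: List[Rule], default: str) -> str:
    """Return the target of the first matching rule, else the default."""
    for conditions, target in rules:
        if matches(metadata, conditions):
            return target
    return default


RULES: List[Rule] = [
    ({"user_plan": "enterprise"}, "large-model"),
    ({"region": ["eu-west", "eu-central"]}, "eu-hosted-model"),
]
```

Evaluating rules in order makes precedence explicit: an enterprise user in the EU matches the first rule and never reaches the region rule.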

Multimodal by design

Supports vision, audio, and image generation providers and models.

Fallbacks

Switch between LLMs during failures or errors.
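The fallback behavior can be sketched as an ordered list of targets tried one after another, assuming each target is represented by a plain function that either returns a response or raises. `complete_with_fallback` and `AllTargetsFailed` are hypothetical names; a real client would also decide which errors (for example rate limits or 5xx responses) should trigger a fallback instead of catching everything.

```python
from typing import Callable, List, Sequence, Tuple

# Each target is (name, call); `call` stands in for a provider request.
Target = Tuple[str, Callable[[str], str]]


class AllTargetsFailed(Exception):
    pass


def complete_with_fallback(prompt: str, targets: Sequence[Target]) -> Tuple[str, str]:
    """Try each target in order; return (target_name, response) from the first success."""
    errors: List[str] = []
    for name, call in targets:
        try:
            return name, call(prompt)
        except Exception as exc:  # in practice: rate limits, 5xx, timeouts
            errors.append(f"{name}: {exc}")
    raise AllTargetsFailed("; ".join(errors))
```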

Automatic retries

Recover failed requests with automatic retries.
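Automatic retries are commonly implemented with exponential backoff and jitter; the sketch below assumes that strategy and retries only the listed exception types. The `retry` helper, its defaults, and the choice of `ConnectionError` and `TimeoutError` as retryable are assumptions for illustration. The `sleep` parameter is injectable so the waits can be observed in tests.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry(
    call: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `call`, retrying retryable errors with exponential backoff plus jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return call()
        except retry_on:
            if attempt == attempts:
                raise  # out of attempts: surface the last error
            delay = base_delay * 2 ** (attempt - 1)  # 0.5s, 1s, 2s, ... by default
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries
    raise AssertionError("unreachable")
```

Errors outside `retry_on` (for example a malformed request) propagate immediately, since retrying them would only repeat the failure.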

Load balancing

Use the AI gateway to distribute network traffic across LLMs.
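Weighted load balancing can be sketched as a random choice in which each target's probability is proportional to its weight. `pick_weighted` and the 70/30 split in the usage example are hypothetical; passing a seeded `random.Random` keeps the selection reproducible.

```python
import random
from collections import Counter
from typing import Sequence, Tuple


def pick_weighted(targets: Sequence[Tuple[str, float]], rng: random.Random) -> str:
    """Choose one target with probability proportional to its weight."""
    names = [name for name, _ in targets]
    weights = [weight for _, weight in targets]
    if not names or any(w < 0 for w in weights) or sum(weights) <= 0:
        raise ValueError("need non-negative weights with a positive total")
    return rng.choices(names, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(7)  # seeded so the run is reproducible
    targets = [("provider-a", 0.7), ("provider-b", 0.3)]  # hypothetical 70/30 split
    print(Counter(pick_weighted(targets, rng) for _ in range(1000)))
```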

OpenAI real-time API

Our AI gateway records real-time API requests, including cost and guardrail violations.

Canary testing

Test new models and prompts on a small share of traffic without affecting production.
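A common way to run a canary, sketched here as an assumption rather than Portkey's mechanism, is to hash a stable key such as a user ID into one of 10,000 buckets and send only a small percentage of buckets to the new model. Because the hash is deterministic, each user stays on the same side of the split across requests. `in_canary`, `pick_model`, and the 5% default are hypothetical.

```python
import hashlib


def in_canary(request_key: str, canary_percent: float) -> bool:
    """Deterministically place a stable key (e.g. a user ID) in or out of the canary."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < canary_percent * 100


def pick_model(user_id: str, stable: str, canary: str, canary_percent: float = 5.0) -> str:
    """Route the canary bucket to the new model and everyone else to the stable one."""
    return canary if in_canary(user_id, canary_percent) else stable
```

Raising `canary_percent` gradually widens the rollout; users already in the canary stay in it, because a bucket below the old threshold is also below the new one.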

Request timeouts

Terminate requests that run too long, then handle the error or send a new request.
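A request timeout can be sketched with `asyncio.wait_for`, which cancels the pending call once the limit passes; the caller then either handles the error or sends a new request. `call_with_timeout` and its `on_timeout` hook are hypothetical names, and the coroutines passed in stand in for real provider calls.

```python
import asyncio
from typing import Awaitable, Callable, Optional


async def call_with_timeout(
    make_call: Callable[[], Awaitable[str]],
    timeout_s: float,
    on_timeout: Optional[Callable[[], Awaitable[str]]] = None,
) -> str:
    """Cancel the call if it exceeds `timeout_s`, then retry elsewhere or raise."""
    try:
        return await asyncio.wait_for(make_call(), timeout=timeout_s)
    except asyncio.TimeoutError:
        if on_timeout is None:
            raise  # let the caller handle the error
        return await on_timeout()  # e.g. send a new request to another target
```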

Files

Upload files to the AI gateway and reference the content in your requests.

Trusted by Fortune 500s & Startups

Portkey is easy to set up, and the ability for developers to integrate with LLMs is great. Overall, it has significantly sped up our development process.

Patrick L,

Founder and CPO, QA.tech

With 30 million policies a month, managing over 25 GenAI use cases became a pain. Portkey helped with prompt management, tracking costs per use case, and ensuring our keys were used correctly. It gave us the visibility we needed into our AI operations.

Prateek Jogani,

CTO, Qoala

Portkey stood out among AI Gateways we evaluated for several reasons: excellent, dedicated support even during the proof of concept phase, easy-to-use APIs that reduce time spent adapting code for different models, and detailed observability features that give deep insights into traces, errors, and caching.

AI Leader,

Fortune 500 Pharma Company

Portkey is a no-brainer for anyone using AI in their GitHub workflows. It has saved us thousands of dollars by caching tests that don't require reruns, all while maintaining a robust testing and merge platform. This prevents merging PRs that could degrade production performance. Portkey is the best caching solution for our needs.

Kiran Prasad,

Senior ML Engineer, Ario

Well done on creating such an easy-to-use and navigate product. It’s much better than other tools we’ve tried, and we saw immediate value after signing up. Having all LLMs in one place and detailed logs has made a huge difference. The logs give us clear insights into latency and help us identify issues much faster. Whether it's model downtime or unexpected outputs, we can now pinpoint the problem and address it immediately. This level of visibility and efficiency has been a game-changer for our operations.

Oras Al-Kubaisi,

CTO, Figg

Used by GenAI teams across the world!

Latest guides and resources

Why Portkey is the right AI Gateway for you

Discover why Portkey's purpose-built AI Gateway fulfills the unique demands...

Beyond the Hype: The Enterprise AI Blueprint You Need Now...

The Gen AI wave isn't just approaching—it's already crashed over every industry...

LLMs in Prod 2025: Insights from 2 Trillion+ Tokens

Real-world analysis of 2 Trillion+ production tokens across 90+ regions on Portkey's...

Frequently Asked Questions

Will Portkey increase the latency of my API requests?

What happens if a provider fails or hits a rate limit?

Will Portkey scale if my app explodes?

Do you support SSO?

Can we control which models or providers are allowed?

Start building your AI apps with Portkey today

Everything you need to prototype, test, and scale AI workflows, fast.

© 2025 Portkey, Inc. All rights reserved

HIPAA compliant
