Production stack for Gen AI builders

Portkey equips AI teams with everything they need to go to production—Gateway, Observability, Guardrails, Governance, and Prompt Management, all in one platform.

Portkey packs in AI Gateway, Observability, Guardrails, Governance, and Prompt Management features that have helped 100s of AI teams go to production with confidence.

Observability

Logs

Virtual Keys

Guardrails

Prompt

Portkey is the most performant and secure gateway that integrates with 250+ AI models, giving you full control, visibility, and protection for your generative AI apps in production.

Trusted by 650+ AI Teams

tokens processed daily

GitHub stars and counting

LLMs supported

"Portkey is a no-brainer for anyone using AI in their GitHub workflows. The reason is simple: the risk-reward balance is clear. Portkey has saved us thousands of dollars by caching tests that would otherwise run repeatedly without any changes."

Kiran P

Sr. Machine Learning Engineer, Ario

Why Portkey ♥

Caching

Clear ROI

Easy Set-Up

Figg

Haptik

Qoala

Unified Access

Introducing the AI Gateway Pattern

Build. Monitor. Scale.

End-to-end LLM Orchestration

Make your AI initiatives secure, reliable, and cost-efficient

Stop wasting time integrating models

Portkey lets you access 250+ LLMs via a unified API, so you can focus on building, not managing.

Eliminate the guesswork

Keep AI outputs in check

No need to hard-code prompts

Production-ready agent workflows

We are heavy users of Portkey. They have thought about the needs of a company like ours and fit so well. Kudos on a great job done!

Abhishek Gutgutia - CTO, Adamx.ai

Plug & Play

Integrate in a minute

Integrate Portkey in just 3 lines of code—no changes to your existing stack.

Node.js

Python

OpenAI JS

OpenAI Py

cURL

    import Portkey from 'portkey-ai';

    const portkey = new Portkey();

    // Send a chat completion request through Portkey
    const chat = await portkey.chat.completions.create({
      messages: [{ role: 'user', content: 'Say this is a test' }],
      model: 'gpt-4',
    });

    console.log(chat.choices);

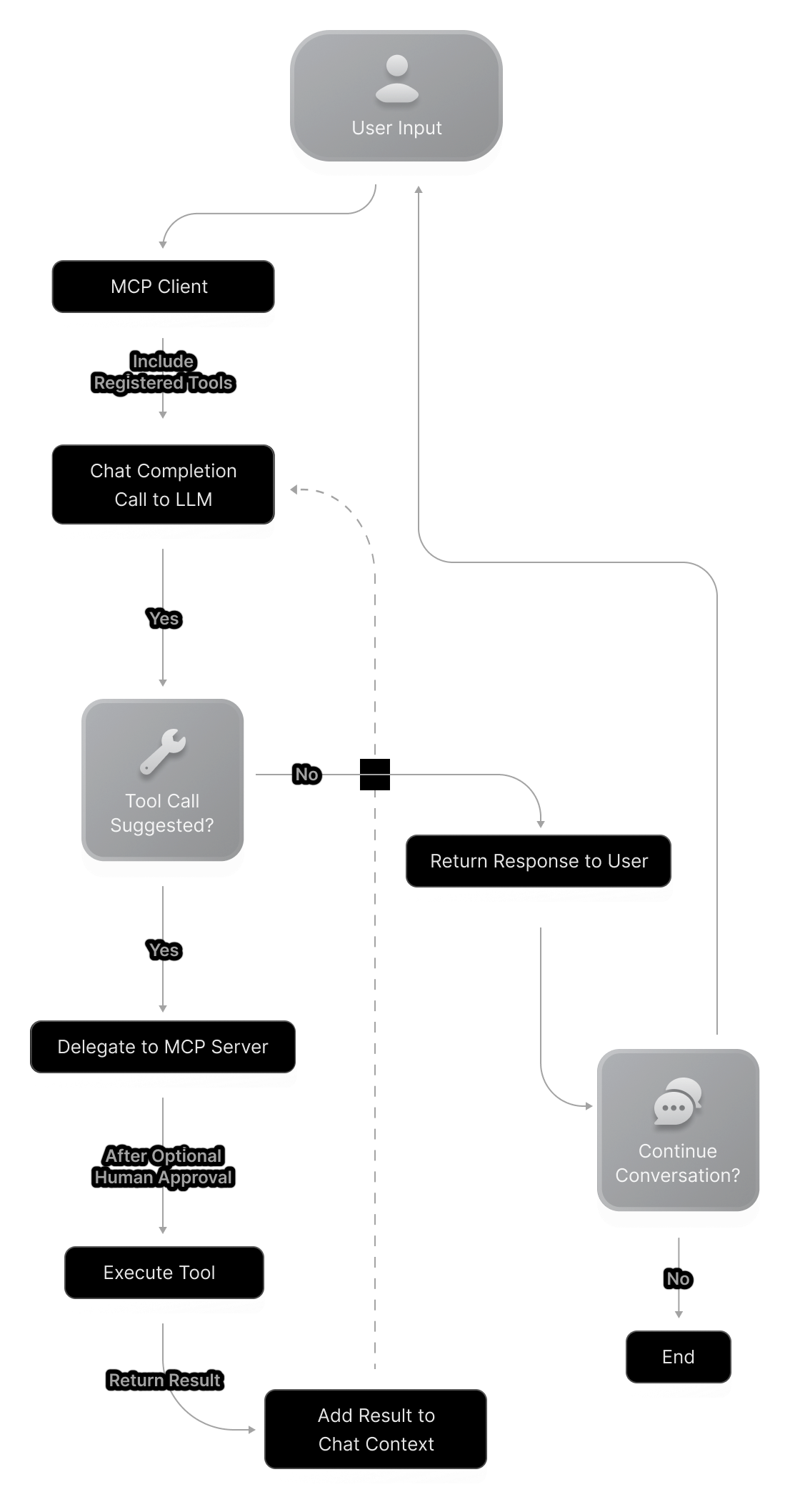

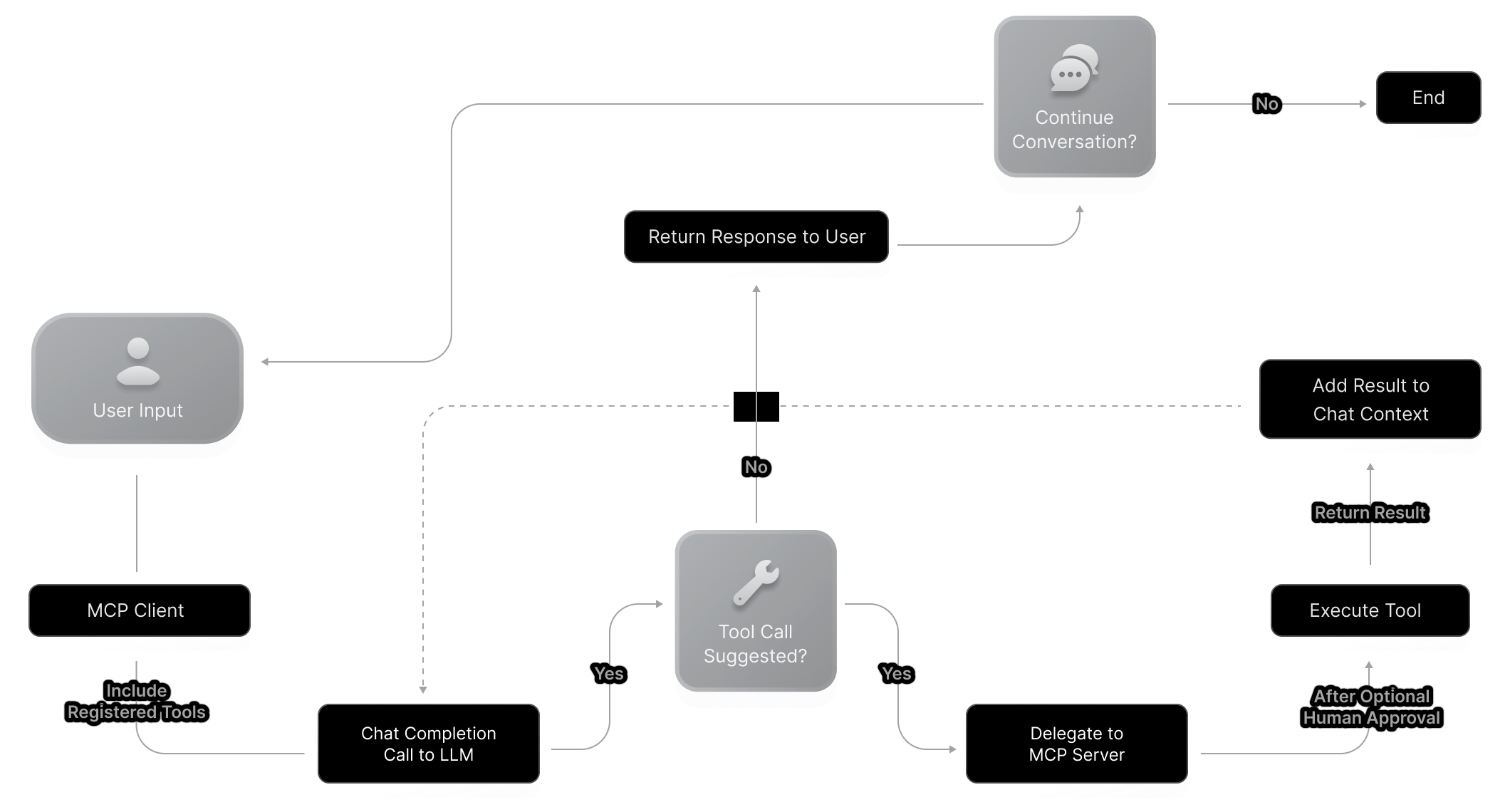

Model Context Protocol

Build AI Agents with Portkey's MCP Client

Tool calling shouldn’t slow you down. Portkey’s MCP client simplifies configurations, strengthens integrations, and eliminates ongoing maintenance, so you can focus on building, not fixing.

Community Driven

Open source with active community development

Enterprise Ready

Built for production use with security in mind

Future Proof

Evolving standard that grows with the AI ecosystem

Democratized Access

Take the driver's seat with AI Governance

Using Portkey, you can build a production-ready platform to manage, observe, and govern GenAI for multiple teams.

Collaborate while staying secure

Organize your resources in a clear hierarchy and use Role-Based Access Control (RBAC) so teams can collaborate without giving up security.

[Illustration: a masked key (3L9*********I1A) for "Nexora Org" with the permissions Create, Update, Delete, Read, and List]

Cut costs, not corners

Monitor performance and costs in real time. Set budget limits and optimize resource allocation to reduce AI expenses.
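To make the idea of a budget limit concrete, here is a minimal sketch of a spend tracker that refuses requests once a cap would be exceeded. It is an illustration only and not Portkey's API: the `BudgetTracker` class, its methods, and the dollar amounts are all hypothetical.

```javascript
// Illustrative sketch only. BudgetTracker and its methods are hypothetical
// names, not part of Portkey's SDK.
class BudgetTracker {
  constructor(limitUsd) {
    this.limitUsd = limitUsd; // hard cap for the period
    this.spentUsd = 0;        // running total of recorded spend
  }

  // True if a request with this estimated cost still fits under the cap.
  canSpend(estimatedCostUsd) {
    return this.spentUsd + estimatedCostUsd <= this.limitUsd;
  }

  // Record the actual cost of a completed request.
  record(costUsd) {
    this.spentUsd += costUsd;
  }

  // Budget left for the period, never negative.
  remaining() {
    return Math.max(0, this.limitUsd - this.spentUsd);
  }
}

const teamBudget = new BudgetTracker(10);
teamBudget.record(4);
console.log(teamBudget.canSpend(5), teamBudget.remaining());
```

In a real deployment the check would typically sit in front of every model call, with costs derived from token usage rather than passed in by hand.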

"We evaluated multiple tools, but ultimately chose Portkey, and we’re glad we did. The platform has become an essential part of our workflow. Honestly, we can’t imagine working without it anymore!"

Deepanshu Setia

Machine Learning Lead

Keep it secure with PII redaction

Portkey automatically redacts sensitive data from your requests before they are sent to the LLM.
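As a rough illustration of what redaction before sending means, the sketch below masks email addresses and phone-like numbers in chat messages. It is not Portkey's implementation: the `redactPII` and `redactMessages` helpers and the two regular expressions are assumptions made for this example, and production-grade PII detection covers far more cases.

```javascript
// Illustrative sketch only; not Portkey's implementation.
// The patterns below are simplistic assumptions for demonstration.
const PII_PATTERNS = [
  { label: 'EMAIL', regex: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g },
  { label: 'PHONE', regex: /\+?\d[\d\s().-]{7,}\d/g },
];

// Replace every match of each pattern with a bracketed label.
function redactPII(text) {
  return PII_PATTERNS.reduce(
    (redacted, { label, regex }) => redacted.replace(regex, `[${label}]`),
    text,
  );
}

// Redact the content of each chat message, leaving other fields untouched.
function redactMessages(messages) {
  return messages.map((m) => ({ ...m, content: redactPII(m.content) }));
}

const safe = redactMessages([
  { role: 'user', content: 'Email jane@example.com or call +1 415-555-0100' },
]);
console.log(safe[0].content);
```

The redacted messages, rather than the originals, are what would then be passed to the model call.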

Stay in control with full visibility

Track every action with detailed activity logs across any resource, making it easy to monitor and investigate incidents.

One of the things I really appreciate about Portkey is how quickly it helps you scale reliability. It also centralizes prompt management and includes built-in observability and metrics, which makes monitoring and optimization much easier.

JD Fiscus

Director of R&D - AI, RVO Health

Single Sign-On

Onboard your teams instantly and have Portkey follow your governance rules from day 0.

Not only do they have the best tool in the market (and we have looked at many competitors), they have been responsive to feedback since Day 1.

Chief Product Officer - Education

Backed by experts

Recognised by industry leaders

Setting the standard for LLMOps, we're building what the industry counts on.

Faster Launch

With a full-stack ops platform, focus on building your app—fast.

Latency

Cloudflare Workers power our APIs with latencies under 40ms—blazing fast

Commitment

We’ve scaled LLMs for 3+ years—let’s make your app win.

Featured in analyst discussions on emerging AI infrastructure and tooling.

Top-rated on G2 by developers and AI teams building secure, production-grade GenAI applications.

#2 Product of the Day on Product Hunt. Loved by builders scaling GenAI apps with speed and confidence.

Acknowledged, endorsed, and trusted by leading industry experts and innovators

Trusted by Fortune 500s & Startups

Portkey is easy to set up, and the ability for developers to share credentials with LLMs is great. Overall, it has significantly sped up our development process.

Patrick L,

Founder and CPO, QA.tech

With 30 million policies a month, managing over 25 GenAI use cases became a pain. Portkey helped with prompt management, tracking costs per use case, and ensuring our keys were used correctly. It gave us the visibility we needed into our AI operations.

Prateek Jogani,

CTO, Qoala

Portkey stood out among AI Gateways we evaluated for several reasons: excellent, dedicated support even during the proof of concept phase, easy-to-use APIs that reduce time spent adapting code for different models, and detailed observability features that give deep insights into traces, errors, and caching.

AI Leader,

Fortune 500 Pharma Company

Portkey is a no-brainer for anyone using AI in their GitHub workflows. It has saved us thousands of dollars by caching tests that don't require reruns, all while maintaining a robust testing and merge platform. This prevents merging PRs that could degrade production performance. Portkey is the best caching solution for our needs.

Kiran Prasad,

Senior ML Engineer, Ario

Well done on creating such an easy-to-use and navigate product. It’s much better than other tools we’ve tried, and we saw immediate value after signing up. Having all LLMs in one place and detailed logs has made a huge difference. The logs give us clear insights into latency and help us identify issues much faster. Whether it's model downtime or unexpected outputs, we can now pinpoint the problem and address it immediately. This level of visibility and efficiency has been a game-changer for our operations.

Oras Al-Kubaisi,

CTO, Figg

Used by ⭐️ 16,000+ developers across the world

Latest guides and resources

The enterprise AI blueprint you need now

The Gen AI wave isn't just approaching—it's already crashed over every industry...

Portkey+MongoDB: The Bridge to Production-Ready AI

Use Portkey's AI Gateway with MongoDB to integrate AI and manage data efficiently.

Bring Your Agents to Production with Portkey

Make your agent workflows production-ready by integrating with

The last AI platform you’ll need

Monitor, optimize, and scale your LLM apps.

Products

© 2024 Portkey, Inc. All rights reserved

HIPAA

COMPLIANT

GDPR
