Documentation Index

Fetch the complete documentation index at: https://docs.portkey.ai/docs/llms.txt

Use this file to discover all available pages before exploring further.

This feature is available on all Portkey plans. Here’s how to get started:

Python

NodeJS

OpenAI NodeJS

OpenAI Python

cURL

from portkey_ai import AsyncPortkey as Portkey, PORTKEY_GATEWAY_URL

import asyncio

async def main():

client = Portkey(

api_key="PORTKEY_API_KEY",

provider="@PROVIDER",

base_url=PORTKEY_GATEWAY_URL,

)

async with client.beta.realtime.connect(model="gpt-4o-realtime-preview-2024-10-01") as connection: #replace with the model you want to use

await connection.session.update(session={'modalities': ['text']})

await connection.conversation.item.create(

item={

"type": "message",

"role": "user",

"content": [{"type": "input_text", "text": "Say hello!"}],

}

)

await connection.response.create()

async for event in connection:

if event.type == 'response.text.delta':

print(event.delta, flush=True, end="")

elif event.type == 'response.text.done':

print()

elif event.type == "response.done":

break

asyncio.run(main())

// requires `yarn add ws @types/ws`

import OpenAI from 'openai';

import { OpenAIRealtimeWS } from 'openai/beta/realtime/ws';

import { PORTKEY_GATEWAY_URL } from 'portkey-ai';

const openai = new OpenAI({

baseURL: PORTKEY_GATEWAY_URL,

apiKey: "PORTKEY_API_KEY"

});

const rt = new OpenAIRealtimeWS({ model: '@PROVIDER/gpt-4o-realtime-preview-2024-12-17' }, openai);

// access the underlying `ws.WebSocket` instance

rt.socket.on('open', () => {

console.log('Connection opened!');

rt.send({

type: 'session.update',

session: {

modalities: ['text'],

model: 'gpt-4o-realtime-preview',

},

});

rt.send({

type: 'conversation.item.create',

item: {

type: 'message',

role: 'user',

content: [{ type: 'input_text', text: 'Say a couple paragraphs!' }],

},

});

rt.send({ type: 'response.create' });

});

rt.on('error', (err) => {

// in a real world scenario this should be logged somewhere as you

// likely want to continue procesing events regardless of any errors

throw err;

});

rt.on('session.created', (event) => {

console.log('session created!', event.session);

console.log();

});

rt.on('response.text.delta', (event) => process.stdout.write(event.delta));

rt.on('response.text.done', () => console.log());

rt.on('response.done', () => rt.close());

rt.socket.on('close', () => console.log('\nConnection closed!'));

import asyncio

from openai import AsyncOpenAI

from portkey_ai import PORTKEY_GATEWAY_URL

async def main():

client = AsyncOpenAI(

base_url=PORTKEY_GATEWAY_URL,

api_key="PORTKEY_API_KEY"

)

async with client.beta.realtime.connect(model="@PROVIDER/gpt-4o-realtime-preview") as connection: #replace with the model you want to use

await connection.session.update(session={'modalities': ['text']})

await connection.conversation.item.create(

item={

"type": "message",

"role": "user",

"content": [{"type": "input_text", "text": "Say hello!"}],

}

)

await connection.response.create()

async for event in connection:

if event.type == 'response.text.delta':

print(event.delta, flush=True, end="")

elif event.type == 'response.text.done':

print()

elif event.type == "response.done":

break

asyncio.run(main())

# we're using websocat for this example, but you can use any websocket client

websocat "wss://api.portkey.ai/v1/realtime?model=gpt-4o-realtime-preview-2024-10-01" \

-H "x-portkey-provider: openai" \

-H "x-portkey-provider: PROVIDER" \

-H "x-portkey-OpenAI-Beta: realtime=v1" \

-H "x-portkey-api-key: PORTKEY_API_KEY"

# once connected, you can send your messages as you would with OpenAI's Realtime API

Authorization header.

Fire Away!

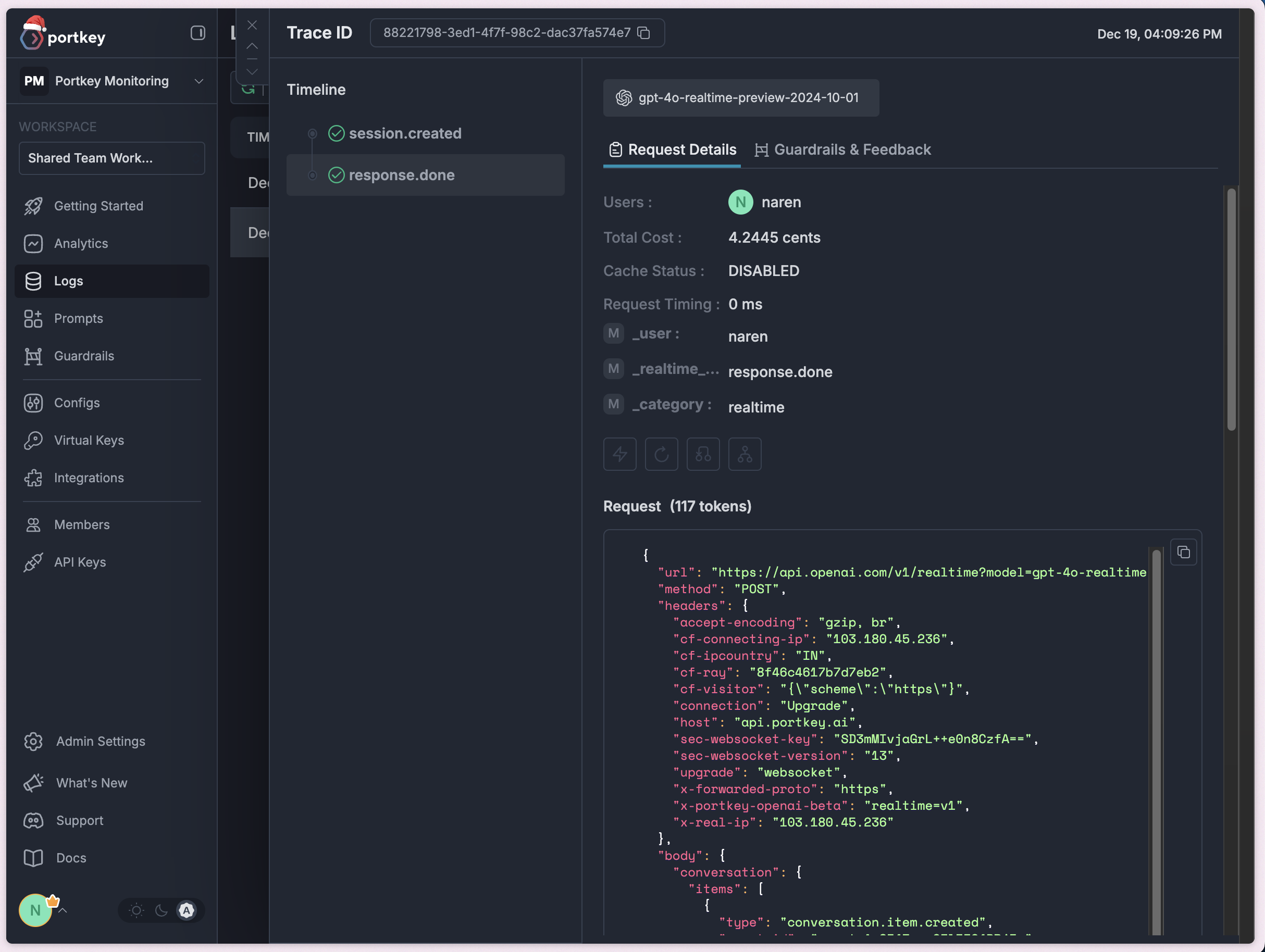

You can see your logs in realtime with neatly visualized traces and cost tracking.

Next Steps