Use Portkey with your Vercel app for:

- Calling 1,600+ LLMs (open & closed)

- Logging & analysing LLM usage

- Caching responses

- Automating fallbacks, retries, timeouts, and load balancing

- Managing, versioning, and deploying prompts

- Continuously improving your app with user feedback

Guide: Create a Portkey + OpenAI Chatbot

1. Create a Next.js app

Go ahead and create a Next.js application, and install ai and portkey-ai as dependencies.

2. Add Authentication keys to .env

- Log in to Portkey

- Go to Model Catalog → Add Provider and add your OpenAI credentials

- Name your provider (e.g., openai-prod); this becomes your provider slug, @openai-prod

- Grab your Portkey API key and add it to your .env file:

.env
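The .env contents are not shown here. As a minimal sketch, assuming you store the key under the hypothetical name PORTKEY_API_KEY (a line such as PORTKEY_API_KEY=your-portkey-key), a small helper can fail fast when the key is missing instead of letting requests reach the gateway without credentials:

```typescript
// Hypothetical helper: the env var name PORTKEY_API_KEY is an assumption, not from the guide.
function readPortkeyKey(
  env: Record<string, string | undefined>,
  name = "PORTKEY_API_KEY",
): string {
  const value = env[name]?.trim();
  if (!value) {
    // Fail fast instead of sending requests without credentials to the gateway.
    throw new Error(`Missing ${name}; add it to your .env file`);
  }
  return value;
}
```

In a Route Handler you would call readPortkeyKey(process.env) once and reuse the result.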

3. Create Route Handler

Create a Next.js Route Handler that uses the Edge Runtime to generate a chat completion and stream it back to Next.js. For this example, create a route handler at app/api/chat/route.ts that calls GPT-4 and accepts a POST request with a messages array of strings:

app/api/chat/route.ts

Here, the chat completion call is sent to the OpenAI model named in the handler (for example, gpt-4 or gpt-3.5-turbo), and the response is streamed back to your Next.js app.
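A minimal sketch of such a handler, assuming Portkey's OpenAI-compatible gateway at https://api.portkey.ai/v1, an x-portkey-api-key header, a PORTKEY_API_KEY environment variable, and the model string @openai-prod/gpt-4 built from the provider slug. It swaps the guide's ai and portkey-ai helpers for plain fetch, so it does not emit the AI SDK's own stream format; it streams plain text deltas instead.

```typescript
// app/api/chat/route.ts (sketch). Plain fetch against the gateway; the URL, header
// name, env var name, and "@openai-prod/gpt-4" model string are assumptions.
export const runtime = "edge";

type ChatMessage = { role: "system" | "user" | "assistant"; content: string };

const GATEWAY_URL = "https://api.portkey.ai/v1"; // assumed gateway base URL

export function buildChatRequest(apiKey: string, messages: ChatMessage[], model = "@openai-prod/gpt-4") {
  return {
    url: `${GATEWAY_URL}/chat/completions`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json", "x-portkey-api-key": apiKey },
      body: JSON.stringify({ model, messages, stream: true }),
    },
  };
}

// Turn one OpenAI-style SSE line ("data: {...}") into its text delta, if it has one.
export function deltaFromSseLine(line: string): string | null {
  if (!line.startsWith("data:")) return null; // comments, event names, blank lines
  const data = line.slice("data:".length).trim();
  if (data === "" || data === "[DONE]") return null;
  const delta = JSON.parse(data)?.choices?.[0]?.delta?.content;
  return typeof delta === "string" ? delta : null;
}

// Re-chunk the SSE text into whole lines and forward only the text deltas.
function sseToText(): TransformStream<string, string> {
  let buffer = "";
  const emit = (line: string, controller: TransformStreamDefaultController<string>) => {
    const delta = deltaFromSseLine(line);
    if (delta) controller.enqueue(delta);
  };
  return new TransformStream<string, string>({
    transform(chunk, controller) {
      buffer += chunk;
      const lines = buffer.split("\n");
      buffer = lines.pop() ?? ""; // keep a partial trailing line for the next chunk
      for (const line of lines) emit(line, controller);
    },
    flush(controller) {
      if (buffer) emit(buffer, controller);
    },
  });
}

export async function POST(req: Request): Promise<Response> {
  const { messages } = (await req.json()) as { messages: ChatMessage[] };
  const { url, init } = buildChatRequest(process.env.PORTKEY_API_KEY ?? "", messages);
  const upstream = await fetch(url, init);
  if (!upstream.ok || !upstream.body) {
    // Surface gateway errors (bad key, unknown model, ...) to the client as-is.
    return new Response(await upstream.text(), { status: upstream.status });
  }
  const text = upstream.body
    .pipeThrough(new TextDecoderStream())
    .pipeThrough(sseToText())
    .pipeThrough(new TextEncoderStream());
  return new Response(text, { headers: { "Content-Type": "text/plain; charset=utf-8" } });
}
```

The TransformStream buffers partial lines because one network chunk can end in the middle of an SSE event; keeping the line parser pure makes it testable without a network.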

4. Switch from OpenAI to Anthropic

Portkey is powered by an open-source, universal AI Gateway that lets you route to 1,600+ LLMs using the familiar OpenAI spec. Here's how to switch from GPT-4 to Claude 3 Opus by updating 2 lines of code, without breaking anything else:

- Add your Anthropic credentials in Model Catalog → Add Provider (name it, e.g., anthropic-prod)

- Update the model to use the new provider slug

- Update the model name in your /chat/completions call

- Add a maxTokens field inside the streamText invocation (Anthropic requires this field)
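To make the changes concrete, here is a sketch of the request body before and after the switch, written in the raw OpenAI format the gateway accepts. It assumes the @anthropic-prod slug, the "@slug/model" model-string format, and that the AI SDK's maxTokens option is sent on the wire as max_tokens; 1024 is an arbitrary example limit.

```typescript
// Sketch: only the model string and the token limit change between providers.
type ChatMessage = { role: "system" | "user" | "assistant"; content: string };

interface ChatBody {
  model: string; // "@<provider slug>/<model name>" (assumed format)
  messages: ChatMessage[];
  stream: boolean;
  max_tokens?: number; // assumed wire name for the AI SDK's maxTokens option
}

const messages: ChatMessage[] = [{ role: "user", content: "Summarize our refund policy." }];

// Before: routed to OpenAI through the @openai-prod provider.
const openaiBody: ChatBody = { model: "@openai-prod/gpt-4", messages, stream: true };

// After: same messages and stream flag, new provider slug and model name,
// plus the token limit Anthropic requires.
const anthropicBody: ChatBody = {
  ...openaiBody,
  model: "@anthropic-prod/claude-3-opus-20240229",
  max_tokens: 1024,
};
```

Everything else in the handler, including the gateway URL and the streaming code, stays the same.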

5. Switch to Gemini 1.5

Similarly, add your Google AI Studio API key in Model Catalog → Add Provider (name it, e.g., google-prod) and call Gemini the same way.
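Because every provider sits behind the same gateway, switching to Gemini is another model-string change. A hypothetical lookup table, assuming the example slugs from this guide and example model IDs (gemini-1.5-pro in particular may need updating):

```typescript
// Hypothetical table: the slugs are this guide's example names; model IDs are examples.
const MODELS = {
  gpt4: "@openai-prod/gpt-4",
  claudeOpus: "@anthropic-prod/claude-3-opus-20240229",
  gemini15Pro: "@google-prod/gemini-1.5-pro",
} as const;

type ModelKey = keyof typeof MODELS;

function modelFor(key: ModelKey): string {
  return MODELS[key];
}

// Split "@google-prod/gemini-1.5-pro" into slug and model name, e.g. for logging.
function parseModel(model: string): { provider: string; name: string } {
  const slash = model.indexOf("/");
  if (!model.startsWith("@") || slash < 0) {
    throw new Error(`Not a "@slug/model" string: ${model}`);
  }
  return { provider: model.slice(0, slash), name: model.slice(slash + 1) };
}
```

Keeping the strings in one table means a provider switch touches a single line rather than every call site.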

6. Wire up the UI

Let’s create a Client component with a form that collects the prompt from the user and streams back the completion. By default, the useChat hook uses the POST Route Handler we created earlier (/api/chat). You can override this default by passing an api prop to useChat({ api: '...' }).

app/page.tsx
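useChat handles the request and the streaming for you. As a rough, framework-free stand-in for that round trip (not the AI SDK's implementation), the sketch below posts the message history to /api/chat and accumulates the streamed reply. It assumes the route streams plain text; sendChat and its fetchImpl parameter are hypothetical, the latter only to make the function testable without a server.

```typescript
// Framework-free stand-in for the round trip useChat performs (not the AI SDK's code).
type ChatMessage = { role: "user" | "assistant"; content: string };

async function sendChat(
  messages: ChatMessage[],
  onToken: (text: string) => void,
  fetchImpl: typeof fetch = fetch,
  api = "/api/chat", // mirrors useChat's default endpoint
): Promise<string> {
  const res = await fetchImpl(api, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ messages }),
  });
  if (!res.ok || !res.body) throw new Error(`Chat request failed with status ${res.status}`);

  const reader = res.body.getReader();
  const decoder = new TextDecoder();
  let reply = "";
  for (;;) {
    const { done, value } = await reader.read();
    if (done) break;
    // stream: true keeps multi-byte characters split across chunks intact.
    const text = decoder.decode(value, { stream: true });
    if (text) {
      reply += text;
      onToken(text); // e.g. append to the assistant message being rendered
    }
  }
  const tail = decoder.decode(); // flush any buffered bytes
  if (tail) {
    reply += tail;
    onToken(tail);
  }
  return reply;
}
```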

7. Log the Requests

Portkey logs all the requests you send to help you debug errors and get request-level and aggregate insights on costs, latency, errors, and more. You can enrich the logs by tracing specific requests and by passing custom metadata or user feedback.

To add custom metadata, pass {"key":"value"} pairs inside the metadata header. Portkey segments requests based on this metadata to give you granular insights.

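As a sketch, assuming Portkey reads trace IDs from an x-portkey-trace-id header and metadata from an x-portkey-metadata header holding a JSON string (verify both names against Portkey's docs), a hypothetical helper could build the headers for each gateway call:

```typescript
// Hypothetical helper; the header names are assumptions to verify against Portkey's docs.
type PortkeyHeaderOptions = {
  apiKey: string;
  traceId?: string; // groups related calls (e.g. one chat turn) under one trace
  metadata?: Record<string, string>; // custom {"key":"value"} pairs for segmenting logs
};

function portkeyHeaders(opts: PortkeyHeaderOptions): Record<string, string> {
  const headers: Record<string, string> = {
    "Content-Type": "application/json",
    "x-portkey-api-key": opts.apiKey,
  };
  if (opts.traceId) headers["x-portkey-trace-id"] = opts.traceId;
  // Metadata travels as a single JSON-encoded header value.
  if (opts.metadata) headers["x-portkey-metadata"] = JSON.stringify(opts.metadata);
  return headers;
}
```

For example, portkeyHeaders({ apiKey, traceId: "chat-42", metadata: { env: "production", feature: "chat" } }) would let you filter the logs by environment and feature.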
Guide: Handle OpenAI Failures

1. Solve 5xx, 4xx Errors

Portkey can automatically trigger a call to another LLM or provider when your primary one fails. You define this fallback logic with Portkey's Gateway Config; for example, a Gateway Config can set up a fallback from OpenAI to Anthropic.
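A sketch of such a fallback config as a typed TypeScript object, assuming the strategy.mode, targets[].provider, and targets[].override_params fields and the example provider slugs from this guide. The servingTarget function is only a local illustration of the ordering semantics; the gateway performs the actual fallback server-side.

```typescript
// Sketch of a fallback Gateway Config; the field names are assumptions.
type Target = { provider: string; override_params?: Record<string, unknown> };
type FallbackConfig = { strategy: { mode: "fallback" }; targets: Target[] };

const fallbackConfig: FallbackConfig = {
  strategy: { mode: "fallback" },
  targets: [
    { provider: "@openai-prod", override_params: { model: "gpt-4" } },
    // Anthropic needs a token limit, so it is set on the fallback target.
    { provider: "@anthropic-prod", override_params: { model: "claude-3-opus-20240229", max_tokens: 1024 } },
  ],
};

// Local illustration only: targets are tried in order, and the first one
// that does not fail serves the request. Returns null if every target fails.
function servingTarget(config: FallbackConfig, failing: Set<string>): string | null {
  for (const t of config.targets) {
    if (!failing.has(t.provider)) return t.provider;
  }
  return null;
}
```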

2. Apply Config to the Route Handler
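A minimal sketch, assuming the route handler sends the config in an x-portkey-config header, either as inline JSON or as the ID of a config saved in the Portkey dashboard. withGatewayConfig and the example config ID pc-example are hypothetical:

```typescript
// Hypothetical helper: attach a Gateway Config to the headers of a gateway call.
type HeaderMap = Record<string, string>;

function withGatewayConfig(headers: HeaderMap, config: object | string): HeaderMap {
  // A string is treated as a saved config ID; an object is sent as inline JSON.
  const value = typeof config === "string" ? config : JSON.stringify(config);
  return { ...headers, "x-portkey-config": value }; // returns a copy, input untouched
}

const baseHeaders: HeaderMap = { "Content-Type": "application/json", "x-portkey-api-key": "pk-test" };

const inlineConfig = {
  strategy: { mode: "fallback" },
  targets: [{ provider: "@openai-prod" }, { provider: "@anthropic-prod" }],
};

const withInline = withGatewayConfig(baseHeaders, inlineConfig);
const withSaved = withGatewayConfig(baseHeaders, "pc-example"); // placeholder ID
```

A saved config ID keeps routing rules out of your code, so you can change fallbacks from the dashboard without redeploying.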

3. Handle Rate Limit Errors

You can load balance your requests across multiple LLMs or accounts so that no single account hits its rate limit. For example, a load-balancing Gateway Config can route your requests between one OpenAI account and two Azure OpenAI accounts.
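A sketch of that load-balancing config, assuming strategy.mode "loadbalance" and a relative weight per target; the Azure provider slugs are hypothetical. pickTarget is a local illustration of weighted routing, not Portkey's implementation.

```typescript
// Sketch of a load-balancing Gateway Config: one OpenAI and two Azure OpenAI accounts.
type WeightedTarget = { provider: string; weight: number };
type LoadbalanceConfig = { strategy: { mode: "loadbalance" }; targets: WeightedTarget[] };

const loadbalanceConfig: LoadbalanceConfig = {
  strategy: { mode: "loadbalance" },
  targets: [
    { provider: "@openai-prod", weight: 1 },
    { provider: "@azure-east", weight: 1 }, // hypothetical slug
    { provider: "@azure-west", weight: 1 }, // hypothetical slug
  ],
};

// Local illustration: map a random number r in [0, 1) onto the targets
// in proportion to their weights.
function pickTarget(config: LoadbalanceConfig, r: number): string {
  const total = config.targets.reduce((sum, t) => sum + t.weight, 0);
  let x = r * total;
  for (const t of config.targets) {
    x -= t.weight;
    if (x < 0) return t.provider;
  }
  // Guards against floating-point rounding when r is very close to 1.
  return config.targets[config.targets.length - 1].provider;
}
```

With equal weights, each account receives roughly a third of the traffic, which keeps any single account further from its rate limit.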

Guide: Cache Semantically Similar Requests

Portkey can save LLM costs and reduce latencies by 20x by storing responses for semantically similar queries and serving them from cache. For Q&A use cases, cache hit rates can go as high as 50%. To enable semantic caching, set the cache mode to semantic in your Gateway Config.

You can also set the max-age of the cache and force a cache refresh. See the docs for more information.
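As a sketch, assuming the Gateway Config takes a cache block with mode ("simple" or "semantic") and max_age in seconds, and that a per-request x-portkey-cache-force-refresh header bypasses the cached answer (verify these names against Portkey's docs), a hypothetical helper could add caching to an existing config:

```typescript
// Sketch: add semantic caching to an existing Gateway Config object.
type CacheConfig = { mode: "simple" | "semantic"; max_age?: number };

function withSemanticCache<T extends object>(config: T, maxAgeSeconds?: number): T & { cache: CacheConfig } {
  const cache: CacheConfig = { mode: "semantic" };
  if (maxAgeSeconds !== undefined) cache.max_age = maxAgeSeconds; // how long entries stay valid
  return { ...config, cache };
}

// Assumed header name: send it on a request to skip the cache and store a fresh response.
const FORCE_REFRESH_HEADER: Record<string, string> = { "x-portkey-cache-force-refresh": "true" };

const cachedConfig = withSemanticCache(
  { strategy: { mode: "fallback" }, targets: [{ provider: "@openai-prod" }, { provider: "@anthropic-prod" }] },
  3600, // one hour
);
```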

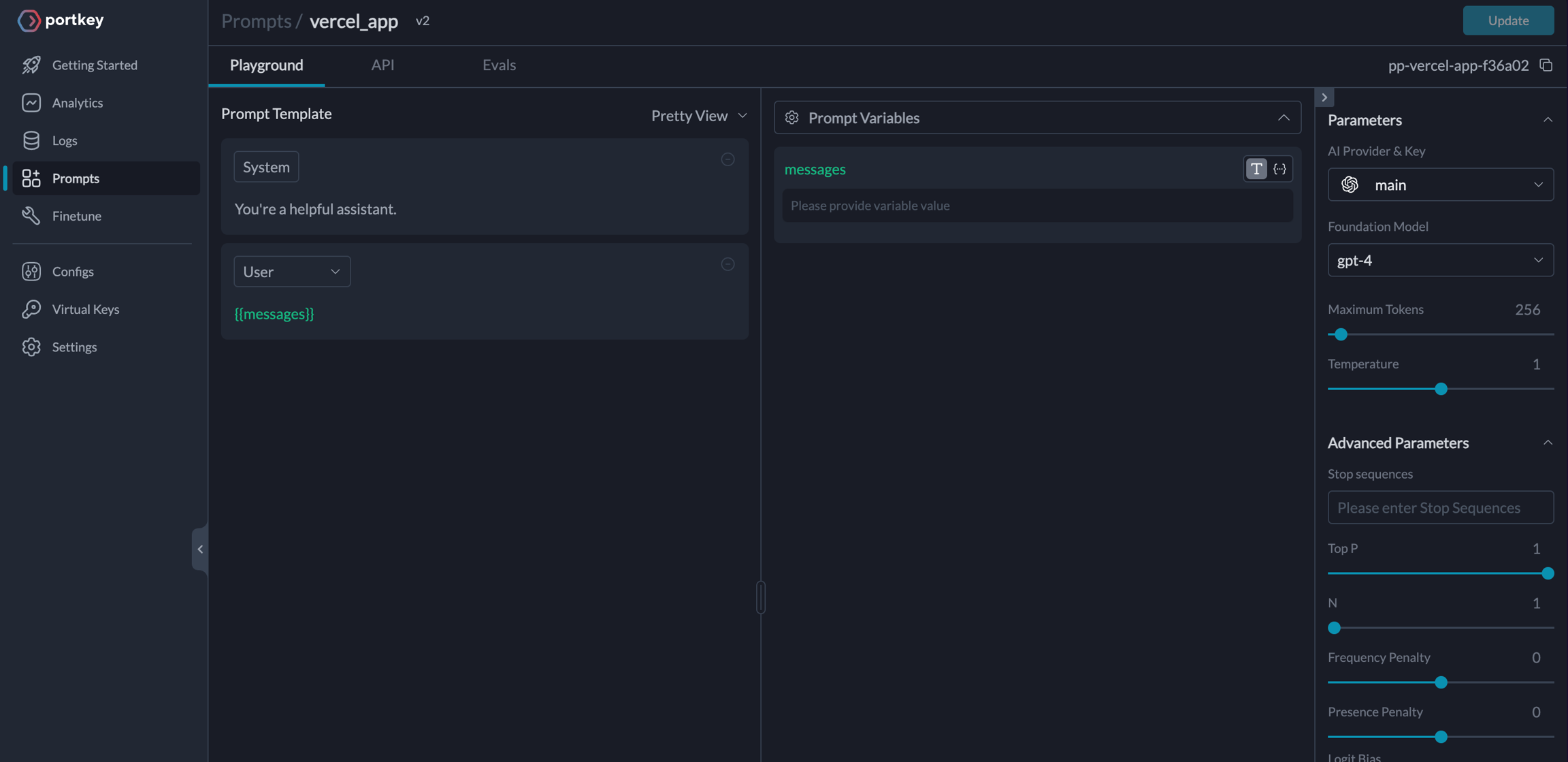

Guide: Manage Prompts Separately

Storing prompt templates and instructions in code is messy. With Portkey, you can create and manage all of your app’s prompts in a single place and call the prompts API directly to get responses. See the Prompts docs for more on what Prompts on Portkey can do.

To create a Prompt Template:

- From the Dashboard, open Prompts

- On the Prompts page, click Create

- Add your instructions and variables, adjust the model parameters if needed, and click Save

Trigger the Prompt in the Route Handler
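A minimal sketch of calling a saved Prompt Template from the route handler, assuming a POST /prompts/{promptId}/completions endpoint on the gateway that takes variables and stream in the body, plus the x-portkey-api-key header. The prompt ID YOUR_PROMPT_ID and the template variable user_input are placeholders; the handler forwards the gateway's response unchanged.

```typescript
// Sketch; the endpoint path, body fields, and header name are assumptions.
const GATEWAY_URL = "https://api.portkey.ai/v1";

function buildPromptRequest(
  apiKey: string,
  promptId: string,
  variables: Record<string, string>,
  stream = true,
) {
  return {
    url: `${GATEWAY_URL}/prompts/${encodeURIComponent(promptId)}/completions`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json", "x-portkey-api-key": apiKey },
      body: JSON.stringify({ variables, stream }),
    },
  };
}

export async function POST(req: Request): Promise<Response> {
  const { messages } = (await req.json()) as { messages: { role: string; content: string }[] };
  // Hypothetical template variable: the latest user message fills {{user_input}}.
  const latest = messages[messages.length - 1]?.content ?? "";
  const { url, init } = buildPromptRequest(process.env.PORTKEY_API_KEY ?? "", "YOUR_PROMPT_ID", {
    user_input: latest,
  });
  const upstream = await fetch(url, init);
  return new Response(upstream.body, {
    status: upstream.status,
    headers: { "Content-Type": upstream.headers.get("Content-Type") ?? "text/event-stream" },
  });
}
```

Since the instructions and model parameters live in the saved template, the handler only supplies variables, so prompt edits in the dashboard need no code change.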
