With Portkey, you can take advantage of features like fast AI gateway access, observability, prompt management, and more, all while ensuring the secure management of your LLM API keys through Model Catalog.

Portkey SDK Integration with Azure OpenAI

Portkey provides a consistent API to interact with models from various providers. To integrate Azure OpenAI with Portkey:

Creating Your Azure OpenAI Integration

This integration is for all OpenAI models deployed on either Azure OpenAI or Azure AI Foundry.

Prerequisites

Before creating your integration, you’ll need:

- An active Azure account

- Access to your Azure portal

- A model deployment on Azure (e.g., GPT-4, GPT-4o-mini)

Step 1: Start Creating Your Integration

Navigate to the Integrations page in your Portkey dashboard and select Azure OpenAI as your provider.

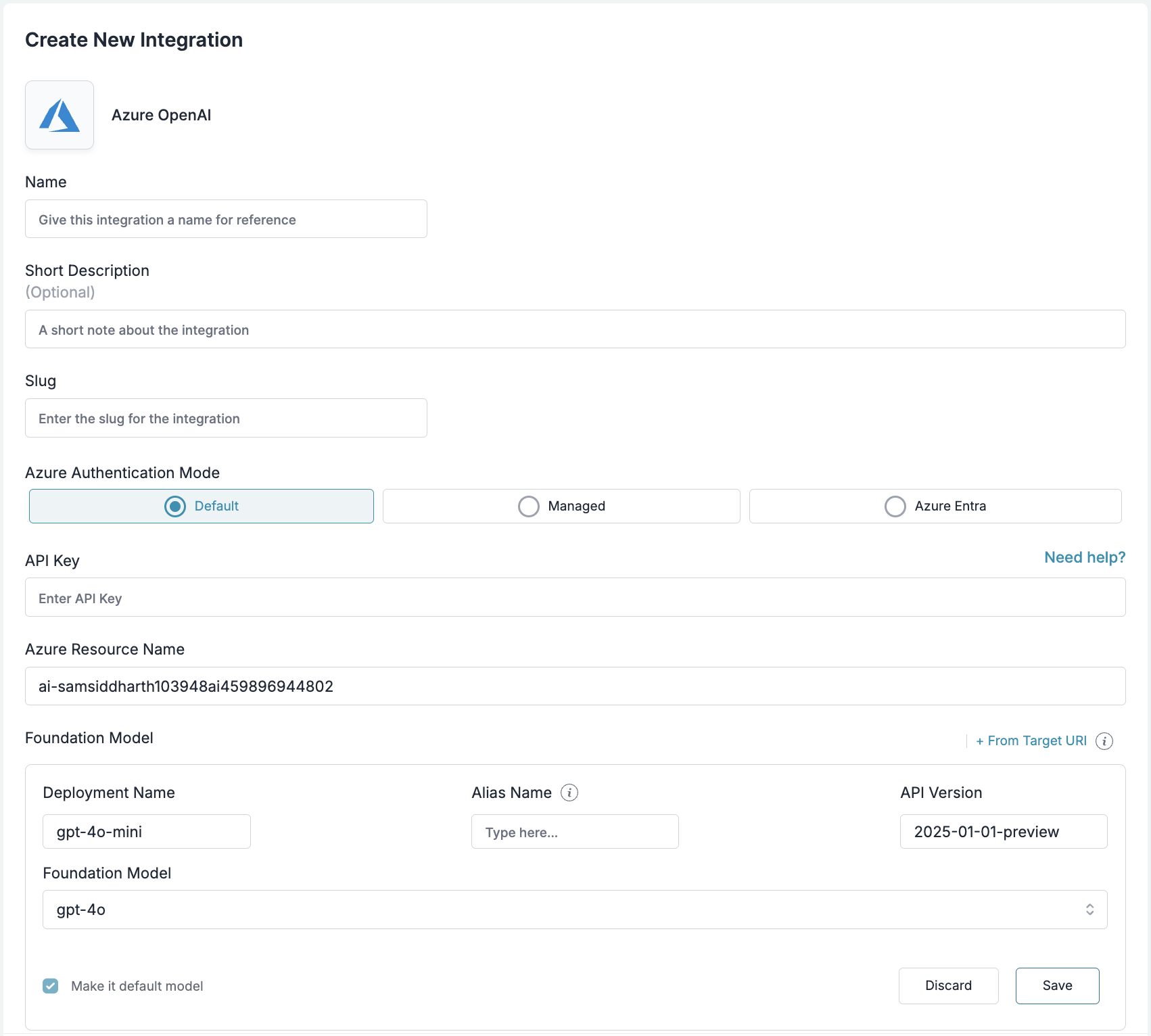

Step 2: Configure Integration Details

Fill in the basic information for your integration:

- Name: A descriptive name for this integration (e.g., “Azure OpenAI Production”)

- Short Description: Optional context about this integration’s purpose

- Slug: A unique identifier used in API calls (e.g., “azure-openai-prod”)

Step 3: Set Up Authentication

Portkey supports three authentication methods for Azure OpenAI. For most use cases, we recommend using the Default (API Key) method.

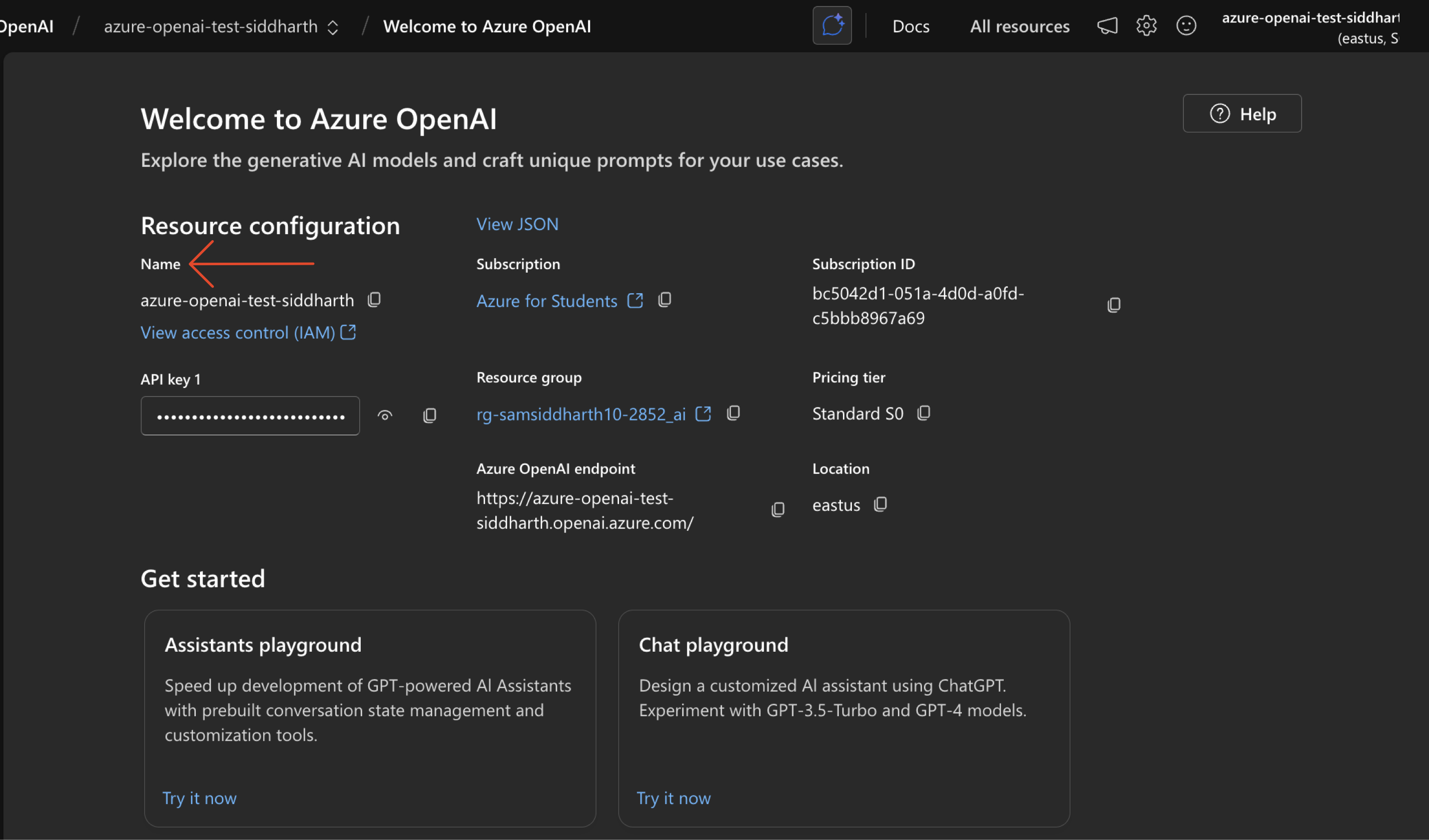

Gather Your Azure Credentials

From your Azure portal, you’ll need to collect:

Enter Credentials in Portkey

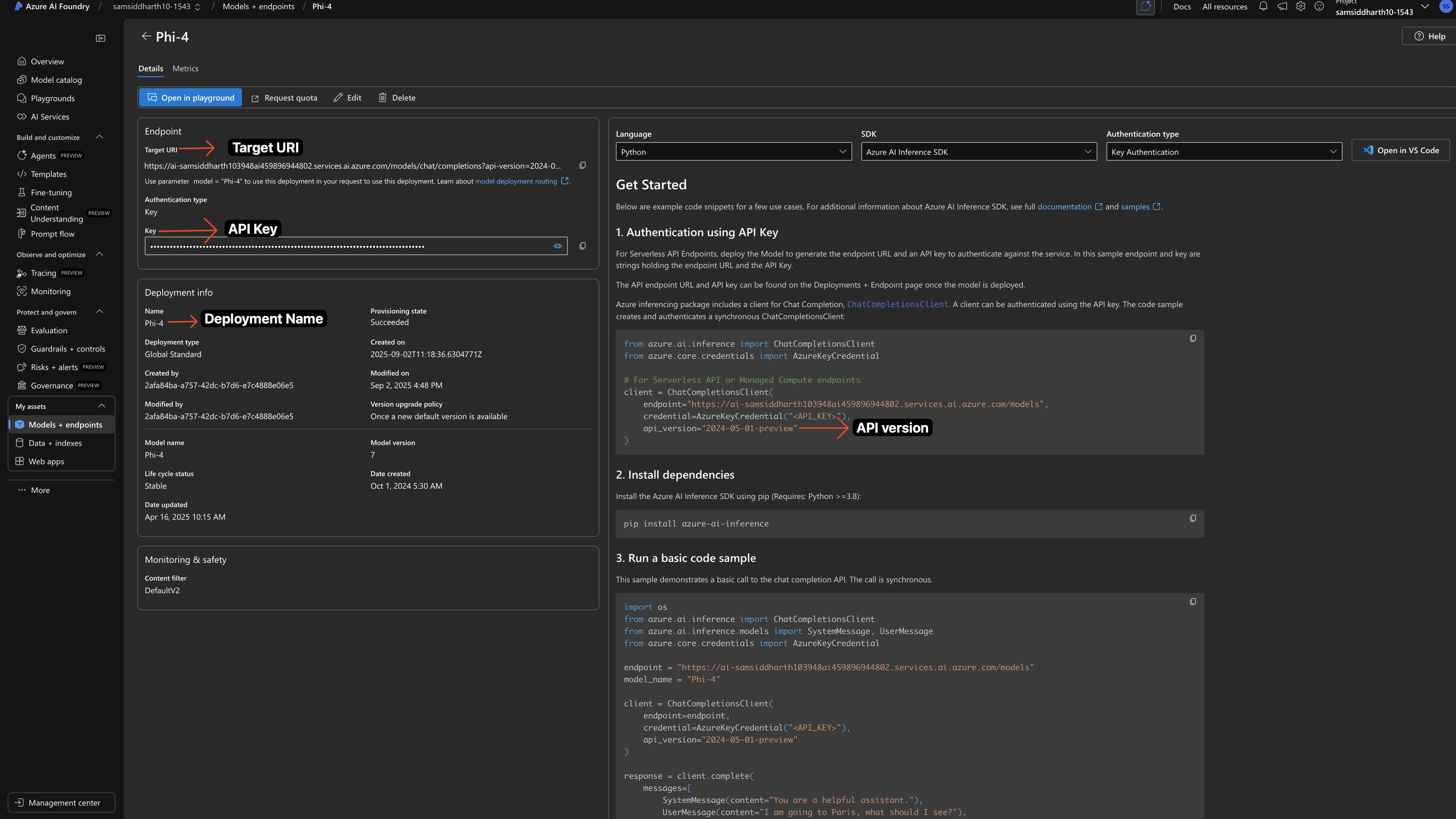

- Navigate to your model deployment in Azure

- Click on the deployment to view details

- Copy the API Key from the authentication section

We recommend importing your Azure details (resource name, deployment details, API version) directly from your Target URI. Simply copy the target URL and import it.
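To make clear which fields a Target URI carries, here is a minimal sketch that splits one into resource name, deployment name, and API version. It assumes the common Azure OpenAI URL shape (resource name as the first hostname label, deployment name after a `deployments` path segment, version in the `api-version` query parameter). The function name and the sample URI are hypothetical, and Portkey's own import logic may differ.

```python
from urllib.parse import parse_qs, urlsplit


def parse_target_uri(target_uri: str) -> dict:
    """Split an Azure OpenAI Target URI into the fields Portkey asks for."""
    parts = urlsplit(target_uri)

    # Assumption: the resource name is the first label of the hostname.
    resource_name = parts.hostname.split(".")[0]

    # Assumption: the deployment name follows the "deployments" path segment.
    segments = [segment for segment in parts.path.split("/") if segment]
    deployment = None
    if "deployments" in segments:
        position = segments.index("deployments")
        if position + 1 < len(segments):
            deployment = segments[position + 1]

    # The API version travels as the "api-version" query parameter.
    api_version = parse_qs(parts.query).get("api-version", [None])[0]

    return {
        "resource_name": resource_name,
        "deployment": deployment,
        "api_version": api_version,
    }


# Hypothetical Target URI, for illustration only.
details = parse_target_uri(
    "https://my-resource.openai.azure.com/openai/deployments/my-gpt4o"
    "/chat/completions?api-version=2024-02-01"
)
print(details)
```

Deployments created in Azure AI Foundry may use a different hostname suffix, so treat the parsed resource name as a starting point and confirm it in the portal.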

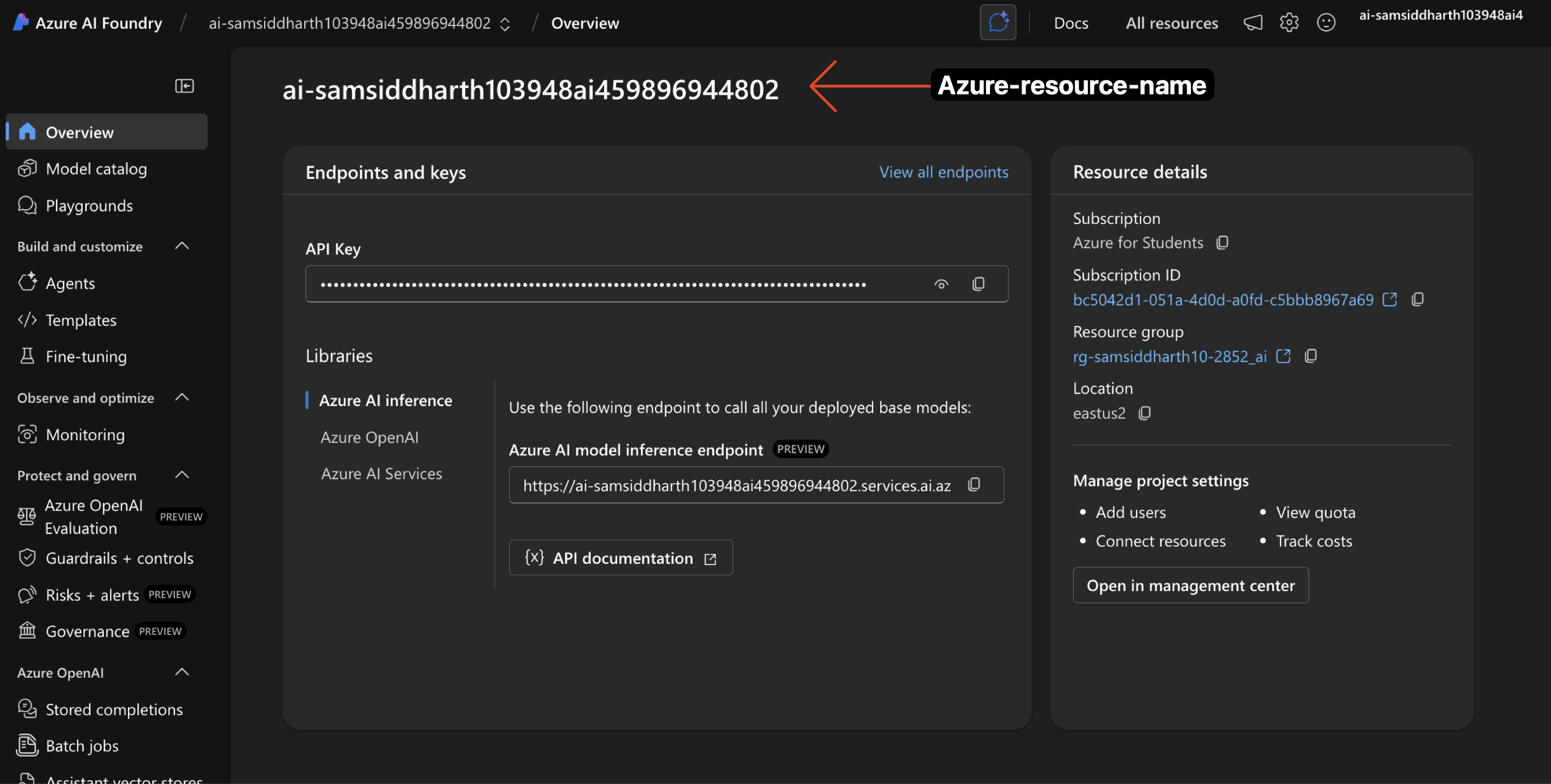

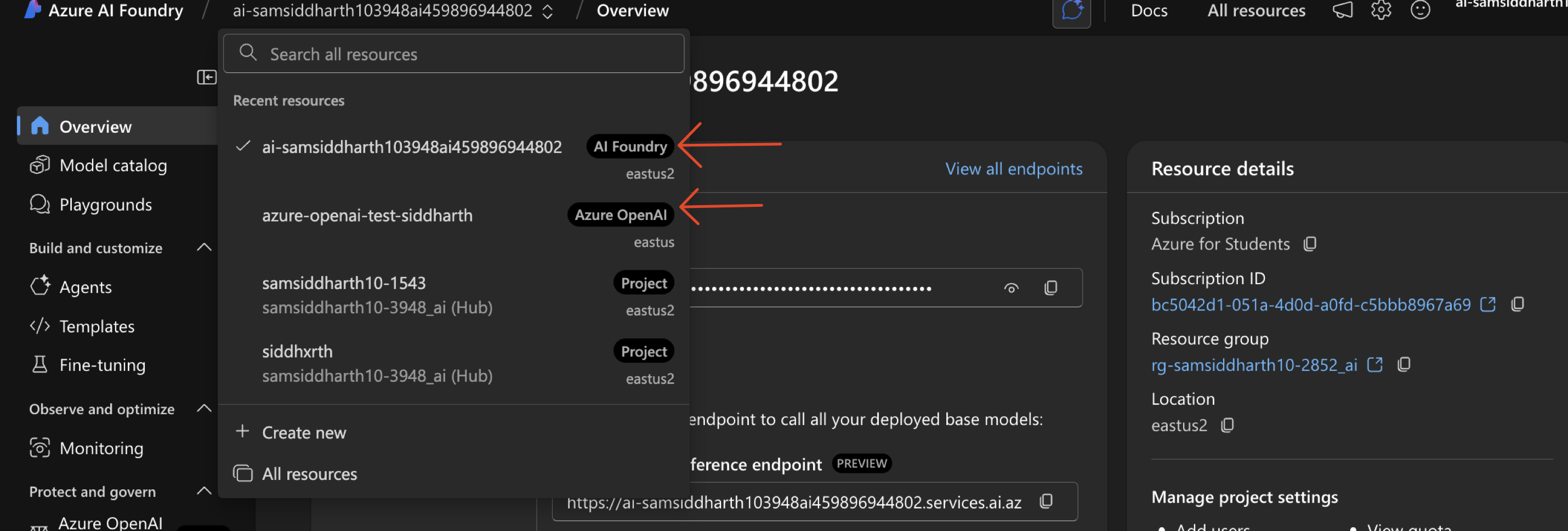

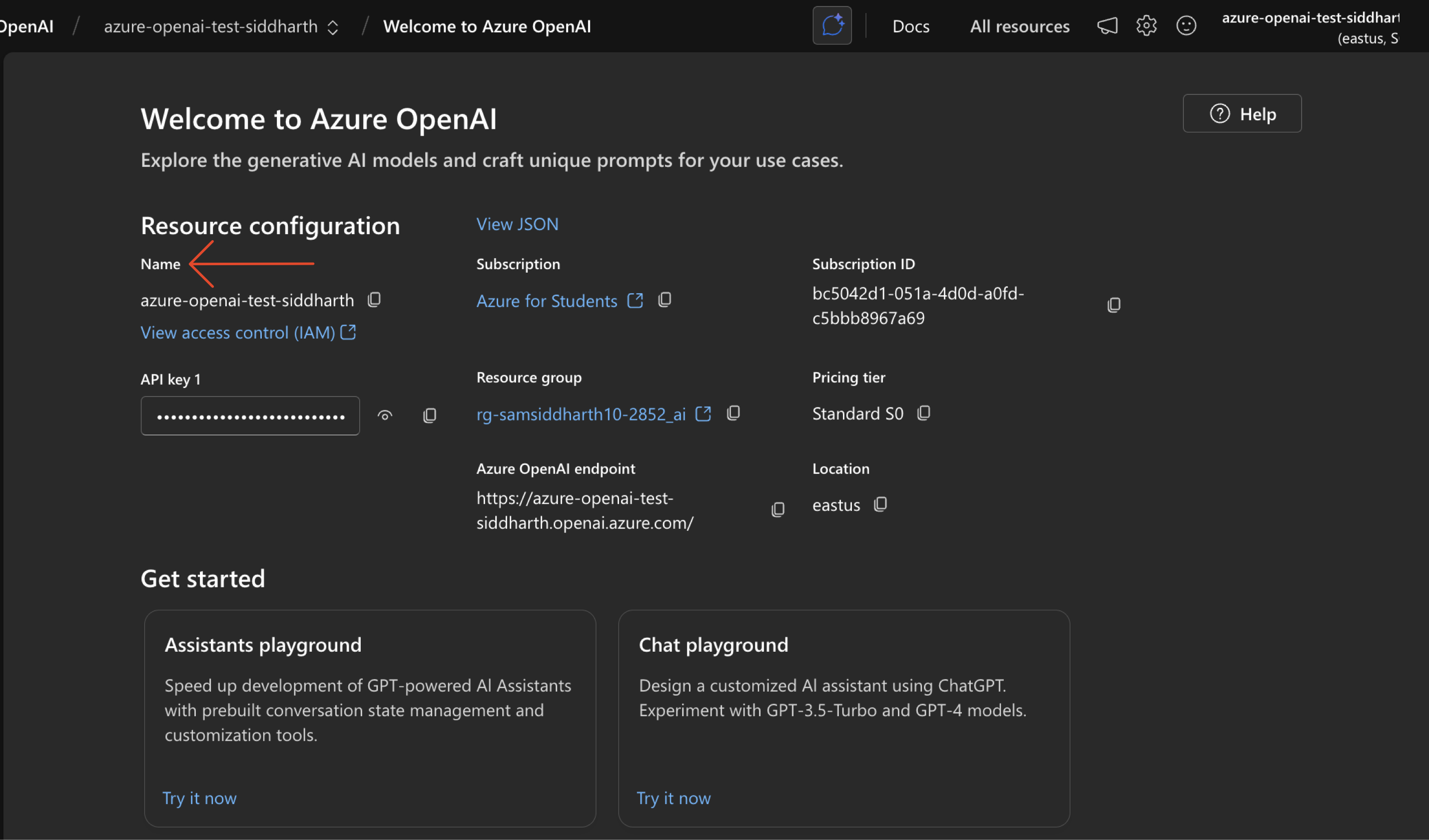

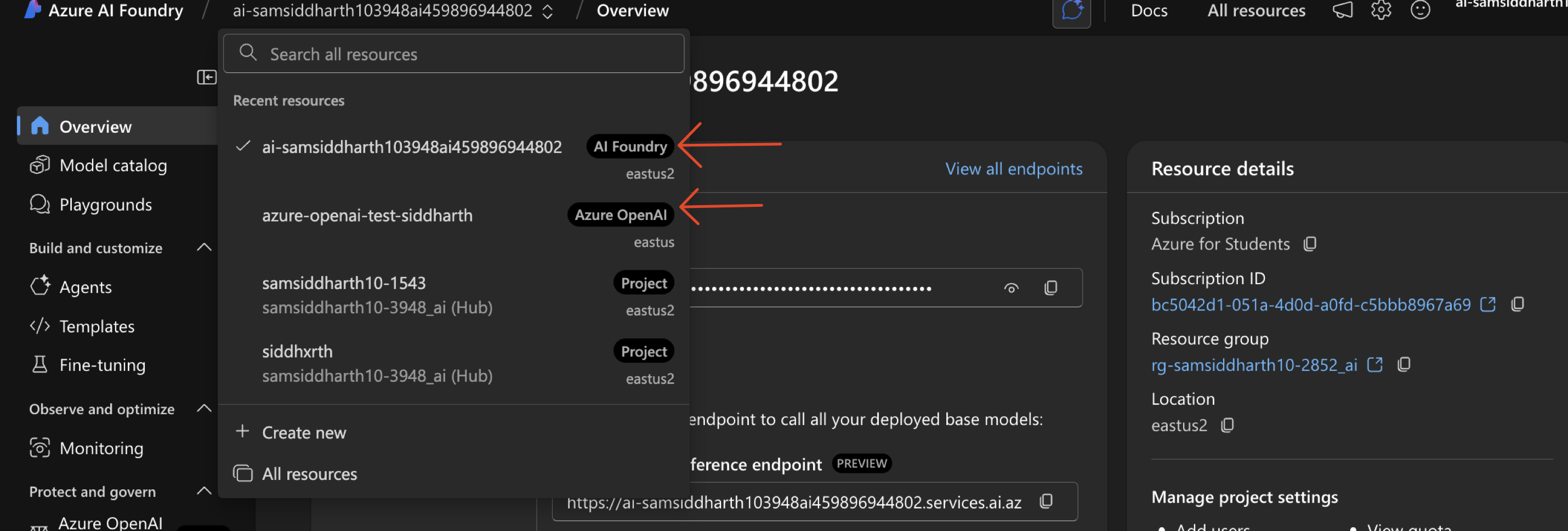

- Azure Resource Name: Get your resource name from Azure

Find Your Azure Resource Name

Your Azure resource name is different from your project name. Here’s how you can find it:

- Azure AI Foundry

- Azure OpenAI

- Note the API Version and enter it in the given field

- Alias Name: A Portkey-specific field for accessing the model; name it as you prefer

- Foundation Model: Select a foundation model from the list that matches your deployment. This helps Portkey track costs and metrics. If your model isn’t listed, choose a similar model type to begin with.

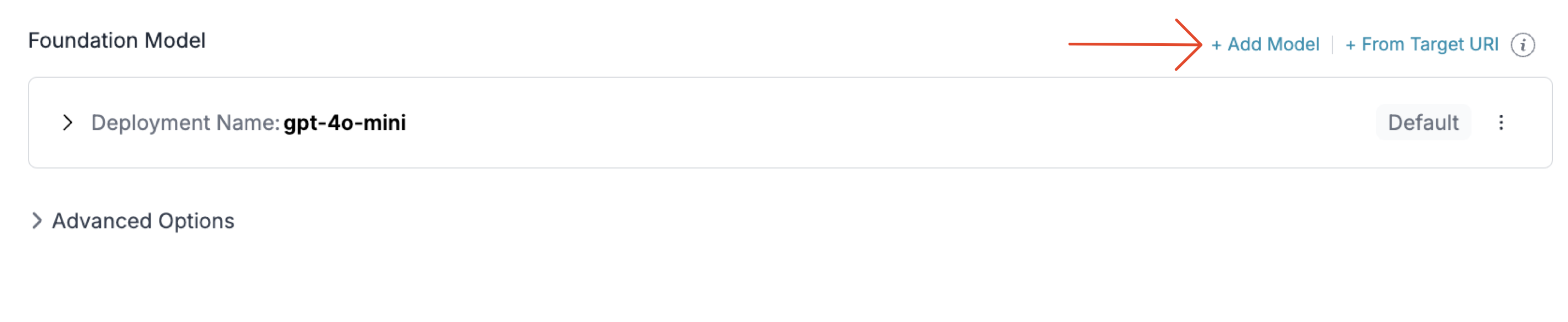

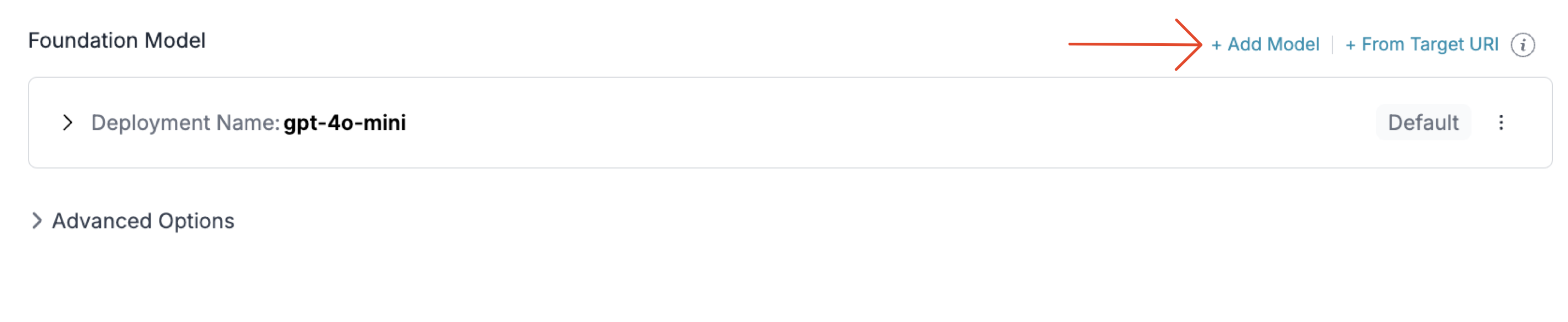

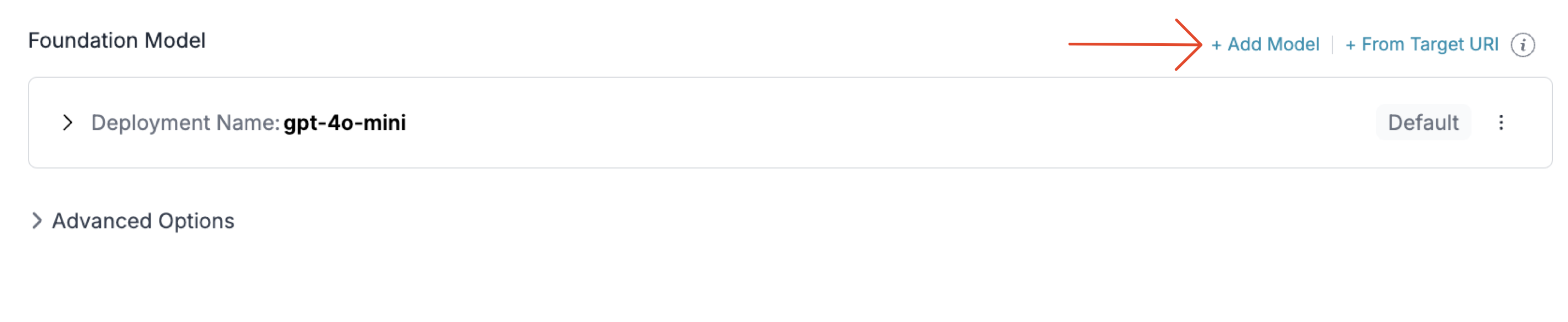

Adding Multiple Models to Your Azure OpenAI Integration

You can deploy multiple models through a single Azure OpenAI integration by adding multiple deployments under the same integration.

1. Install the Portkey SDK

Add the Portkey SDK to your application to interact with Azure OpenAI’s API through Portkey’s gateway.

- NodeJS: npm install --save portkey-ai

- Python: pip install portkey-ai

2. Initialize Portkey with the Azure Integration

Set up Portkey with your Azure Integration as part of the initialization configuration. You can create a provider for Azure in the Portkey UI.

- NodeJS SDK

- Python SDK

3. Invoke Chat Completions with Azure OpenAI

Use the Portkey instance to send requests to your Azure deployments. You can also override the provider slug directly in the API call if needed.

- NodeJS SDK

- Python SDK
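As a rough illustration of a chat completion call, the sketch below builds the equivalent HTTP request using only the Python standard library, so it does not depend on a particular SDK version. The gateway URL, the `x-portkey-api-key` header, and the `@provider-slug/model-alias` model format are assumptions based on Portkey's OpenAI-compatible gateway. The slug `azure-openai-prod` reuses the example from Step 2, and `my-gpt4o` is a hypothetical alias.

```python
import json
import urllib.request

# Assumption: Portkey's hosted gateway exposes an OpenAI-compatible endpoint here.
PORTKEY_CHAT_URL = "https://api.portkey.ai/v1/chat/completions"


def build_chat_request(
    portkey_api_key: str,
    provider_slug: str,
    model_alias: str,
    user_message: str,
) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for an Azure deployment."""
    body = {
        # Assumption: "@<integration slug>/<alias name>" selects the deployment.
        "model": f"@{provider_slug}/{model_alias}",
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        PORTKEY_CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-portkey-api-key": portkey_api_key,
        },
        method="POST",
    )


chat_request = build_chat_request(
    "PORTKEY_API_KEY", "azure-openai-prod", "my-gpt4o", "Say this is a test"
)
```

Passing `chat_request` to `urllib.request.urlopen` would send it. With real credentials the response body should follow the OpenAI chat completion format.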

Managing Azure OpenAI Prompts

You can manage all prompts to Azure OpenAI in the Prompt Library. All current OpenAI models are supported, so you can start testing different prompts right away. Once your prompt is ready, you can use the portkey.prompts.completions.create interface to run it from your application.

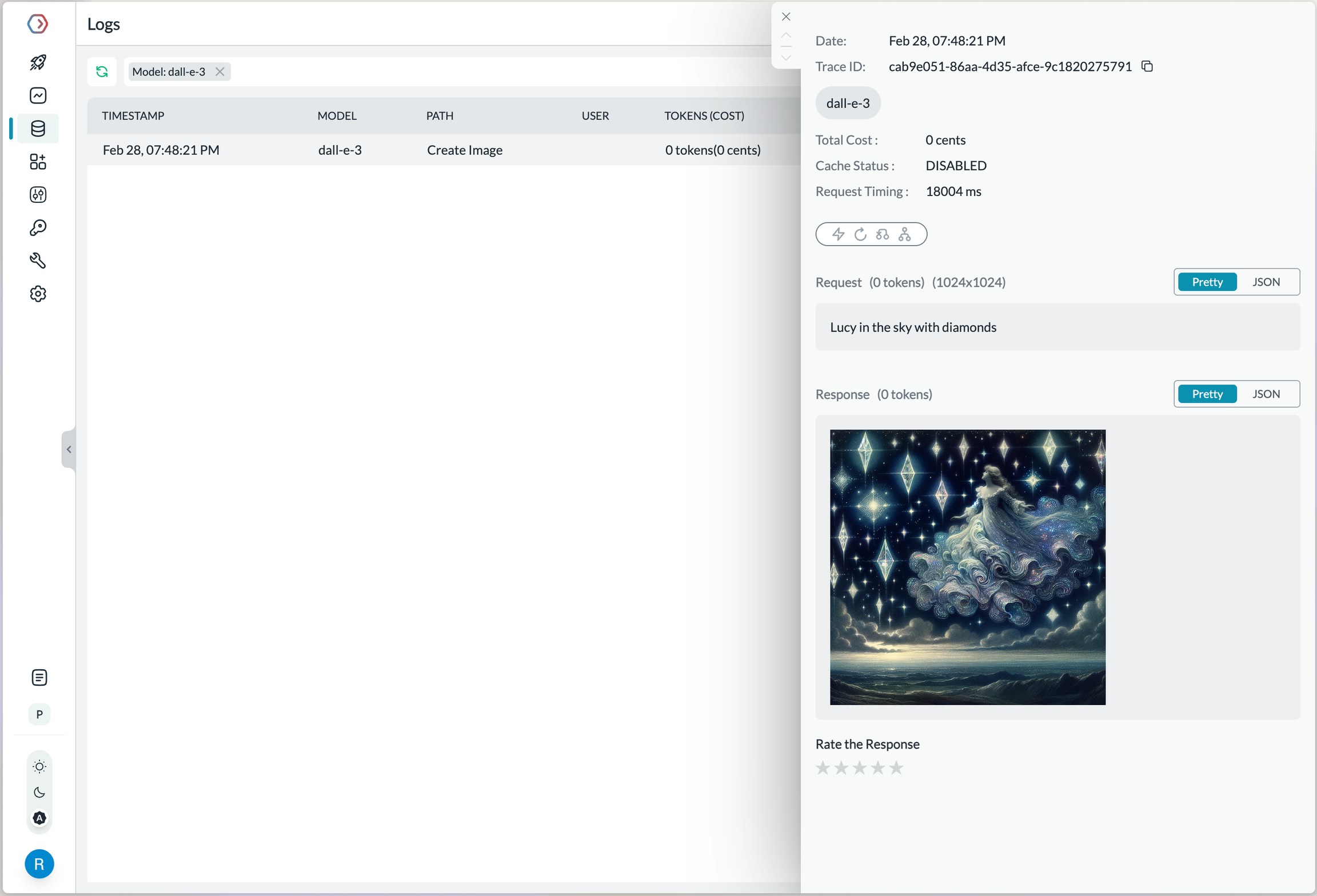
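A saved prompt is typically run by sending its ID together with values for its template variables. The sketch below builds such a request with the standard library. The REST path `/v1/prompts/{prompt_id}/completions` is an assumption modeled on the `portkey.prompts.completions.create` method name, and the prompt ID and the `topic` variable are hypothetical placeholders.

```python
import json
import urllib.request


def build_prompt_completion_request(
    portkey_api_key: str,
    prompt_id: str,
    variables: dict,
) -> urllib.request.Request:
    """Build (but do not send) a request that runs a saved prompt template."""
    # Assumption: the REST path mirrors portkey.prompts.completions.create.
    url = f"https://api.portkey.ai/v1/prompts/{prompt_id}/completions"
    body = {"variables": variables}  # values for the template's variables
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-portkey-api-key": portkey_api_key,
        },
        method="POST",
    )


prompt_request = build_prompt_completion_request(
    "PORTKEY_API_KEY", "YOUR_PROMPT_ID", {"topic": "Azure OpenAI"}
)
```

Because the model and provider are stored with the prompt, the request body only needs the variables.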

Image Generation

Portkey supports multiple modalities for Azure OpenAI, and you can make image generation requests through Portkey’s AI Gateway the same way you make completion calls.

- Portkey NodeJS

- Portkey Python

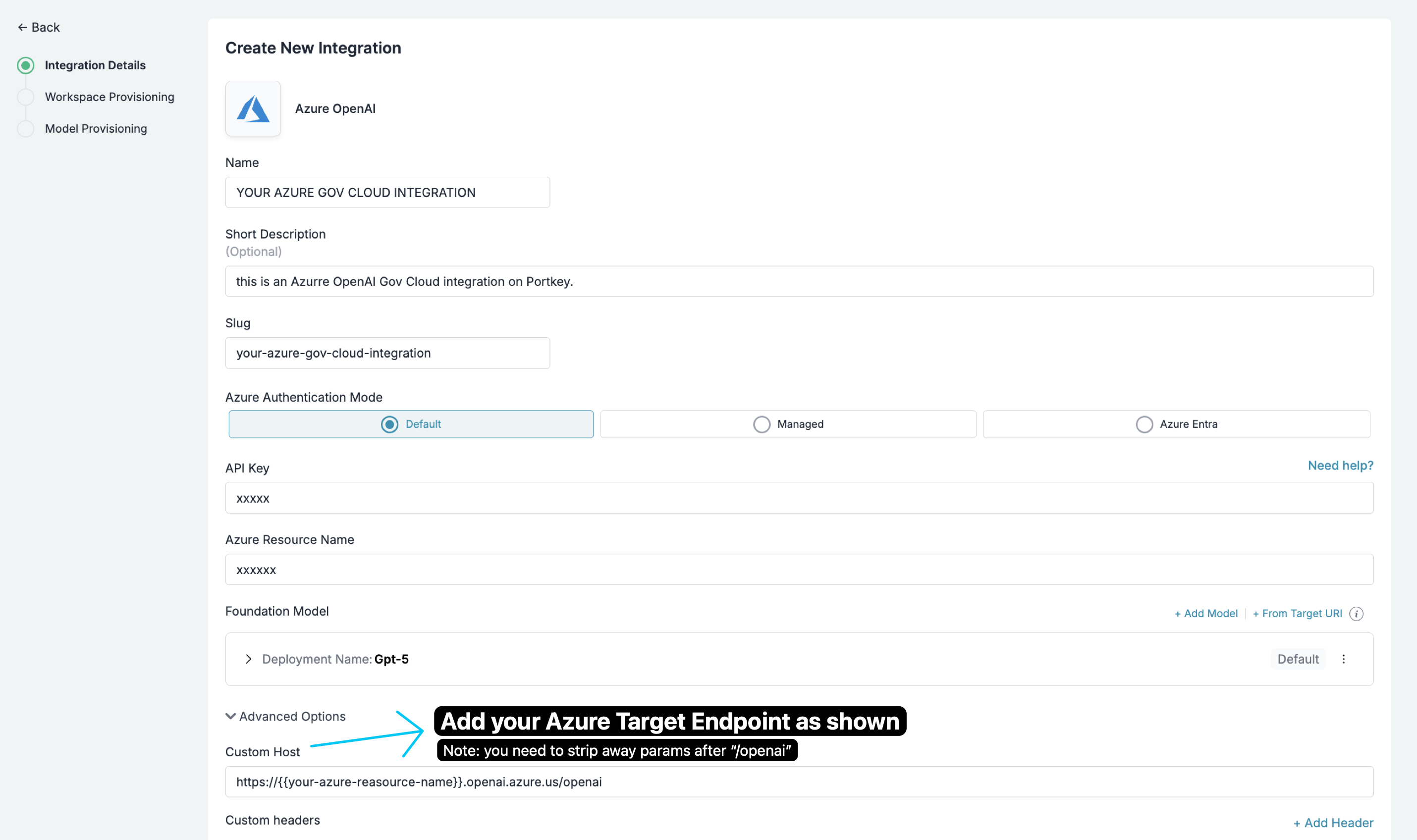
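An image generation request has the same shape as a completion call, with a prompt and image options in the body. This is a minimal sketch that assumes the gateway mirrors OpenAI's `/v1/images/generations` endpoint and the `@provider-slug/model-alias` model format. The alias `my-dalle3` is hypothetical.

```python
import json
import urllib.request

# Assumption: the gateway mirrors OpenAI's image generation endpoint.
PORTKEY_IMAGES_URL = "https://api.portkey.ai/v1/images/generations"


def build_image_request(
    portkey_api_key: str,
    provider_slug: str,
    model_alias: str,
    prompt: str,
    size: str = "1024x1024",
) -> urllib.request.Request:
    """Build (but do not send) an image generation request."""
    body = {
        "model": f"@{provider_slug}/{model_alias}",  # assumed slug/alias format
        "prompt": prompt,
        "size": size,
        "n": 1,  # number of images to generate
    }
    return urllib.request.Request(
        PORTKEY_IMAGES_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-portkey-api-key": portkey_api_key,
        },
        method="POST",
    )


image_request = build_image_request(
    "PORTKEY_API_KEY", "azure-openai-prod", "my-dalle3", "A lighthouse at dawn"
)
```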

Azure Government Cloud

Integration is identical to global Azure OpenAI. Set a Custom Host that points to Azure Government and ensure the path ends with /openai (remove any params after /openai).

Steps

- In Portkey, create or edit your Azure OpenAI provider.

- Open “Advanced Options”.

- Set “Custom Host” to your Azure Government endpoint. The path must end with the first /openai; remove anything after it, including query parameters.

- Save and use normally in SDKs and via the gateway.
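The trimming rule for the Custom Host can be sketched as a small helper that cuts a full endpoint URL at the first `/openai` path segment and drops the query string. The function name and the sample `.azure.us` URL are hypothetical, so substitute your own Azure Government endpoint.

```python
from urllib.parse import urlsplit, urlunsplit


def to_custom_host(endpoint_url: str) -> str:
    """Trim an Azure endpoint URL so its path ends at the first /openai segment."""
    parts = urlsplit(endpoint_url)
    segments = parts.path.split("/")
    if "openai" not in segments:
        raise ValueError("URL path has no /openai segment")

    # Keep everything up to and including the first "openai" segment.
    cut = segments.index("openai")
    trimmed_path = "/".join(segments[: cut + 1])

    # Drop the query string and fragment entirely.
    return urlunsplit((parts.scheme, parts.netloc, trimmed_path, "", ""))


# Hypothetical Azure Government endpoint, for illustration only.
custom_host = to_custom_host(
    "https://my-resource.openai.azure.us/openai/deployments/my-gpt4o"
    "/chat/completions?api-version=2024-02-01"
)
print(custom_host)
```

The helper matches whole path segments, so the `openai` that appears in the hostname does not affect where the path is cut.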

Making Requests Without Model Catalog

Here’s how you can pass your Azure OpenAI details and secrets directly, without using the Model Catalog feature.

Key Mapping

The parameters of a typical Azure OpenAI request map to Portkey as follows:

| Parameter | Node SDK | Python SDK | REST Headers |
|---|---|---|---|
| AZURE RESOURCE NAME | azureResourceName | azure_resource_name | x-portkey-azure-resource-name |
| AZURE DEPLOYMENT NAME | azureDeploymentId | azure_deployment_id | x-portkey-azure-deployment-id |
| API VERSION | azureApiVersion | azure_api_version | x-portkey-azure-api-version |
| AZURE API KEY | Authorization: "Bearer API_KEY" | Authorization = "Bearer API_KEY" | Authorization |
| AZURE MODEL NAME | azureModelName | azure_model_name | x-portkey-azure-model-name |

Example

- Node

- Python

- cURL
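Based on the REST Headers column of the key mapping, a direct request can carry the Azure details as headers. The sketch below assembles that header set. The `x-portkey-api-key` header and the `x-portkey-provider: azure-openai` value are assumptions not shown in the table, and every argument value is a placeholder.

```python
def azure_direct_headers(
    portkey_api_key: str,
    resource_name: str,
    deployment_id: str,
    api_version: str,
    model_name: str,
    azure_api_key: str,
) -> dict:
    """Assemble REST headers that pass Azure OpenAI details directly to Portkey."""
    return {
        "Content-Type": "application/json",
        # Assumptions: these two headers identify the Portkey account and provider.
        "x-portkey-api-key": portkey_api_key,
        "x-portkey-provider": "azure-openai",
        # Header names below come from the key mapping table.
        "x-portkey-azure-resource-name": resource_name,
        "x-portkey-azure-deployment-id": deployment_id,
        "x-portkey-azure-api-version": api_version,
        "x-portkey-azure-model-name": model_name,
        "Authorization": f"Bearer {azure_api_key}",
    }


direct_headers = azure_direct_headers(
    portkey_api_key="PORTKEY_API_KEY",
    resource_name="AZURE_RESOURCE_NAME",
    deployment_id="AZURE_DEPLOYMENT_NAME",
    api_version="AZURE_API_VERSION",
    model_name="AZURE_MODEL_NAME",
    azure_api_key="AZURE_API_KEY",
)
```

These headers can be attached to any HTTP client call against the gateway. The request body stays in the usual OpenAI chat completion format.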

How to Pass JWT (JSON Web Tokens)

If you have configured fine-grained access for Azure OpenAI and need to use a JSON Web Token (JWT) in the Authorization header instead of the regular API key, you can use the forwardHeaders parameter to do this.

- Node

- Python
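For the Python SDK, the JWT setup amounts to passing the token as the Authorization value and listing that header for forwarding. The sketch below only assembles the keyword arguments, so it runs without the SDK installed. The Azure parameter names follow the Python SDK column of the key mapping, while `forward_headers` (the snake_case form of forwardHeaders), `provider="azure-openai"`, and the helper name are assumptions.

```python
def azure_jwt_client_kwargs(
    portkey_api_key: str,
    resource_name: str,
    deployment_id: str,
    api_version: str,
    model_name: str,
    jwt: str,
) -> dict:
    """Assemble client keyword arguments that forward a JWT to Azure OpenAI."""
    return {
        "api_key": portkey_api_key,
        "provider": "azure-openai",  # assumption: provider identifier for Azure OpenAI
        "azure_resource_name": resource_name,
        "azure_deployment_id": deployment_id,
        "azure_api_version": api_version,
        "azure_model_name": model_name,
        # The JWT replaces the regular API key in the Authorization header.
        "Authorization": f"Bearer {jwt}",
        # Assumption: snake_case form of forwardHeaders; forwards the header as-is.
        "forward_headers": ["Authorization"],
    }


jwt_kwargs = azure_jwt_client_kwargs(
    portkey_api_key="PORTKEY_API_KEY",
    resource_name="AZURE_RESOURCE_NAME",
    deployment_id="AZURE_DEPLOYMENT_NAME",
    api_version="AZURE_API_VERSION",
    model_name="AZURE_MODEL_NAME",
    jwt="JWT_TOKEN",
)

# With the SDK installed, these would typically be used as:
#   from portkey_ai import Portkey
#   client = Portkey(**jwt_kwargs)
```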