The Challenge: Anthropic’s “Successful” Failure

When Anthropic’s models are overloaded, they can send an overloaded_error message. For streaming responses, this error comes in the first chunk of the stream, but the HTTP response code is still 200 OK.

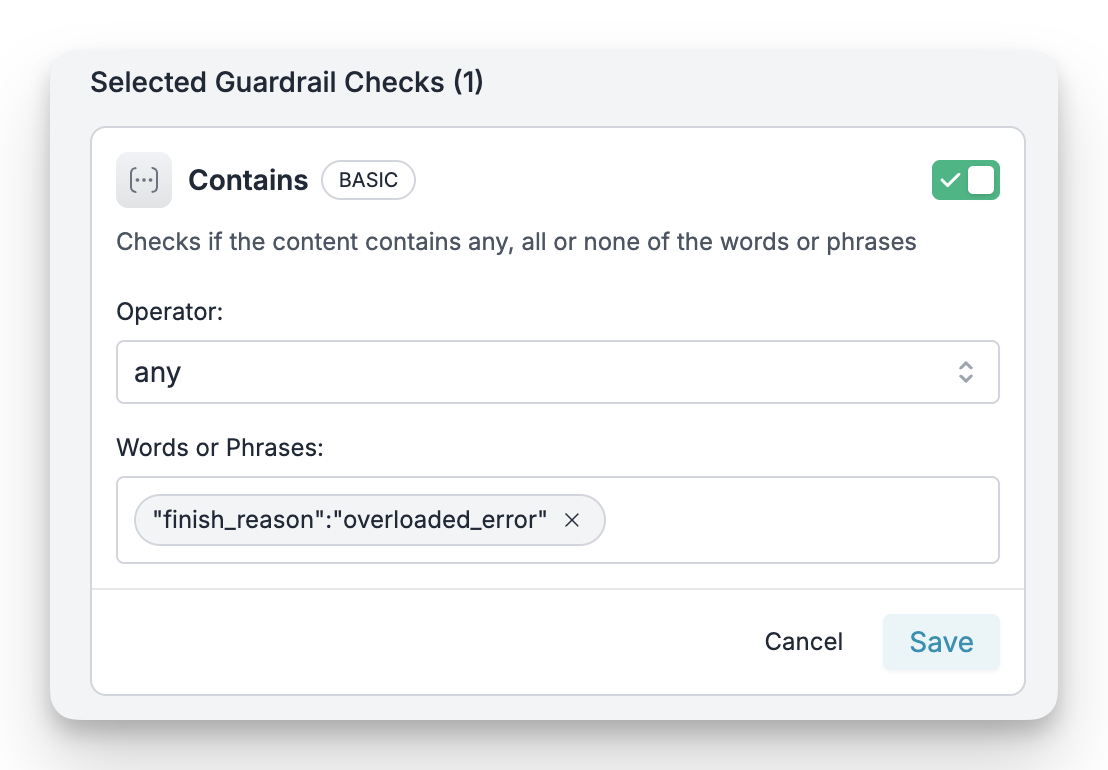

Anthropic overloaded_error response

Because the status code is 200, standard fallback logic (like Portkey’s on_status_codes) won’t trigger. Your application might interpret this as a successful but empty response, leading to a poor user experience.
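To make the failure mode concrete, here is a minimal sketch of how a client could recognize the error inside a stream chunk. It assumes the chunk arrives as a server-sent-event data line whose JSON payload nests the error type under an error object; the function name is_overloaded_event is hypothetical and not part of any SDK.

```python
import json


def is_overloaded_event(sse_line: str) -> bool:
    """Return True if an SSE `data:` line carries an overloaded_error payload.

    Assumed payload shape:
    {"type": "error", "error": {"type": "overloaded_error", ...}}
    """
    if not sse_line.startswith("data:"):
        return False  # e.g. `event: error` lines or keep-alive comments
    try:
        payload = json.loads(sse_line[len("data:"):].strip())
    except json.JSONDecodeError:
        return False  # not JSON, so it cannot be the error payload
    if not isinstance(payload, dict):
        return False
    error = payload.get("error")
    return isinstance(error, dict) and error.get("type") == "overloaded_error"
```

Parsing the JSON rather than matching a raw substring keeps the check independent of whitespace in the payload, while the HTTP status code (still 200) plays no role at all.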

The Strategy: Content-Aware Fallbacks

We’ll use Portkey’s Output Guardrails to inspect the content of the response stream. This allows us to create a rule that looks for the specific overloaded_error message and triggers a fallback, even when the status code is 200.

Here’s the plan:

- Create an Output Guardrail to detect the "type":"overloaded_error" string in the response.
- Build a Fallback Config that uses this guardrail to trigger a fallback to a different model (like GPT-4o).
- Implement and Verify the solution in your code.

The Recipe: A Step-by-Step Guide

Step 1: Create the Output Guardrail

First, we’ll create a simple guardrail on Portkey that scans the response for the specific error string.

- Navigate to the Guardrails page in your Portkey dashboard.
- Click Create Guardrail and configure it with the following settings:
  - Guardrail Type: Contains
  - Check: NONE
  - Words or Phrases: "type":"overloaded_error"

Why use NONE instead of ANY? The operator determines when the guardrail passes or fails:

- With ANY: The guardrail passes when the phrase IS found and fails when it’s NOT found. Since normal responses don’t contain "type":"overloaded_error", it would fail on every request and trigger a fallback unnecessarily.
- With NONE: The guardrail passes when the phrase is NOT found and fails when it IS found. Normal responses pass through, while responses containing the overloaded error trigger the fallback as intended.
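The difference between the two operators can be illustrated with a small local model of the check. This is only a sketch of the pass/fail semantics described above, not Portkey’s implementation; contains_check and the sample response strings are hypothetical.

```python
def contains_check(text: str, phrases: list[str], operator: str) -> bool:
    """Return True when the guardrail passes, False when it fails."""
    found = any(phrase in text for phrase in phrases)
    if operator == "ANY":
        return found       # passes only if a phrase IS present
    if operator == "NONE":
        return not found   # passes only if no phrase is present
    raise ValueError(f"unsupported operator: {operator}")


PHRASE = '"type":"overloaded_error"'
# Hypothetical response bodies, for illustration only.
normal = '{"type":"message","content":[{"type":"text","text":"Hi"}]}'
overloaded = '{"type":"error","error":{"type":"overloaded_error","message":"Overloaded"}}'

for label, body in [("normal", normal), ("overloaded", overloaded)]:
    for op in ("ANY", "NONE"):
        verdict = "pass" if contains_check(body, [PHRASE], op) else "fail"
        print(f"{label:10s} {op:4s} -> {verdict}")
```

With NONE, only the overloaded body fails the check, which is the single case where a fallback is wanted.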

- Give the guardrail a name (e.g., anthropic-overload-detector) and save it. Note down its ID (e.g., grl_...), as you’ll need it in the next step.

Step 2: Create the Fallback Config

Now, let’s define the fallback behavior. We’ll create a Portkey Config that links our new guardrail to a fallback strategy.

- Navigate to the Configs page and click Create Config.
- Paste the following JSON configuration:

Fallback Config

- output_guardrails: Attaches our detector guardrail to this config.
- strategy: Defines a fallback mode.
- on_status_codes: [246,446]: This is the key part. When our Output Guardrail detects the error string in a stream, Portkey internally assigns it status 446 or 246 based on your settings. This rule tells Portkey to trigger the fallback when that happens.
- targets: Defines the sequence of models to try. It will first attempt the primary Anthropic model and fall back to the OpenAI model if the guardrail is triggered.
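The fields described above could be assembled as in the following sketch, which builds the config as a Python dict and prints it as JSON for pasting. The top-level keys and the mode, status codes, and gpt-4o fallback come from the description; the shape of each target entry (provider, override_params) and the Anthropic model placeholder are assumptions that should be checked against Portkey’s config schema.

```python
import json

fallback_config = {
    "strategy": {
        "mode": "fallback",
        # Statuses assigned when the output guardrail is triggered.
        "on_status_codes": [246, 446],
    },
    # Replace with the guardrail ID noted in Step 1.
    "output_guardrails": ["grl_..."],
    "targets": [
        # Assumed target shape; tried in order, primary first.
        {
            "provider": "anthropic",
            "override_params": {"model": "<your-anthropic-model>"},
        },
        {
            "provider": "openai",
            "override_params": {"model": "gpt-4o"},
        },
    ],
}

print(json.dumps(fallback_config, indent=2))
```

The order of targets matters: the Anthropic entry comes first so that OpenAI is only used when the guardrail status appears in on_status_codes.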

- Save the Config and note down its ID (e.g., cfg_...).

Step 3: Implement in Your Code

Finally, let’s use this config in our application. Simply pass the Config ID when making your Portkey request.

If Anthropic returns an overloaded_error, the guardrail will catch it, and Portkey will automatically retry the request with your fallback model (OpenAI’s GPT-4o), ensuring your application remains operational.
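Passing the Config ID could look like the sketch below, which prepares a non-streaming chat request with only the standard library. The gateway URL and the x-portkey-api-key and x-portkey-config header names are assumptions to verify against Portkey’s API reference; build_request is a hypothetical helper, and the body relies on each target’s model being set in the config.

```python
import json
import urllib.request

# Assumed gateway endpoint; confirm against your Portkey setup.
PORTKEY_URL = "https://api.portkey.ai/v1/chat/completions"


def build_request(api_key: str, config_id: str, prompt: str) -> urllib.request.Request:
    """Prepare (but do not send) a non-streaming request routed by a Config."""
    body = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
        # No "stream": True here, since this approach targets non-streaming calls.
    }
    return urllib.request.Request(
        PORTKEY_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-portkey-api-key": api_key,
            "x-portkey-config": config_id,  # the cfg_... ID from Step 2
        },
        method="POST",
    )
```

Sending the prepared request with urllib.request.urlopen(req), or with any other HTTP client, returns whichever target’s response Portkey settled on; the application code does not need to know a fallback occurred.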

Remember that this approach only works with non-streaming requests. For streaming use cases, consider implementing client-side error detection or using alternative resilience strategies.
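For streaming, one possible form of client-side detection is to peek at the first chunk before handing the stream to the rest of the application. The sketch below is an illustration under stated assumptions: chunks are strings, the error appears in the first chunk, and stream_with_fallback and its two callables are hypothetical names rather than part of any SDK.

```python
from itertools import chain
from typing import Callable, Iterable, Iterator

OVERLOAD_MARKER = "overloaded_error"


def stream_with_fallback(
    primary: Callable[[], Iterable[str]],
    fallback: Callable[[], Iterable[str]],
) -> Iterator[str]:
    """Yield chunks from primary, switching to fallback if the first chunk is an overload error."""
    stream = iter(primary())
    first = next(stream, None)
    if first is None:
        return iter(())  # empty stream: nothing to inspect or yield
    if OVERLOAD_MARKER in first:
        return iter(fallback())  # discard the failed stream entirely
    # Healthy stream: put the peeked chunk back in front of the rest.
    return chain([first], stream)
```

Because only the first chunk is inspected, nothing has been shown to the user yet when the switch happens, so the fallback response can be streamed from the start.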

Verifying the Fallback

You can confirm the fallback is working by checking your Portkey logs.

- Go to the Logs page in your Portkey dashboard.
- You should see two requests for a single incoming call:
  - The first request to Anthropic will have a status of output_guardrail_triggered (Status Code 446).
  - The second request to your fallback provider (OpenAI) will have a status of success.